ASU faculty warn against hidden AI prompts in assignments over integrity and accessibility risks

Arizona State University guidance highlights limits of AI detection tactics as institutions rethink assessment strategies.

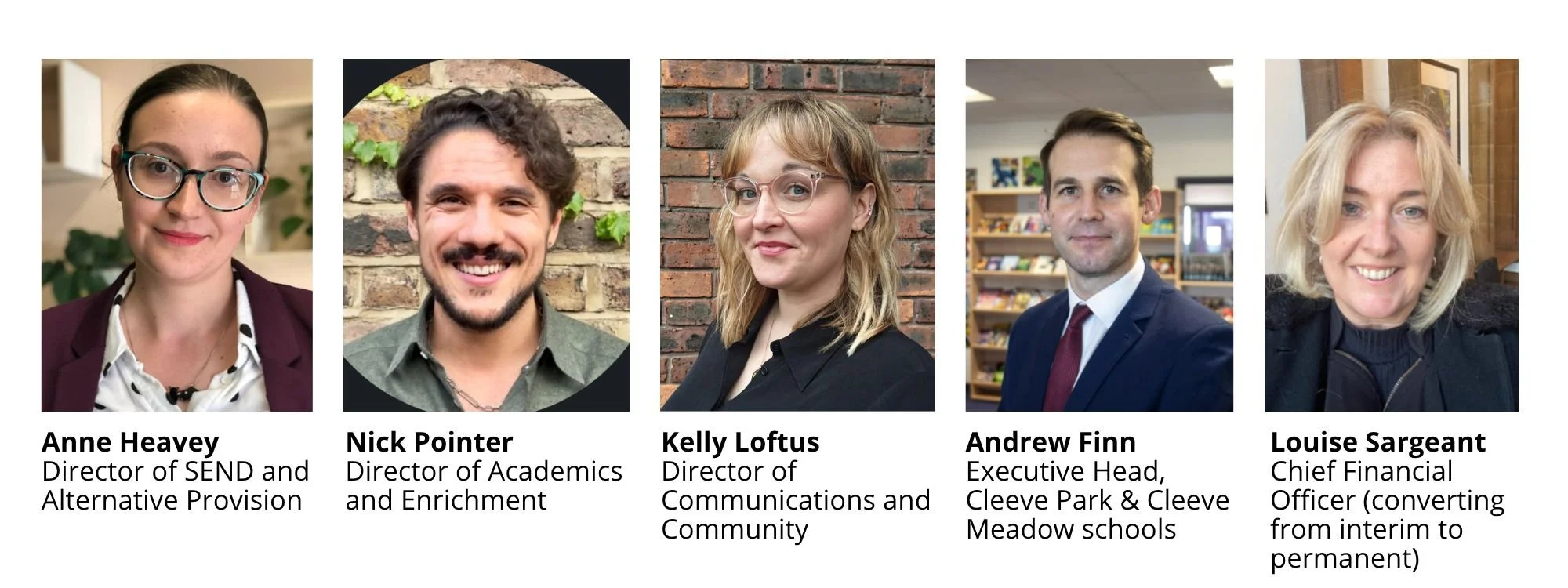

ASU faculty highlight challenges in AI detection as universities shift toward assessment models that account for generative AI use

Arizona State University faculty are advising against the use of hidden AI prompts in assignments, warning that the approach does not produce reliable evidence of misconduct and may introduce accessibility risks for students.

The guidance, developed within the College of Integrative Sciences and Arts (CISA), comes as universities continue to test ways of identifying AI-generated work while maintaining academic integrity standards.

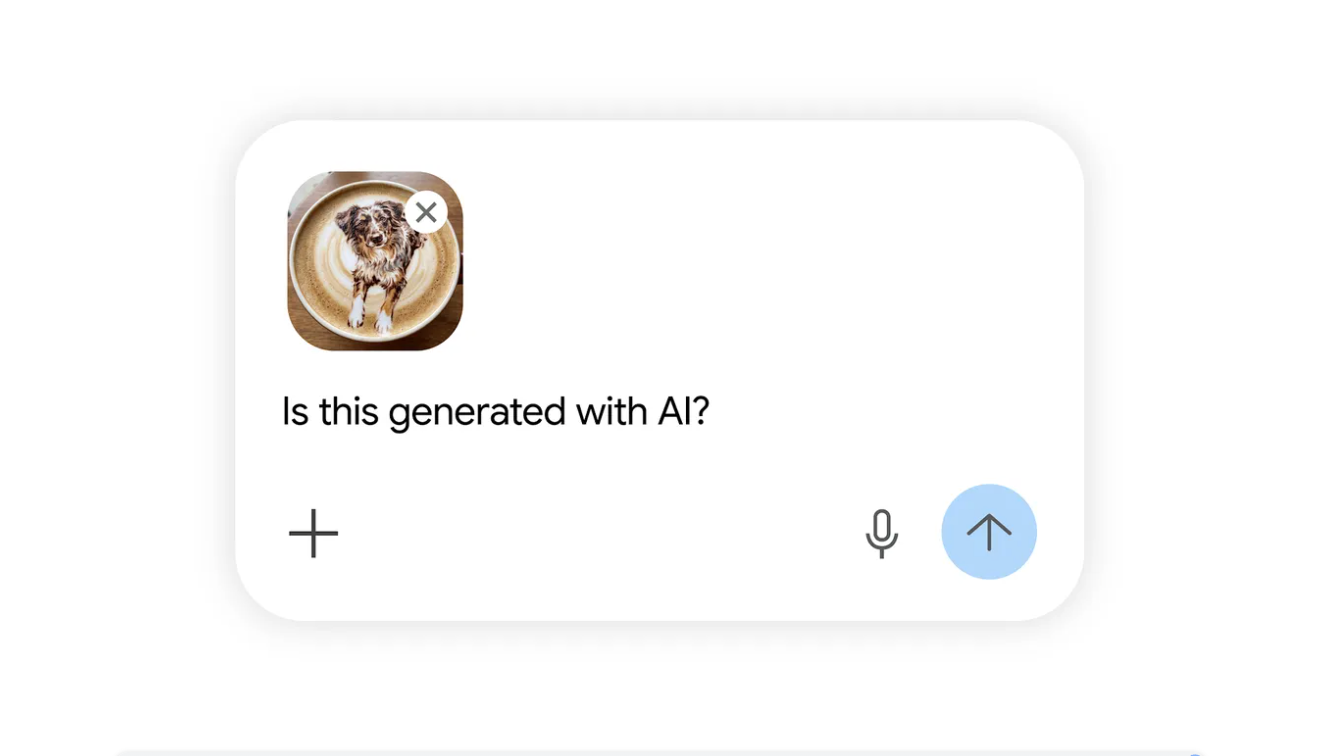

The tactic involves embedding invisible text or instructions in assignment materials, with the aim of altering an AI tool's output if a student pastes the content into it. Faculty involved in the guidance say the method is ineffective and may create unintended consequences for both students and instructors.

Detection approach fails to produce usable evidence

In a LinkedIn post, Adam Pacton, Dean’s Fellow for AI Literacy and Integration at ASU, said the approach does not meet the threshold required for academic integrity cases.

He wrote, “First, in our college ‘evidence’ of AI use generated through hidden prompts isn’t sufficient for a formal academic integrity inquiry. It’s a trap that doesn’t ‘catch’ anything.”

The guidance explains that AI systems do not respond consistently to hidden instructions. The same prompt may be ignored, partially followed, or reproduced in ways that are indistinguishable from standard student writing. As a result, the presence of specific phrases or outputs cannot be treated as conclusive proof of misconduct.

It also notes that hidden prompts capture only a narrow set of behaviors, chiefly students who copy and paste assignment text directly into AI tools, while students who use AI in other ways may avoid detection entirely.

Accessibility and compliance risks highlighted

Beyond reliability, the guidance raises concerns about accessibility. Text that is hidden visually in digital materials can still be read aloud by screen readers and other assistive technologies, potentially exposing the instructions to some students but not others.

Pacton wrote, “Second, invisible text in digital course materials may violate the 2024 federal ADA Title II rule. Screen readers can surface hidden instructions in confusing ways to students using assistive technology. That’s not a quirk of detection failure; that’s an accessibility failure.”

This creates the risk of inconsistent student experiences within the same assignment, particularly for learners using accessibility tools, and introduces potential compliance issues under federal accessibility standards.

Impact on classroom trust and learning environment

The guidance also points to the broader classroom impact of detection-based tactics. Hidden prompts may create what faculty describe as an “adversarial” dynamic, where students perceive instructors as attempting to catch them rather than support learning.

It notes that some students are already aware of these techniques, which reduces their effectiveness as a deterrent and may increase skepticism about assessment practices.

The result, according to the guidance, is limited benefit in detecting AI use, alongside potential reputational and pedagogical costs.

Shift toward assignment redesign and transparency

Instead of detection methods, the guidance recommends redesigning assignments to make AI offloading more difficult or less relevant. Suggested approaches include requiring personal reflection, incorporating staged drafts with revision tracking, and embedding work in class-based discussion.

It also encourages instructors to build in verification points, where students explain their process in their own words, and to clearly define how AI tools can be used within each assignment.

Pacton wrote that the work is not formal policy but part of an ongoing effort to develop more effective approaches across the college, describing it as “us learning out loud, working across roles, and trying to do right by students and colleagues alike within the college, the university, and the larger sector.”