Google DeepMind AI curriculum targets university students building language models from scratch

A new course pathway from Google DeepMind teaches students to understand, build, and fine-tune language models, with an emphasis on practical development and responsible AI use.

Google DeepMind has introduced an AI Research Foundations curriculum for university students, setting out a structured pathway for learning how modern language models are developed and applied.

The program targets learners with Python experience studying computer science, mathematics, physics, or related technical disciplines, and centers on hands-on model building rather than high-level theory.

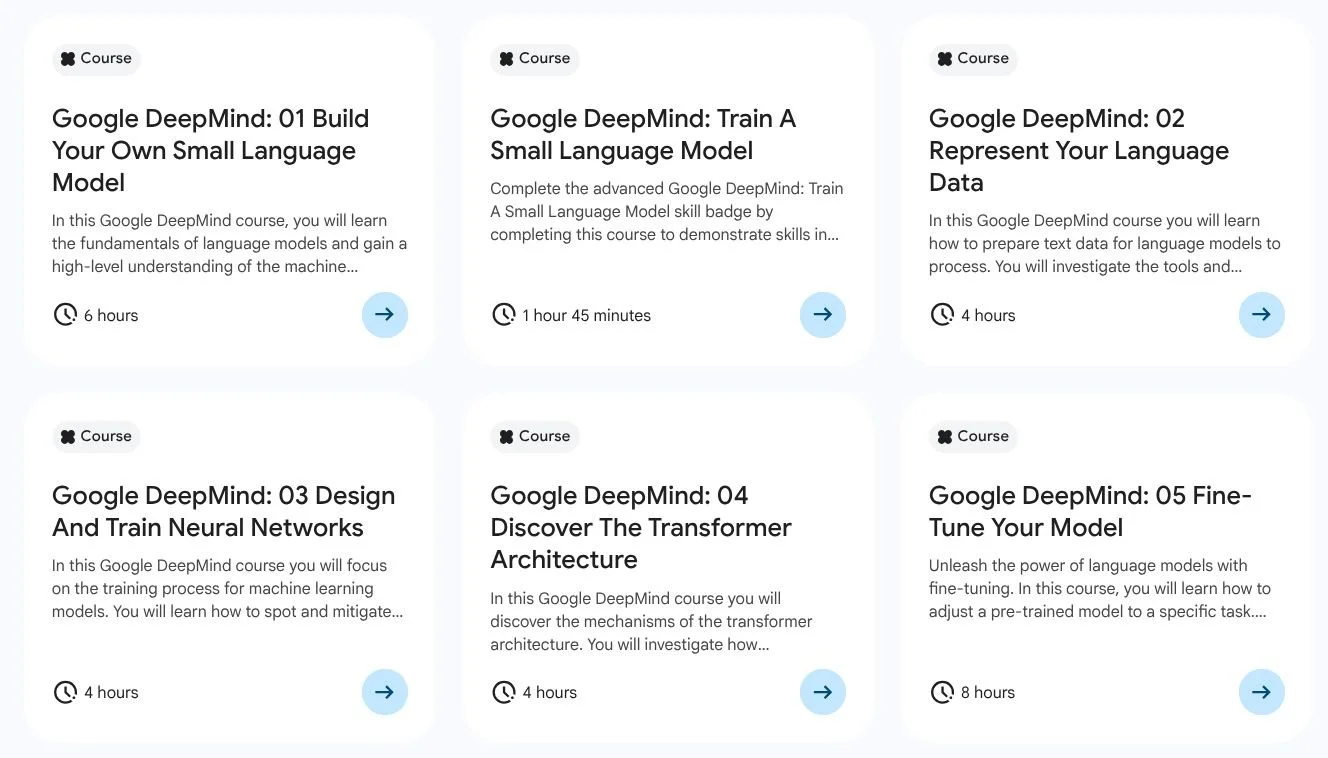

The curriculum outlines a series of courses designed to build technical capability in areas such as tokenization, neural networks, and transformer architectures. It reflects ongoing demand for skills linked to large language models and systems such as Google Gemini, while also addressing how those systems are trained and adapted in practice.

Focus on building and training models

The course pathway includes modules such as “Build Your Own Small Language Model” and “Train A Small Language Model,” which guide students through the fundamentals of machine learning pipelines and model development. Learners are introduced to both traditional n-gram approaches and transformer-based models, with coding labs used to demonstrate how systems generate text and identify patterns in language.
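For readers unfamiliar with the n-gram approach mentioned above, the idea can be sketched in a few lines of Python. This is an illustrative bigram model, not material taken from the DeepMind labs; the toy corpus and the function names `train_bigram` and `generate` are assumptions made for the example.

```python
import random
from collections import Counter, defaultdict


def train_bigram(text):
    """Count how often each word is followed by each other word."""
    tokens = text.lower().split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, start, length=8, seed=0):
    """Sample up to `length` words, each drawn in proportion to how often
    it followed the previous word in the training text."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        followers = counts.get(out[-1])
        if not followers:
            break  # the last word was never followed by anything in training
        words = list(followers)
        weights = [followers[w] for w in words]
        out.append(rng.choices(words, weights=weights, k=1)[0])
    return " ".join(out)


# Hypothetical toy corpus, chosen only for illustration.
corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigram(corpus)
print(generate(model, "the"))
```

A model like this only ever looks one word back, which is the limitation that transformer-based models are designed to overcome.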

Later modules shift toward deeper technical implementation, including dataset preparation, tokenization, and embedding techniques. Students are expected to work with vectors and matrices, analyze how meaning is represented in models, and consider how data decisions can introduce bias.

The curriculum also covers neural network training processes, including how to identify and address issues such as overfitting and underfitting. Practical exercises include implementing classification models and understanding backpropagation, providing a foundation for more advanced model development work.

From transformer models to fine-tuning techniques

A dedicated module on transformer architecture examines how models process prompts and generate context-aware outputs, including attention mechanisms and multi-head attention. This is followed by content on fine-tuning, where students learn how to adapt pre-trained models for specific tasks.
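The attention mechanism at the heart of this module can be illustrated with a minimal sketch of scaled dot-product attention, written here in plain Python so that each step is visible. It is not drawn from the course itself; the function names and the tiny example vectors are assumptions, and real implementations use tensor libraries and learned projection matrices.

```python
import math


def softmax(xs):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors.

    For each query, score every key by dot product, scale by sqrt(d_k),
    normalize with softmax, and return the weighted average of the values.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical 2-dimensional vectors for three tokens (self-attention,
# so queries, keys, and values all come from the same tokens).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Multi-head attention runs several such computations in parallel, each on a different learned projection of the inputs, and combines the results.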

The course covers both full-parameter fine-tuning and parameter-efficient methods such as LoRA, and introduces reinforcement learning as an alternative to supervised approaches. Additional modules explore how to scale model training using GPU resources and how to manage computational and memory requirements.
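To show why LoRA counts as parameter-efficient, the sketch below compares the number of trainable values in a full weight update with those in a low-rank update, and applies one such update to a toy matrix. It follows the general LoRA formulation, in which a frozen weight matrix W is adjusted by a scaled product of two small matrices B and A. The layer sizes, rank, and function names are assumptions for illustration, not figures from the curriculum.

```python
def lora_param_counts(d_out, d_in, rank):
    """Trainable parameters: full fine-tuning vs. a rank-`rank` LoRA update."""
    full = d_out * d_in            # every entry of W is trained
    lora = rank * (d_out + d_in)   # only B (d_out x rank) and A (rank x d_in)
    return full, lora


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def apply_lora(W, B, A, alpha, rank):
    """Return W + (alpha / rank) * (B @ A), leaving W itself unchanged."""
    scale = alpha / rank
    delta = matmul(B, A)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(W, delta)]


# Hypothetical layer size and rank, chosen only to show the scale of savings.
full, lora = lora_param_counts(d_out=1024, d_in=1024, rank=8)
print(full, lora, f"{100 * lora / full:.2f}%")

# Toy example: a 2x2 frozen weight with a rank-1 update.
W = [[1.0, 0.0], [0.0, 1.0]]
B = [[1.0], [2.0]]   # 2 x 1
A = [[0.5, 0.0]]     # 1 x 2
print(apply_lora(W, B, A, alpha=1, rank=1))
```

In this hypothetical case the low-rank update trains under 2% of the values that full fine-tuning would, which is where the savings in memory and compute come from.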

Alongside technical skills, the curriculum incorporates content on responsible AI development. This includes ethical dataset design, stakeholder mapping, and consideration of environmental factors such as energy use and resource impact.

The program uses skill badges to validate practical knowledge, with learners completing hands-on labs and challenge-based assessments. Courses are available through a credit-based system, with a monthly allocation allowing users to access labs without upfront cost.