Former UK government AI advisor highlights £40m shift toward long-term research

A former UK government AI strategy lead has pointed to a new £40 million research lab as a move away from scaling-led AI development, with implications for how future systems are built and studied.

Oliver Purnell, who works in Strategic Partnerships at the Incubator for Artificial Intelligence and was previously AI Strategy Lead at the Department for Science, Innovation and Technology, has positioned the UK government's new Fundamental AI Research Lab as a deliberate move toward foundational research that commercial AI labs are less likely to pursue.

Drawing on his experience leading national security and engagement work within the Sovereign AI Unit, and previously serving as Senior AI Advisor in the Cabinet Office, Purnell framed the investment as part of a broader rethink in how AI progress is being approached at a national level.

Focus shifts beyond scaling AI models

In a LinkedIn post, Purnell said: “The UK just made a £40m bet that the next generation of AI won't come from scaling alone.”

He pointed to the lab’s focus on structural challenges that continue to limit current AI systems, including hallucinations, unreliable memory, and unpredictable reasoning.

Rather than extending existing models with more data and compute, the lab is intended to explore new approaches to how AI systems are designed and built.

The initiative is backed by the Department for Science, Innovation and Technology and UK Research and Innovation, with up to £40 million allocated over six years.

In addition to funding, the lab will provide access to large-scale compute through the UK’s AI Research Resource, including around two million GPU hours per year.

At least ten PhD studentships will also be funded, linking research output with future skills development.

Purnell said the program targets areas "that commercial AI labs aren't pursuing because the timescales are too long and the risks too high," positioning the work as complementary to industry-led development rather than competitive with it.

Government strategy and research priorities

The lab is one of the first major deliverables under UKRI’s AI Strategy, which is backed by more than £1.6 billion in funding over four years. The strategy outlines plans to strengthen the UK’s capabilities in mathematics, computer science, and engineering, alongside improving access to infrastructure, tools, and training.

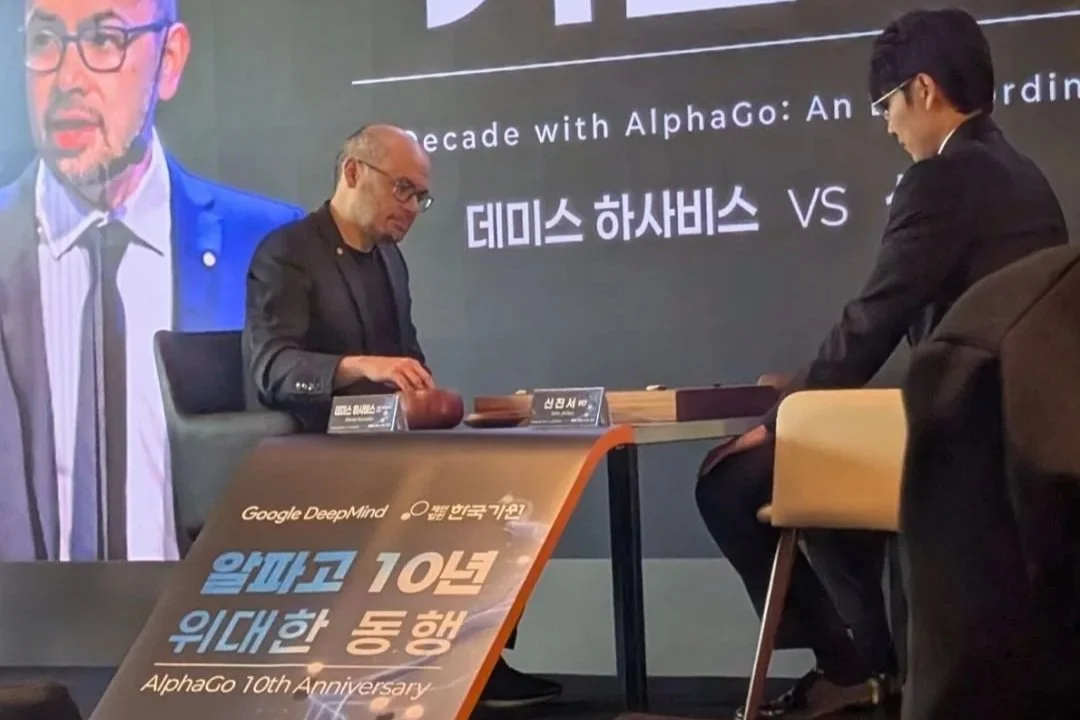

The government has opened a national call for proposals, inviting researchers across the UK to submit high-risk, high-reward ideas that could address longstanding limitations in AI systems. Proposals will be assessed by a peer review panel chaired by Raia Hadsell, Vice President of Research at Google DeepMind and a UK government AI Ambassador.

Purnell highlighted the structure of the panel as significant, describing it as "a deliberate bridge between frontier industry, UK academia and government," meaning that those setting research priorities are closely connected to current advances in AI development.

Alongside the lab, existing UKRI-backed projects demonstrate the types of outcomes the government is targeting. These include AI systems used to detect faults in railway infrastructure in real time, as well as machine learning platforms supporting clinical research and drug development in areas such as Alzheimer’s, Parkinson’s, and Huntington’s disease.

Implications for research, education, and capability

Purnell framed the investment as a signal that the UK is focusing on areas where it has established depth, particularly in long-horizon academic research. He said: “This is the UK doubling down on where it has genuine depth - the kind of long-horizon, high-risk research that world-class universities are built for and commercial labs aren't incentivised to pursue.”

The lab’s structure combines funding, compute access, and PhD training, creating a pipeline that links research activity with skills development. For universities and EdTech providers, this may influence how AI research pathways are designed, particularly as demand grows for expertise in model architecture, evaluation, and reliability.

The focus on foundational challenges also points to a shift in how AI progress is being measured. Rather than prioritizing short-term performance gains, the program centers on reliability, transparency, and system-level improvements that could underpin future applications across healthcare, transport, science, and public services.