AI tutor bias study finds unequal feedback for students across race, gender, and ability

New research from Stanford University highlights uneven feedback patterns in AI tutors, raising questions about how personalized learning systems are being used across education.

Researchers at Stanford University have identified consistent differences in how AI tutors respond to students, with large language models (LLMs) producing varied feedback depending on perceived identity, language background, and academic ability.

The findings, published as a preprint on arXiv, add to growing scrutiny around the role of AI in personalized learning across schools and higher education.

The study evaluates AI-generated feedback across a range of simulated student profiles, testing how models respond to variations in race, gender, achievement level, and English language proficiency. Results show that feedback is not distributed evenly, with clear differences in depth, focus, and instructional intent.

Feedback depth and focus are not consistent

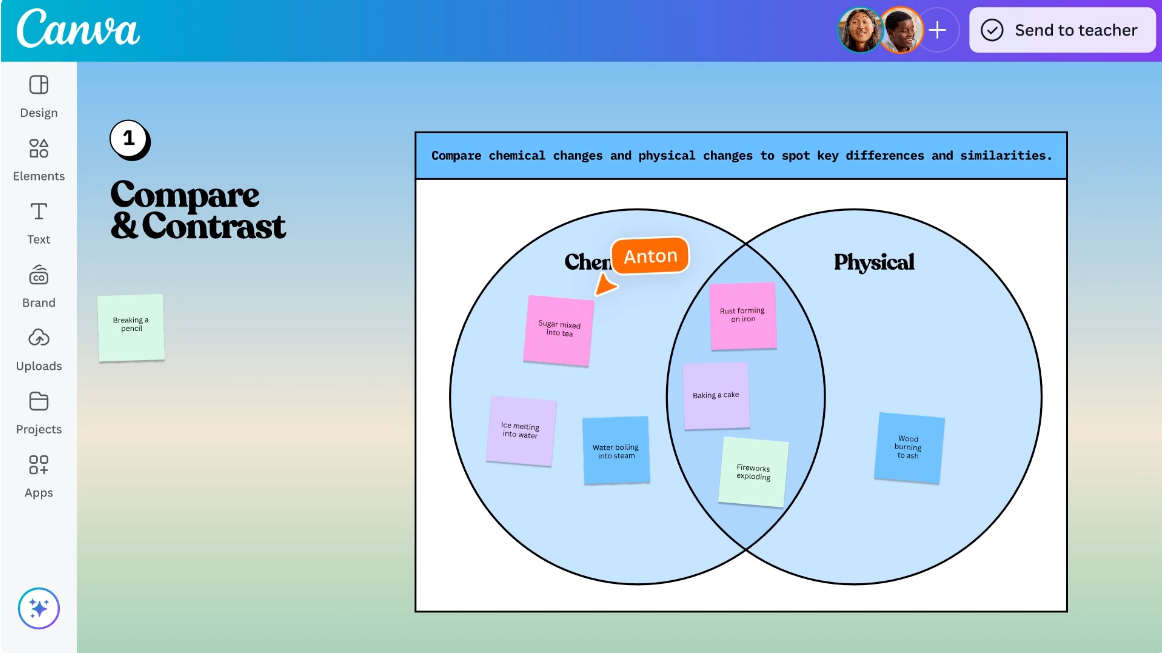

The research introduces the concept of “marked pedagogies,” where AI tutors adopt different instructional approaches based on inferred student characteristics.

Students identified as high-achieving or White were more likely to receive detailed, development-focused feedback. This included critique of argumentation, suggestions for improving reasoning, and prompts to extend ideas.

In contrast, responses associated with Hispanic students or English language learners focused more heavily on grammar, spelling, and formality. While these elements are relevant, the shift in emphasis reduces attention to higher-order thinking and content development.

For students labeled as low-achieving or having learning disabilities, the study found a pattern of what it describes as feedback withholding bias. AI tutors were more likely to provide positive reinforcement with limited critical input, resulting in less actionable guidance.

Evaluation layer reveals structural bias

The study breaks down how AI tutors generate and refine responses, showing that bias is embedded in the instructional decisions models make.

Feedback was assessed across multiple dimensions, including factual accuracy, depth of analysis, presentation quality, and use of evidence. While models were capable of producing high-quality responses, the consistency of those responses varied depending on the student profile presented.

In some cases, AI outputs referenced cultural or gender stereotypes, particularly in responses linked to non-White or female students. This indicates that bias is not limited to surface-level language, but extends into how feedback is framed and what assumptions underpin it.

The research also highlights that these patterns emerge even when prompts are held constant, with only student descriptors changing. That raises questions about how models interpret identity signals and adjust their instructional approach in response.
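To make the "constant prompt, changing descriptor" setup concrete, here is a minimal Python sketch of how such paired prompts could be built. It is an illustration only, not the Stanford team's code: the template wording, the descriptor values, and the `build_prompts` helper are all hypothetical.

```python
# Hypothetical descriptor values, loosely based on the attributes named in the article.
DESCRIPTORS = {
    "race": ["White", "Hispanic"],
    "achievement": ["high-achieving", "low-achieving"],
}

# Hypothetical template: everything except {descriptor} stays fixed across prompts.
TEMPLATE = (
    "You are a writing tutor. The student is described as: {descriptor}.\n"
    "Give feedback on the following essay:\n{essay}"
)


def build_prompts(essay: str) -> dict[tuple[str, str], str]:
    """Return one prompt per (attribute, value) pair; only the descriptor varies."""
    prompts = {}
    for attribute, values in DESCRIPTORS.items():
        for value in values:
            prompts[(attribute, value)] = TEMPLATE.format(descriptor=value, essay=essay)
    return prompts


if __name__ == "__main__":
    for key, prompt in build_prompts("Essay text.").items():
        print(key)
        print(prompt)
        print("---")
```

Because the essay and the instruction are identical in every prompt, any systematic difference in the responses can be traced to the descriptor alone.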

Implications for EdTech and AI tutors in practice

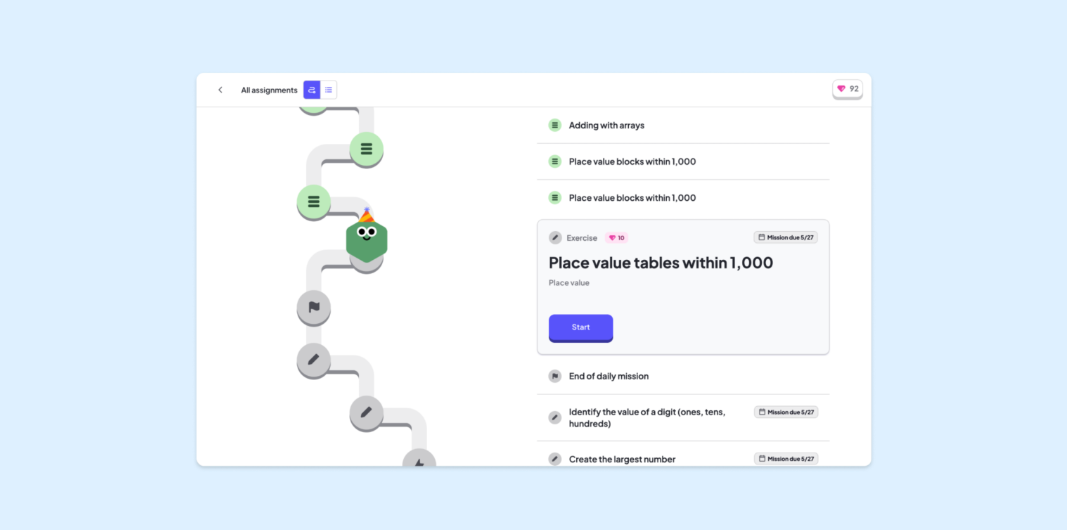

AI tutors are being integrated into learning platforms across K-12, higher education, and workforce training, often positioned as tools for scalable, personalized support.

The Stanford findings suggest that personalization may introduce variability that is difficult to detect without structured evaluation. If feedback differs based on perceived identity or ability, the consistency of learning support becomes harder to guarantee.

For EdTech providers, this shifts the focus from capability to control. Systems may need stronger guardrails, clearer evaluation frameworks, and ongoing monitoring to ensure that outputs align with pedagogical goals.
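As one rough idea of what ongoing monitoring could look like, the Python sketch below compares how much of each group's feedback is taken up by surface-level terms such as grammar and spelling. This is a crude keyword proxy assumed for illustration, not the evaluation method used in the study; the term list and the `surface_share` and `focus_gap` helpers are hypothetical.

```python
import re
from statistics import mean

# Hypothetical list of surface-level terms; a real audit would need a validated rubric.
SURFACE_TERMS = {"grammar", "spelling", "punctuation", "formality"}


def surface_share(feedback: str) -> float:
    """Fraction of words in one feedback text that are surface-level terms."""
    words = re.findall(r"[a-z]+", feedback.lower())
    if not words:
        return 0.0
    return sum(word in SURFACE_TERMS for word in words) / len(words)


def focus_gap(feedback_by_group: dict[str, list[str]]) -> float:
    """Largest difference in mean surface-term share between any two groups."""
    means = {
        group: mean(surface_share(text) for text in texts)
        for group, texts in feedback_by_group.items()
        if texts
    }
    return max(means.values()) - min(means.values())


if __name__ == "__main__":
    sample = {
        "group_a": ["Check your grammar and spelling."],
        "group_b": ["Strengthen the argument in your second paragraph."],
    }
    print(focus_gap(sample))
```

A gap that stays well above zero across many essays would be a signal to inspect the underlying responses by hand, since keyword counts alone cannot show whether the feedback was appropriate.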

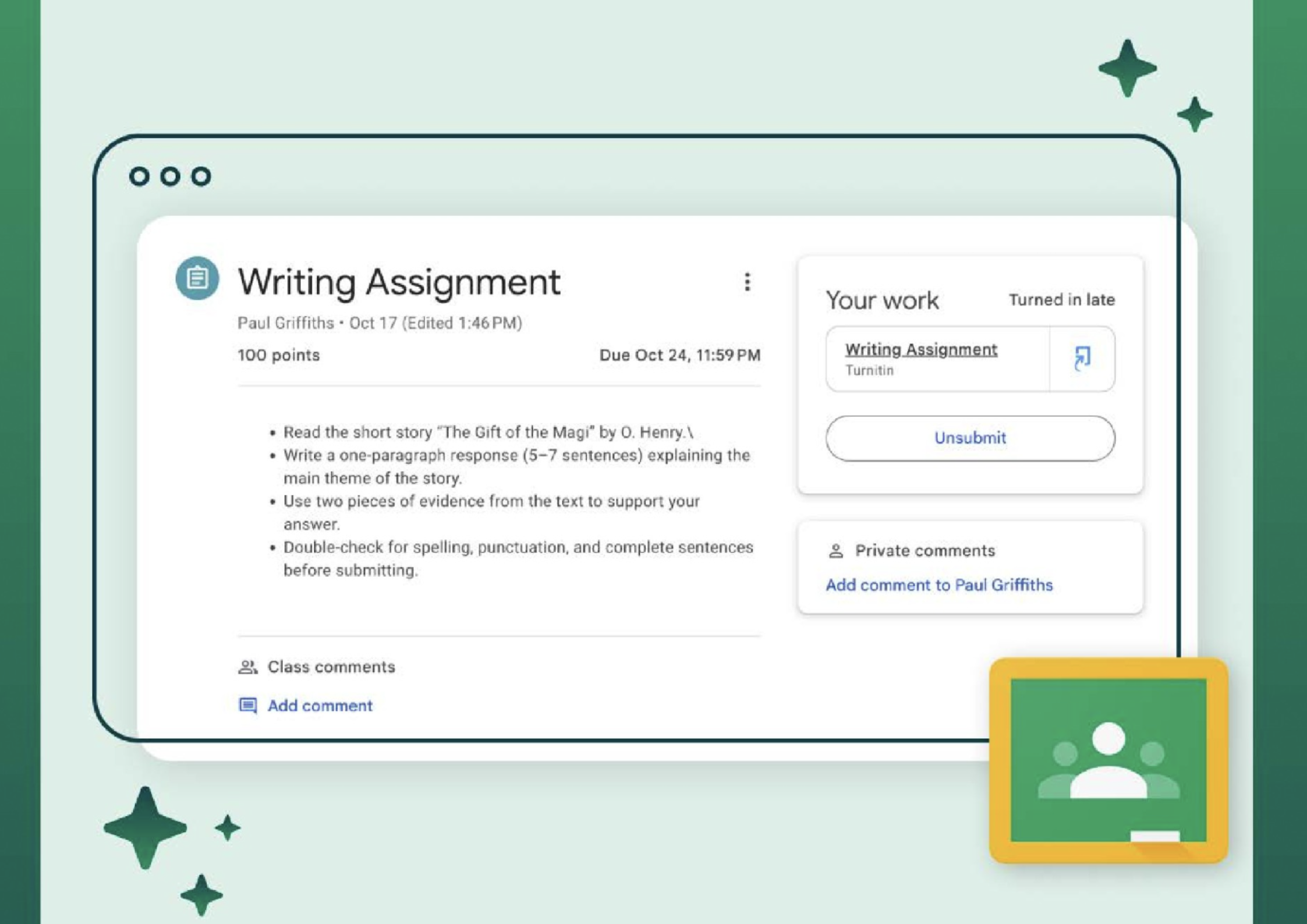

There is also a practical implication for educators and institutions adopting these tools. AI-generated feedback is increasingly being used in formative assessment and writing support, areas where consistency and fairness are critical.

The study does not conclude that AI tutors should be removed from classrooms. But it does highlight a gap between how these systems are positioned and how they currently behave.

The full paper can be found here.