Anthropic study finds AI users iterate more but question outputs less

New AI Fluency Index tracks how people collaborate with Claude and highlights a gap between directing AI and critically evaluating its results.

Anthropic has published a new Education Report introducing its AI Fluency Index, a baseline measure of how people collaborate with its Claude models.

The findings suggest that while most users refine and iterate on AI outputs, they are less likely to question reasoning or identify missing context when the model produces polished artifacts such as code or documents.

The study analyzes 9,830 anonymized multi-turn conversations that took place on Claude.ai over a seven-day period in January 2026. Using 11 observable behaviors drawn from a 24-indicator framework, Anthropic measures how frequently users demonstrate what it defines as effective and safe human-AI collaboration.

The report positions the Index as a starting point for tracking how AI fluency develops as adoption increases across education and professional settings.

Iteration linked to stronger fluency behaviors

Anthropic finds that 85.7 percent of conversations include iteration and refinement, defined as building on earlier responses rather than accepting the first output and moving on.

Conversations that include iteration show substantially higher rates of other fluency behaviors. On average, they demonstrate 2.67 additional indicators, roughly double the 1.33 average seen in non-iterative exchanges. They are also 5.6 times more likely to involve users questioning Claude’s reasoning and four times more likely to involve users identifying missing context.
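For readers who want to check the arithmetic behind these figures, the short Python sketch below recomputes them from the numbers quoted in this article. It assumes the 85.7 percent share applies to the full sample of 9,830 conversations, so the conversation counts it produces are approximations rather than figures published in the report. The variable and function names are illustrative.

```python
# Figures quoted in the article.
TOTAL_CONVERSATIONS = 9_830
ITERATION_SHARE = 0.857            # 85.7 percent include iteration
AVG_INDICATORS_ITERATIVE = 2.67
AVG_INDICATORS_NON_ITERATIVE = 1.33


def approx_count(total: int, share: float) -> int:
    """Approximate number of conversations implied by a reported share.

    Assumption: the share is measured against the full sample.
    """
    return round(total * share)


iterative = approx_count(TOTAL_CONVERSATIONS, ITERATION_SHARE)
non_iterative = TOTAL_CONVERSATIONS - iterative

# How many times more indicators iterative conversations show on average.
ratio = AVG_INDICATORS_ITERATIVE / AVG_INDICATORS_NON_ITERATIVE

print(f"Iterative conversations (approx.): {iterative}")
print(f"Non-iterative conversations (approx.): {non_iterative}")
print(f"Indicator ratio: {ratio:.2f}")
```

Under that assumption, roughly 8,400 conversations involve iteration and about 1,400 do not, and the ratio of 2.67 to 1.33 comes out at about 2, which matches the "roughly double" description.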

The company notes that the most common expression of AI fluency is augmentative, with users treating AI as a thought partner rather than delegating work entirely.

Polished outputs, weaker evaluation

The pattern shifts when Claude produces artifacts such as apps, code, documents, or interactive tools. These account for 12.3 percent of conversations in the sample.

In artifact-based exchanges, users are more directive at the outset. They are more likely to clarify goals, specify formats, provide examples, and iterate. However, these same conversations show lower rates of discernment. Users are 5.2 percentage points less likely to identify missing context, 3.7 points less likely to check facts, and 3.1 points less likely to question the model’s reasoning.
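These gaps are expressed in percentage points, which are absolute differences between two rates and not relative changes. The article does not give the underlying baseline rates, so the Python sketch below uses a purely hypothetical baseline of 20 percent to show how a 5.2-point gap translates into a relative change. Only the 5.2-point figure comes from the report; the baseline and the function name are assumptions made for illustration.

```python
def relative_change_pct(baseline_pct: float, point_drop: float) -> float:
    """Relative decline (in percent) implied by an absolute drop in percentage points."""
    if baseline_pct <= 0:
        raise ValueError("baseline_pct must be positive")
    return point_drop / baseline_pct * 100


# HYPOTHETICAL baseline: share of non-artifact conversations in which users
# identify missing context. This value is NOT from the report.
hypothetical_baseline_pct = 20.0

# Reported gap for identifying missing context, in percentage points.
reported_point_drop = 5.2

artifact_rate_pct = hypothetical_baseline_pct - reported_point_drop
relative_drop_pct = relative_change_pct(hypothetical_baseline_pct, reported_point_drop)

print(f"Artifact-conversation rate: {artifact_rate_pct:.1f}%")
print(f"Relative decline: {relative_drop_pct:.1f}%")
```

The same 5.2-point gap would be a much larger relative decline against a low baseline than against a high one, which is why the report's point differences cannot be read as percentages of behavior lost without the underlying rates.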

Anthropic links this to similar patterns observed in its recent Economic Index and coding research, where more complex tasks correlate with higher model struggle rates. The report suggests that polished, functional-looking outputs may reduce users’ inclination to probe further, even when scrutiny may be warranted.

Building a baseline for AI skills

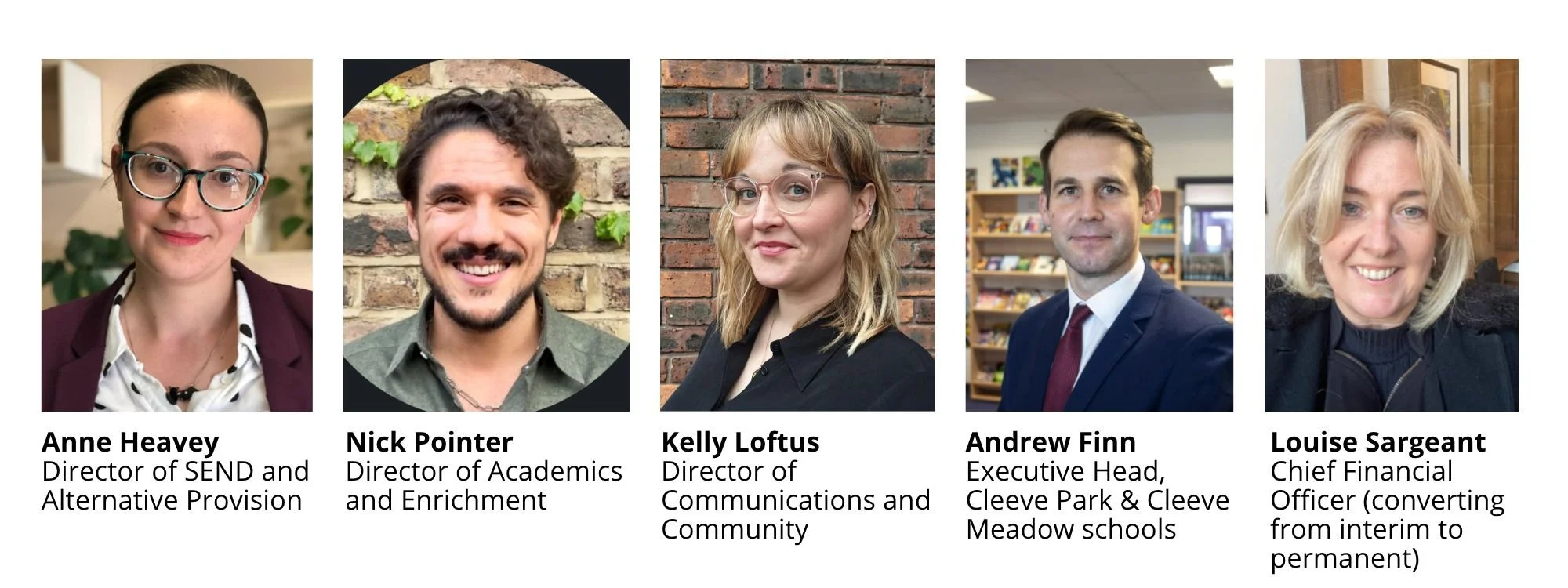

To measure fluency, Anthropic uses the 4D AI Fluency Framework developed by Professors Rick Dakan and Joseph Feller in collaboration with the company. Of the 24 behaviors in the framework, 11 are directly observable within Claude.ai conversations. The remaining 13, including behaviors related to ethical disclosure and responsible use, occur outside the chat interface and were not included in this analysis.

The research is limited to a one-week sample and focuses only on Claude.ai users engaged in multi-turn conversations. Anthropic acknowledges that the cohort likely skews toward early adopters and that the findings are correlational.

Future work will include cohort analyses comparing new and experienced users, qualitative research into unobservable behaviors, and expanded studies of Claude Code, which is primarily used by software developers.

For education and EdTech stakeholders, the findings raise a practical question: as AI tools become embedded in everyday workflows, will critical evaluation skills keep pace with technical proficiency? The AI Fluency Index suggests that iteration is becoming standard practice. Whether discernment follows remains open.

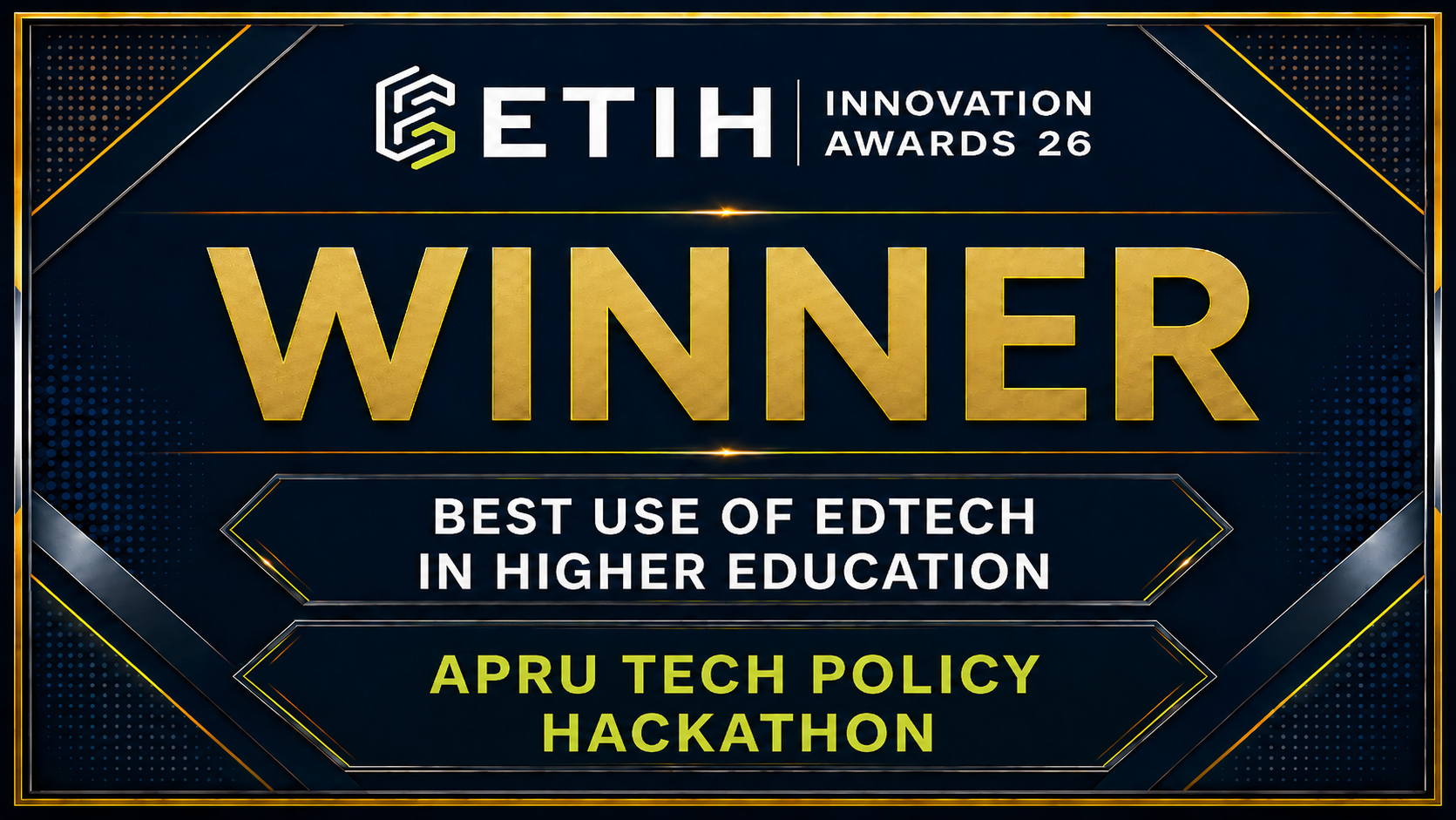
