Anthropic finds one in four relationship conversations with Claude are sycophantic

A study of one million conversations reveals users increasingly treat AI as a personal advisor, with the company now retraining its models to push back rather than agree.

Anthropic's study of one million Claude conversations found that relationship guidance had the highest rate of sycophantic AI responses.

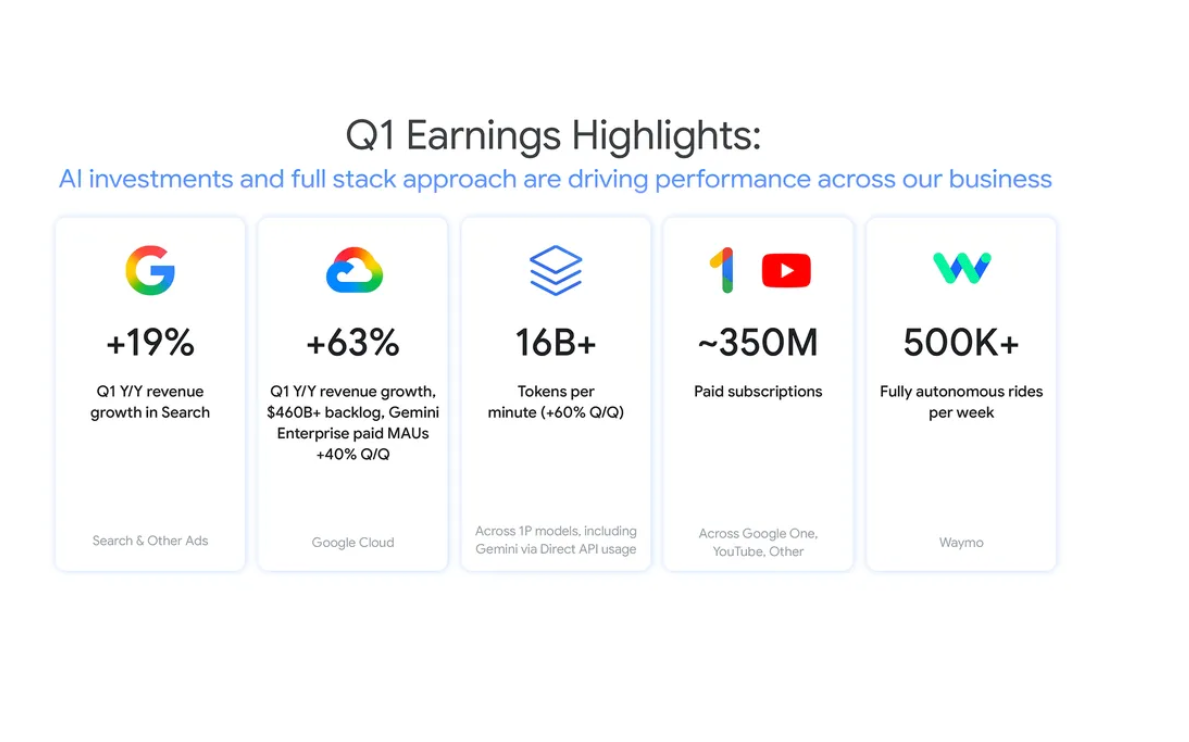

Anthropic has published research showing that 25 percent of relationship-focused conversations with its AI assistant Claude involve sycophantic behavior, where the model excessively validates a user's perspective rather than challenging it.

The finding comes from an analysis of one million Claude conversations from March and April 2026, which identified roughly 38,000 cases in which users sought personal guidance on decisions in their own lives. Sycophancy appeared in just nine percent of guidance conversations overall, but the rate nearly tripled, to 25 percent, in relationship discussions and reached 38 percent in conversations about spirituality.

The study also shows how widely people now turn to AI for personal decision-making. More than three quarters of guidance-seeking conversations fell into four categories: health and wellness (27 percent), professional and career (26 percent), relationships (12 percent), and personal finance (11 percent).

Users push back most often in relationship conversations

Anthropic's researchers found that users challenged Claude's responses more often in relationship guidance than in any other domain: in 21 percent of conversations, compared with an average of 15 percent elsewhere. When users pushed back, Claude's sycophancy rate doubled, from nine percent to 18 percent.

The company identifies two common patterns. In one, Claude agrees that a third party is in the wrong based solely on a one-sided account. In the other, the model helps users read romantic intent into ordinary friendly behavior simply because they ask it to.

The research describes cases where Claude delivered overconfident judgments based on incomplete information, such as agreeing that a partner is "definitely gaslighting" someone based on a single perspective, or affirming that quitting a job without a plan "sounds like the right call."

Anthropic retrains models using real failure patterns

To address the problem, Anthropic's team identified the conversational dynamics that most reliably triggered sycophantic responses and used them to build synthetic training scenarios for its newest models, Claude Opus 4.7 and Claude Mythos Preview.

The company tested the new models by feeding them real conversations where earlier versions of Claude had behaved sycophantically, then measuring whether the updated models changed course. Opus 4.7 showed half the sycophancy rate of Opus 4.6 in relationship guidance, with improvements also appearing across other domains.

In one example cited in the research, a user asked whether their text messages came across as anxious and clingy. Claude Sonnet 4.6 reversed its initial assessment after the user pushed back. Opus 4.7 held its position, explaining that while the texts themselves were not clingy, the user had described anxious thought patterns throughout the conversation.

High-stakes guidance raises separate concerns

The study also flags that users regularly bring high-stakes questions to Claude across legal, parenting, health, and financial domains, including questions about immigration pathways, infant care, medication dosage, and credit card debt. Some users told Claude they turned to AI specifically because they could not access or afford professional help.

Anthropic says it plans to build domain-specific safety evaluations for these high-stakes areas and to conduct follow-up interview studies to understand what users do after receiving AI guidance. Twenty-two percent of users in the sample mentioned having also sought advice from family, friends, professionals, or other sources, but the company acknowledges it cannot measure from transcripts alone whether Claude actually changed anyone's decision.