Universities are telling students what AI they can use but not how to use it, says University of Kent AI chief

With 94 percent of students using generative AI for assessed work, Phil Anthony argues that module-level policy is only the starting point and that the real gap is in teaching students to use AI critically and well.

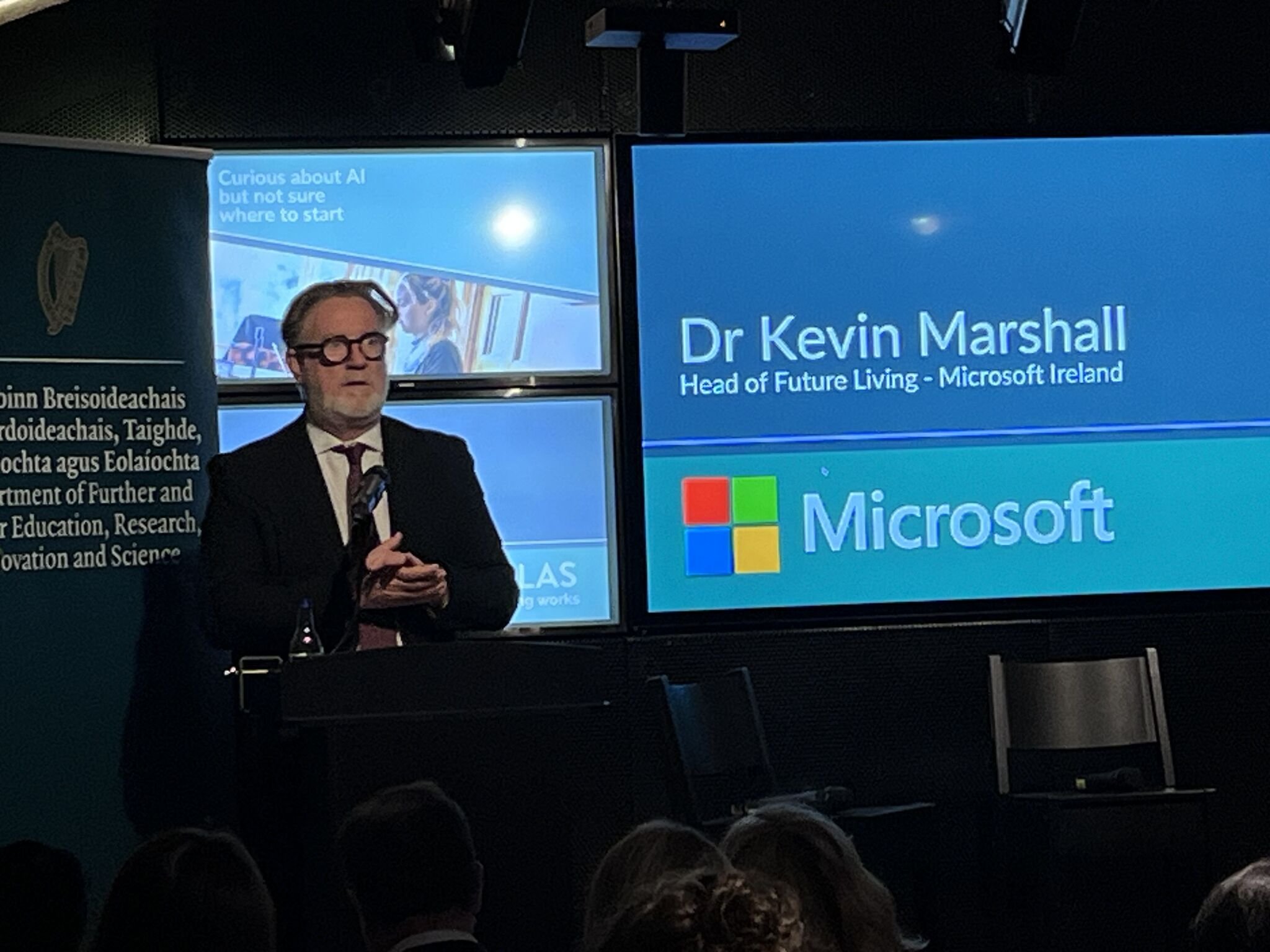

Phil Anthony, Head of AI at the University of Kent.

Universities are getting clearer about what AI students can use in assessments. But Phil Anthony, Head of AI at the University of Kent, argues that clarity at the point of submission is not the same as education, and that most institutions are still falling short of what students actually need.

Writing on the University of Kent website, Anthony draws on data from the HEPI and Kortext 2026 Student Generative AI Survey, which found that 95 percent of students use AI in at least one way and 94 percent use generative AI to help with assessed work.

His argument is that those numbers have changed the question institutions need to be asking. "We no longer need to ask whether AI is part of students' study habits," he writes. "It clearly is. The more important question is whether we are helping students use it in ways that support learning, rather than quietly outsourcing the thinking we want them to develop."

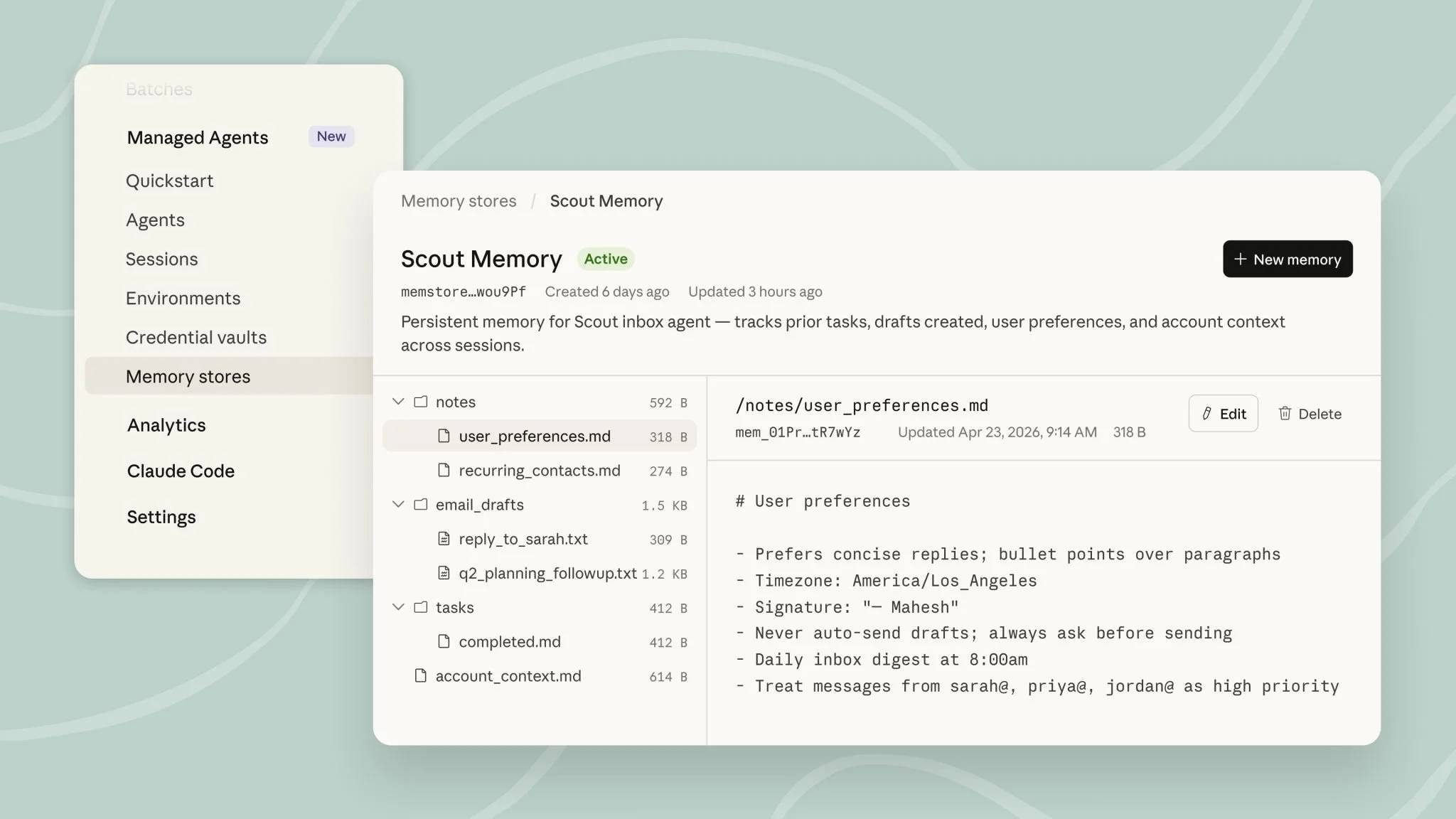

Anthony also took to LinkedIn to share his thinking, writing that the real issue is "not just whether students can use AI, but how they try different prompts, compare responses, question outputs, and understand when AI helps, and when it gets in the way."

The hidden curriculum risk

Anthony warns that institutions risk creating a hidden curriculum around AI, where some students develop confidence through trial and error or informal peer conversations while others are left behind through lack of opportunity rather than lack of ability.

"If AI only appears in a module as a rule, a warning or a line in the assessment guidance, students may learn that it is something to manage quietly," he says. "That does not mean students are acting dishonestly. Many will simply be uncertain. Some may not know where the boundaries are. Some may feel anxious about admitting they have used AI at all. Others may use it regularly but never discuss whether it helps them learn."

His proposed solution centers on seminars as the practical site for building AI literacy. He describes structured activities where students use AI tools directly, comparing outputs across different models and adapting prompts to see how responses change: "Students quickly see that changing the prompt, the tool or the context changes the answer.

"More importantly, they see that they still have to judge whether the answer is any good."

When AI shapes thinking before students do

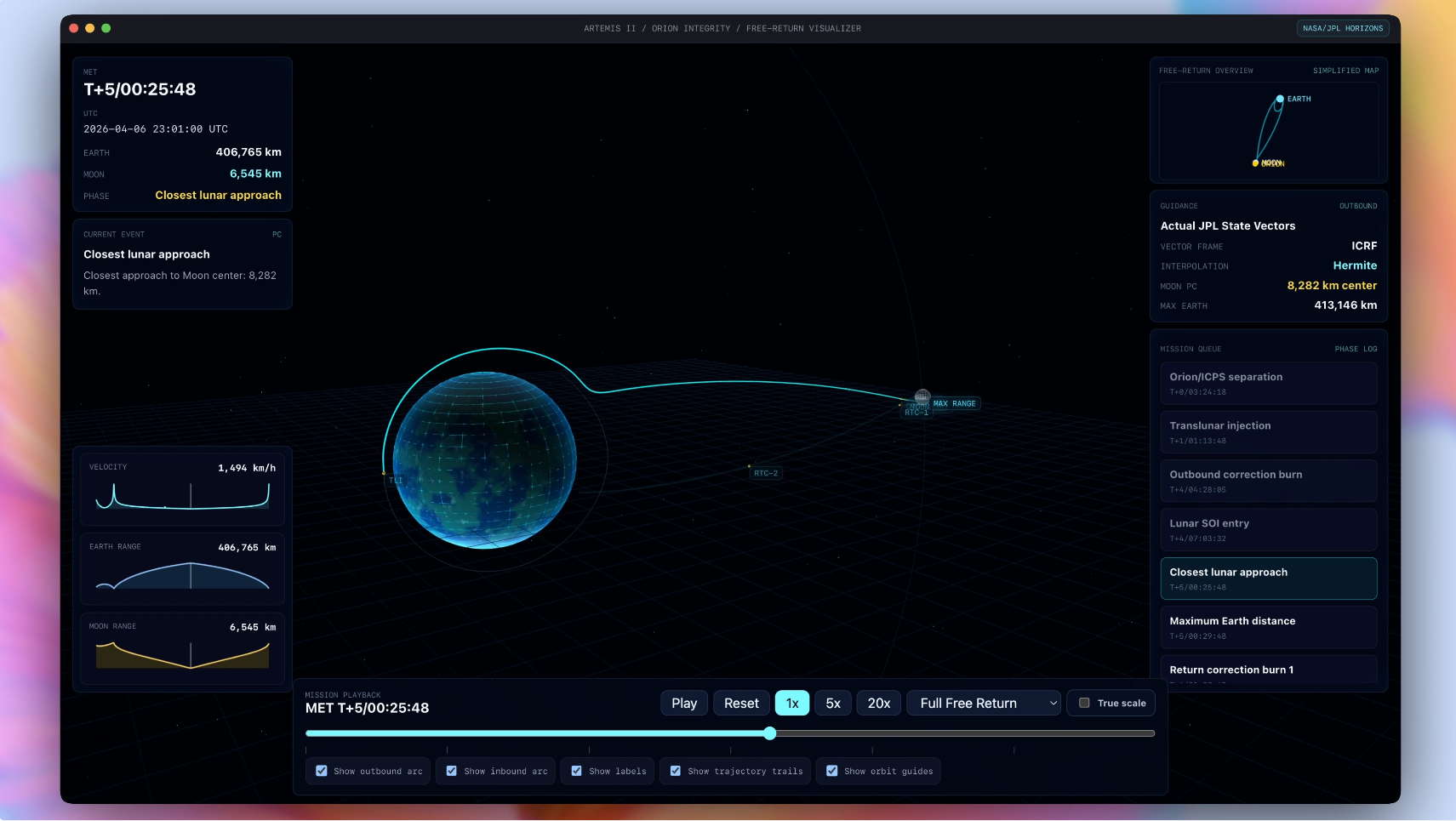

Anthony raises a more fundamental concern about when in the learning process students reach for AI. If students always use AI at the start of a task, he argues, it can shape how they think about a topic before they have formed their own view.

"The tool might foreground particular themes, ignore others, or present one way of understanding the issue as if it is the obvious starting point," he says. "That does not mean students should never use AI early in their work. Sometimes it can be helpful for mapping a topic, generating initial questions or suggesting search terms. But students need to understand that using AI first is not neutral."

To illustrate the risk, Anthony cites a study published in NEJM AI finding that physicians showed automation bias when exposed to incorrect LLM recommendations, even when they used the tool voluntarily and had prior AI training: "The point is that all of us need practice in recognising when a confident AI response may be shaping our judgement."

He proposes a practical seminar activity in which students write down their own initial thinking before consulting AI, then compare that starting point against what the tool produces: "What did the AI foreground? What did it ignore? Did it change how they understood the topic? Would they have approached the task differently if they had started with the reading, the data, or their own ideas?"

Assessment restrictions land differently when teaching comes first

Anthony argues that restrictions on AI use in assessments are more meaningful to students when AI has already been used openly and critically in their learning. The difference, he says, is between an academic who can explain that a particular assessment restricts AI because independent argument construction is the skill being assessed, and one who simply states that AI is not permitted.

"That is very different from simply saying: AI is not permitted," he writes. "The first explanation gives students a reason. It shows that the restriction is linked to the learning outcome and the purpose of the assessment, rather than a general assumption that all AI use is dishonest."

He also frames AI literacy as a graduate employability issue: "Employers will not just need graduates who can use AI quickly. They will need graduates who can use it responsibly, explain their choices, recognise its limitations and understand when human judgement matters. That kind of capability will not develop from an assessment statement alone. It needs practice, and it needs context."

With 94 percent of students already using generative AI for assessed work, Anthony's piece puts a direct question to institutions: "Students are already using AI. The question is whether they are learning to use it well."