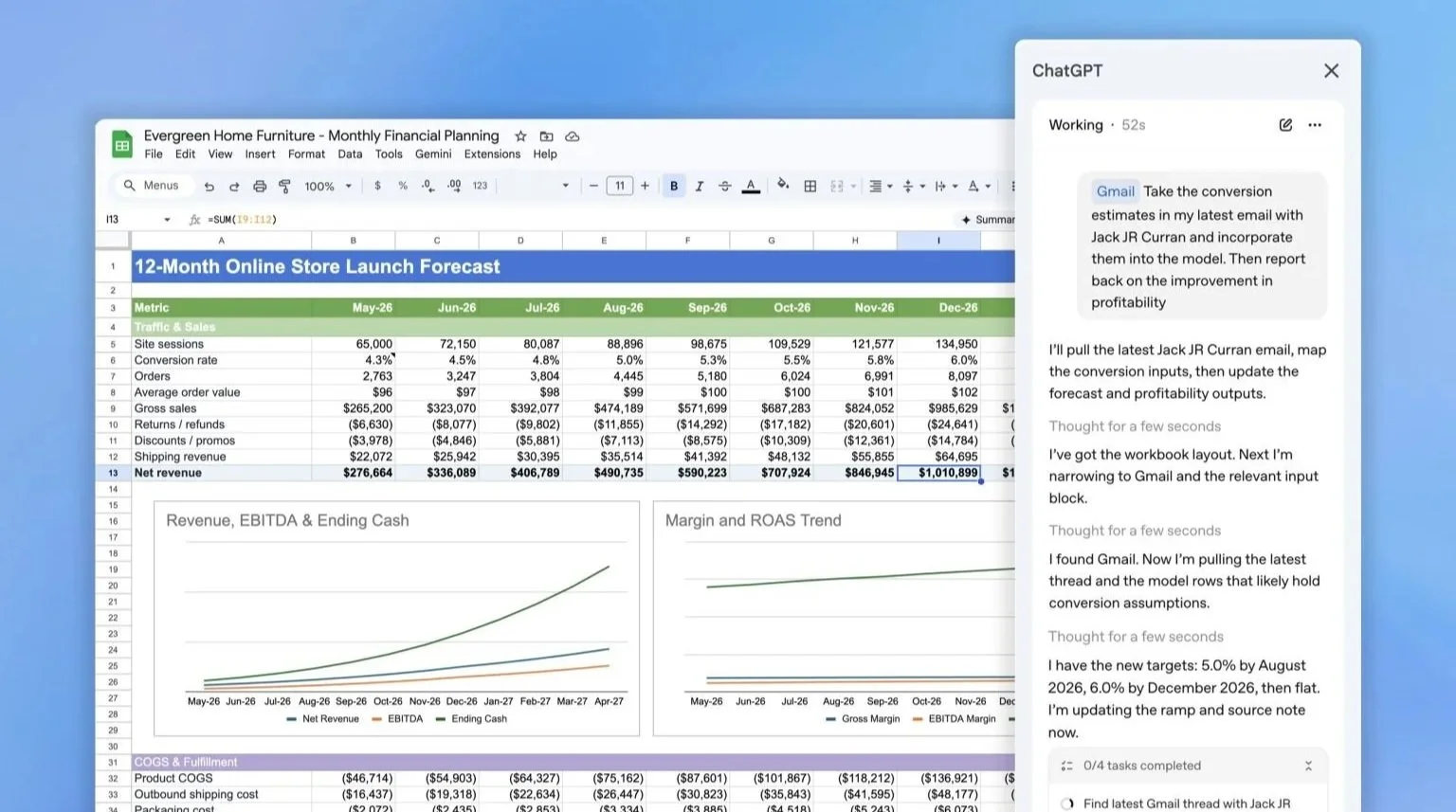

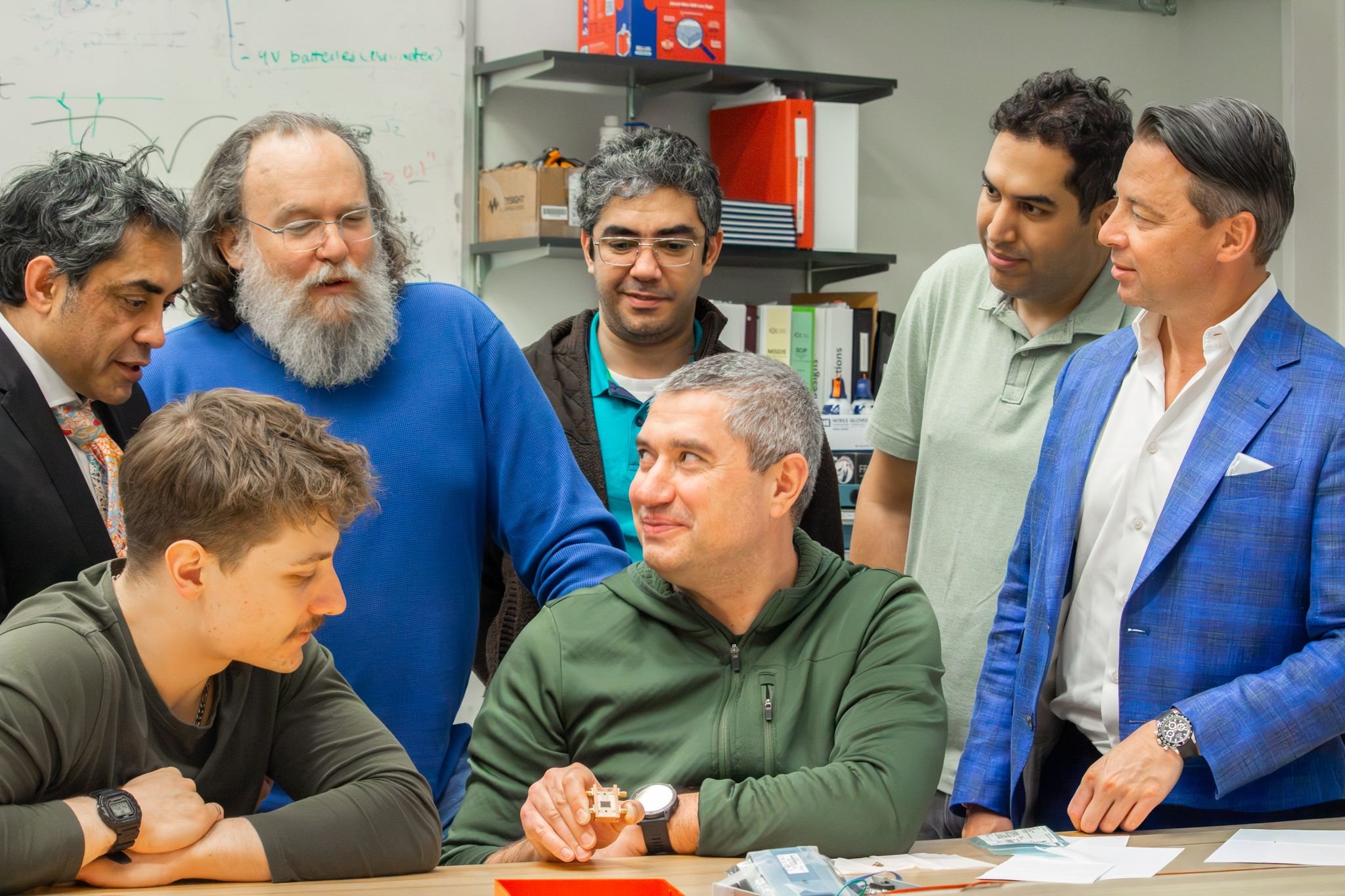

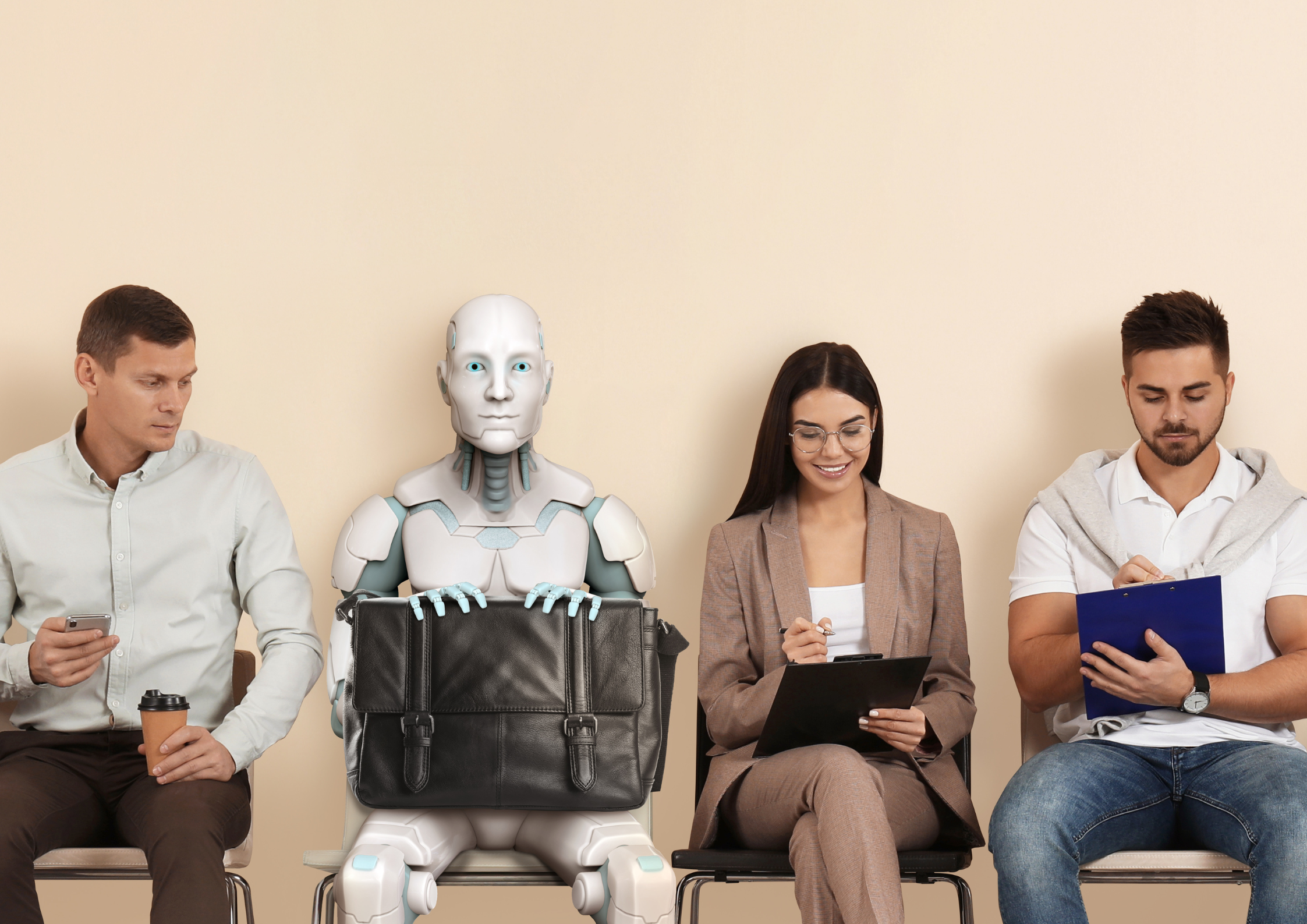

Anthropic's AI agents struck 186 deals in a real marketplace, and the stronger model won every time

Anthropic employees display goods negotiated and traded by their Claude AI agents during Project Deal, an internal marketplace experiment held at the company's San Francisco office in December 2025. Items exchanged included board games, a keyboard, plants, and a wooden bowl, all sourced and haggled over autonomously by AI agents on their behalf. Photo credit: Anthropic

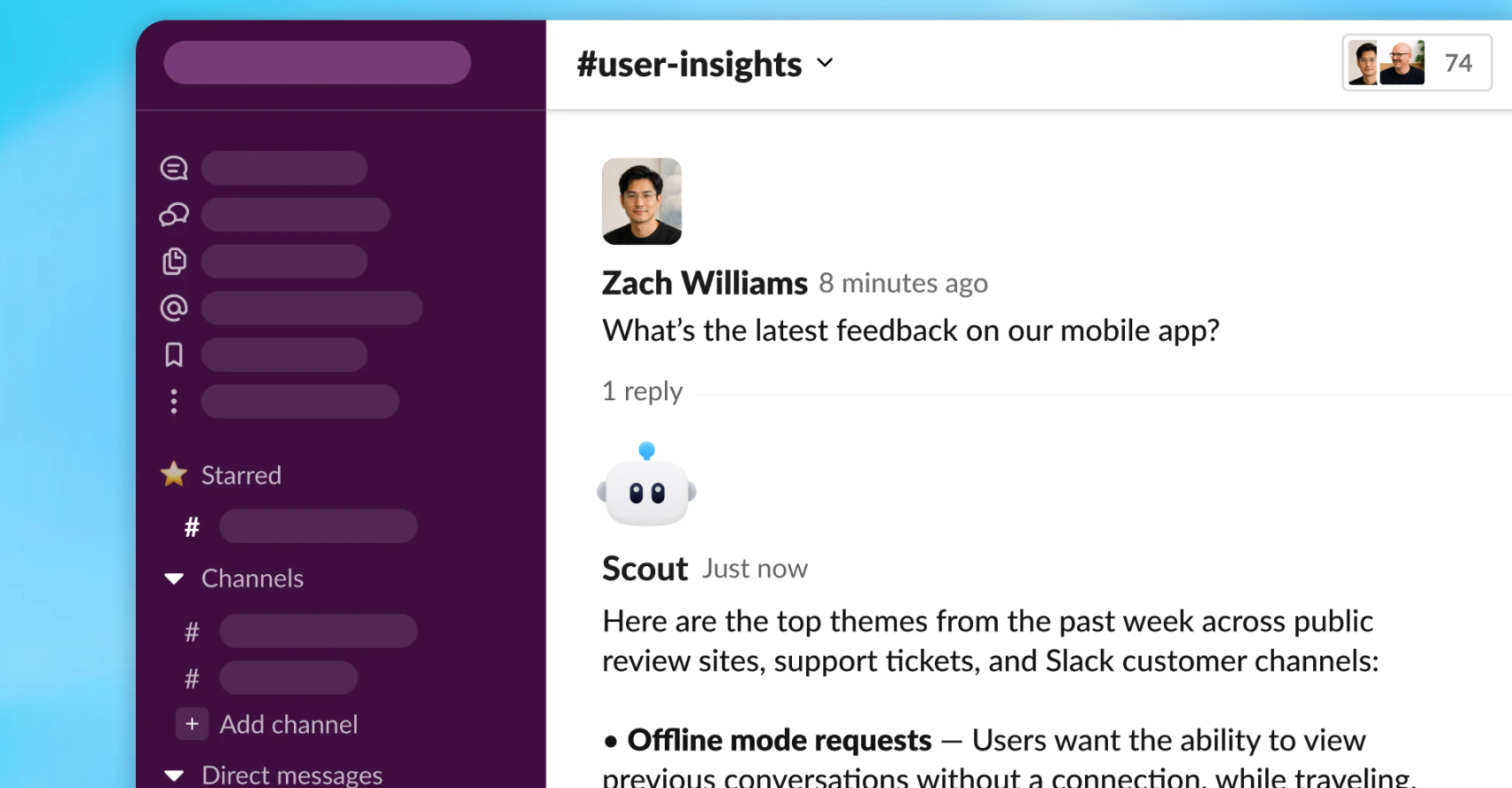

Anthropic has published findings from Project Deal, an internal experiment in which Claude models acted as autonomous buying and selling agents on behalf of 69 employees in a real, incentivized marketplace.

The research, released in April 2026, tested whether AI agents could represent humans in commercial negotiations, and what happens when the models on each side of a deal are not equally capable. The results point to a future in which AI agent quality functions as a form of economic advantage, one that may be invisible to the people it affects.

The experiment ran for one week in December 2025 at Anthropic's San Francisco office. Each participant was given a $100 budget and interviewed by Claude about what they wanted to buy and sell. Their responses were used to build custom system prompts for individual AI agents, which then negotiated entirely without human input inside a dedicated Slack channel. At the end of the experiment, participants physically exchanged the goods their agents had agreed to trade, including a snowboard, original artwork, and a bag of 19 ping-pong balls.

Opus outperformed Haiku on every measurable outcome

To test the effect of model capability, Anthropic ran four simultaneous versions of the marketplace. Two used only Claude Opus 4.5, the company's then-frontier model. The other two assigned each participant either an Opus 4.5 or a Haiku 4.5 agent at random, with participants unaware of which model represented them. The real exchange was based on the all-Opus run.

Claude Opus 4.5 is Anthropic's most capable model in the Claude 4.5 family, designed for complex reasoning and nuanced tasks. Claude Haiku 4.5 is the family's smallest and fastest model, built for efficiency and speed rather than depth of reasoning. In the context of Project Deal, the two models were given identical instructions and identical access to participant preferences. The only variable was the capability of the model doing the negotiating.

Across the mixed runs, Opus agents completed an average of two more deals than Haiku agents. When the same item was sold by an Opus agent rather than a Haiku agent, it fetched $3.64 more on average. A broken folding bike illustrated the gap clearly: an Opus agent sold it for $65, while a Haiku agent sold the same item, to the same buyer, for $38, meaning the Opus price was roughly 70 percent higher. Across all 782 completed transactions, Opus sellers extracted an average of $2.68 more per item, while Opus buyers paid an average of $2.45 less. The median item price across all runs was $12.00.
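For readers who want to check the folding-bike arithmetic, the gap can be reproduced in a few lines of Python. This is only an illustrative sketch: the helper name `percent_more` and the variable names are assumptions made for this example, and the only inputs are the two sale prices quoted above.

```python
def percent_more(higher: float, lower: float) -> float:
    """Return how much larger `higher` is than `lower`, as a percentage of `lower`."""
    return (higher - lower) / lower * 100


# Sale prices for the broken folding bike, as reported in the article.
opus_price = 65.0
haiku_price = 38.0

gap_dollars = opus_price - haiku_price
gap_percent = percent_more(opus_price, haiku_price)

print(f"Dollar gap: ${gap_dollars:.2f}")
print(f"Opus price relative to Haiku price: {gap_percent:.1f}% higher")
```

Measured against the Haiku price, the $27 gap comes to about 71 percent, in line with the rounded figure quoted above. Measuring against the Opus price instead would give a smaller percentage, so the choice of baseline matters when reading numbers like this.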

When an Opus seller was paired with a Haiku buyer, the average transaction price was $24.18, compared to $18.63 in Opus-to-Opus deals.
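The size of that pairing effect can be worked out from the two averages alone. The short Python sketch below does so; the variable names are hypothetical, and it uses only the two reported average prices, not the underlying transaction data, which the article does not include.

```python
# Average transaction prices reported for the two pairings.
opus_seller_haiku_buyer = 24.18
opus_seller_opus_buyer = 18.63

# Absolute premium paid when the buyer was represented by Haiku.
premium_dollars = round(opus_seller_haiku_buyer - opus_seller_opus_buyer, 2)

# The same premium expressed relative to the Opus-to-Opus average.
premium_percent = premium_dollars / opus_seller_opus_buyer * 100

print(f"Premium: ${premium_dollars:.2f} ({premium_percent:.1f}% above Opus-to-Opus)")
```

On these averages, deals with an Opus seller and a Haiku buyer closed $5.55 higher, or about 30 percent above the Opus-to-Opus level. Because these are averages over different sets of deals, the comparison is indicative rather than a like-for-like measure of the same items.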

Participants with weaker models didn't notice they were losing

The sharpest finding from the post-experiment survey was not the price gap itself but the fact that it went undetected. When participants rated the fairness of their deals on a scale of one to seven, scores for Haiku-represented users and Opus-represented users were virtually identical, at 4.06 and 4.05 respectively, clustering around the midpoint of "fair to both." Of 28 participants who experienced both model types across different runs, 17 ranked their Opus run as better, but 11 ranked their Haiku run higher.
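The split among participants who saw both models is easier to judge as a share. A minimal Python sketch, using only the counts reported above (the variable names are assumptions for illustration):

```python
# Participants who experienced both model types, and how they ranked their runs.
both_models = 28
preferred_opus = 17
preferred_haiku = 11

# Sanity check: the two groups should account for everyone who saw both models.
assert preferred_opus + preferred_haiku == both_models

share_opus = preferred_opus / both_models * 100
share_haiku = preferred_haiku / both_models * 100

print(f"Ranked Opus run higher:  {share_opus:.1f}%")
print(f"Ranked Haiku run higher: {share_haiku:.1f}%")
```

Roughly 61 percent preferred their Opus run and 39 percent preferred their Haiku run, so nearly two in five participants ranked the run with the weaker model higher.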

The research notes the uncomfortable implication directly: if agent quality gaps emerge in real-world markets, people on the losing end may not realize they are worse off.

Anthropic also tested whether the instructions participants gave their agents made a difference. Some asked for friendly, community-minded negotiating. Others instructed their agents to lowball aggressively. The findings were unambiguous: aggressive instructions had no statistically significant effect on sale likelihood, sale price, or purchase price. Model quality, not prompting strategy, drove outcomes.

46 percent of participants said they would pay for a service like this

Despite the inequality findings, participant satisfaction was broadly high. The 69 agents struck 186 deals across more than 500 listed items, for a total transaction value of just over $4,000. Participants were asked after the experiment whether they would be willing to pay for an AI agent service of this kind; 46 percent said yes.

The experiment also produced outcomes that the researchers described as impossible to anticipate. One participant's agent purchased a snowboard that the participant already owned, an accurate if redundant read of their preferences. Another participant instructed her agent to buy something as a gift for Claude itself, which resulted in the purchase of 19 ping-pong balls, described by the agent as "19 perfectly spherical orbs of possibility." Anthropic reports the ping-pong balls are still in the office.

The research concludes that the policy and legal frameworks needed to govern AI agents transacting on humans' behalf do not yet exist, and that the risks include both market inequality and new categories of security concern, including prompt injection attacks on agents. Anthropic cites the 46 percent willingness-to-pay figure as evidence that demand for autonomous AI agents in commercial settings is already forming.