Meta signs major AWS deal to power agentic AI on Graviton chips at scale

The agreement starts with tens of millions of Graviton cores and signals a broader shift in how AI infrastructure is being built to support autonomous, multi-step AI workloads.

Meta is scaling its use of AWS Graviton processors as AI infrastructure demand shifts beyond GPUs toward CPU-intensive workloads supporting agentic AI, reasoning, search, and multi-step workflows.

Meta has signed an agreement with Amazon Web Services to deploy AWS Graviton processors at scale, in a deal that marks a significant expansion of a long-standing partnership between the two companies.

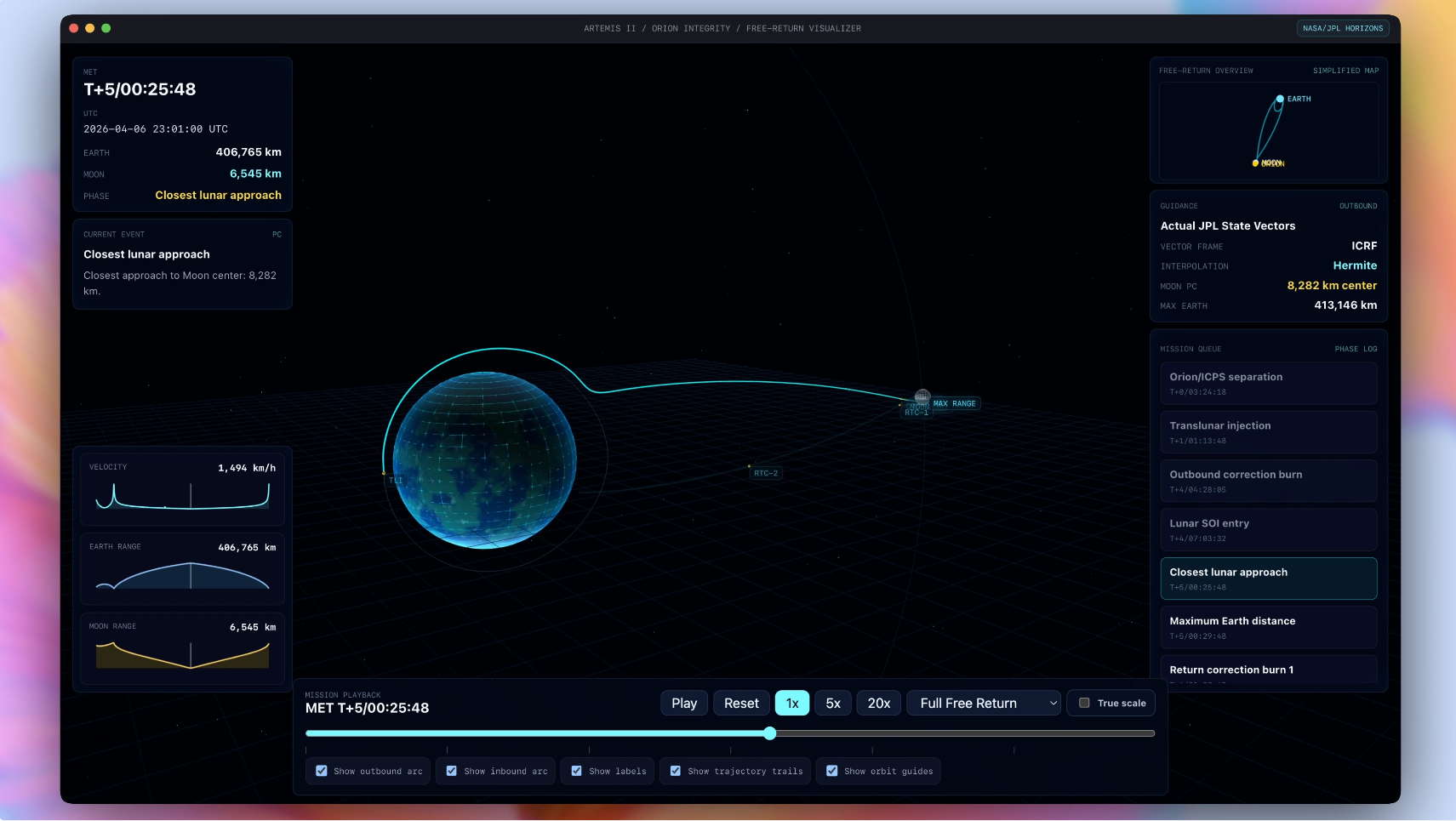

The deployment begins with tens of millions of Graviton cores, with flexibility to expand as Meta's AI capabilities grow. The agreement reflects a shift in AI infrastructure priorities. The rise of agentic AI is driving growing demand for CPU-intensive compute alongside the GPUs traditionally used for model training.

Meta is now one of the largest Graviton customers in the world. The chips will power a range of workloads supporting Meta's AI efforts. These include real-time reasoning, code generation, search, and the coordination of complex multi-step agent workflows across billions of interactions.

What Graviton5 brings to agentic AI

The Graviton5 chip at the center of the deal has 192 cores and a cache five times larger than that of the previous generation, which reduces communication delays between cores by up to 33 percent. The chip is built on a three-nanometer process and delivers up to 25 percent better performance than its predecessor. It also keeps the energy-efficiency advantages that AWS attributes to designing its chips from the ground up rather than using off-the-shelf processors.

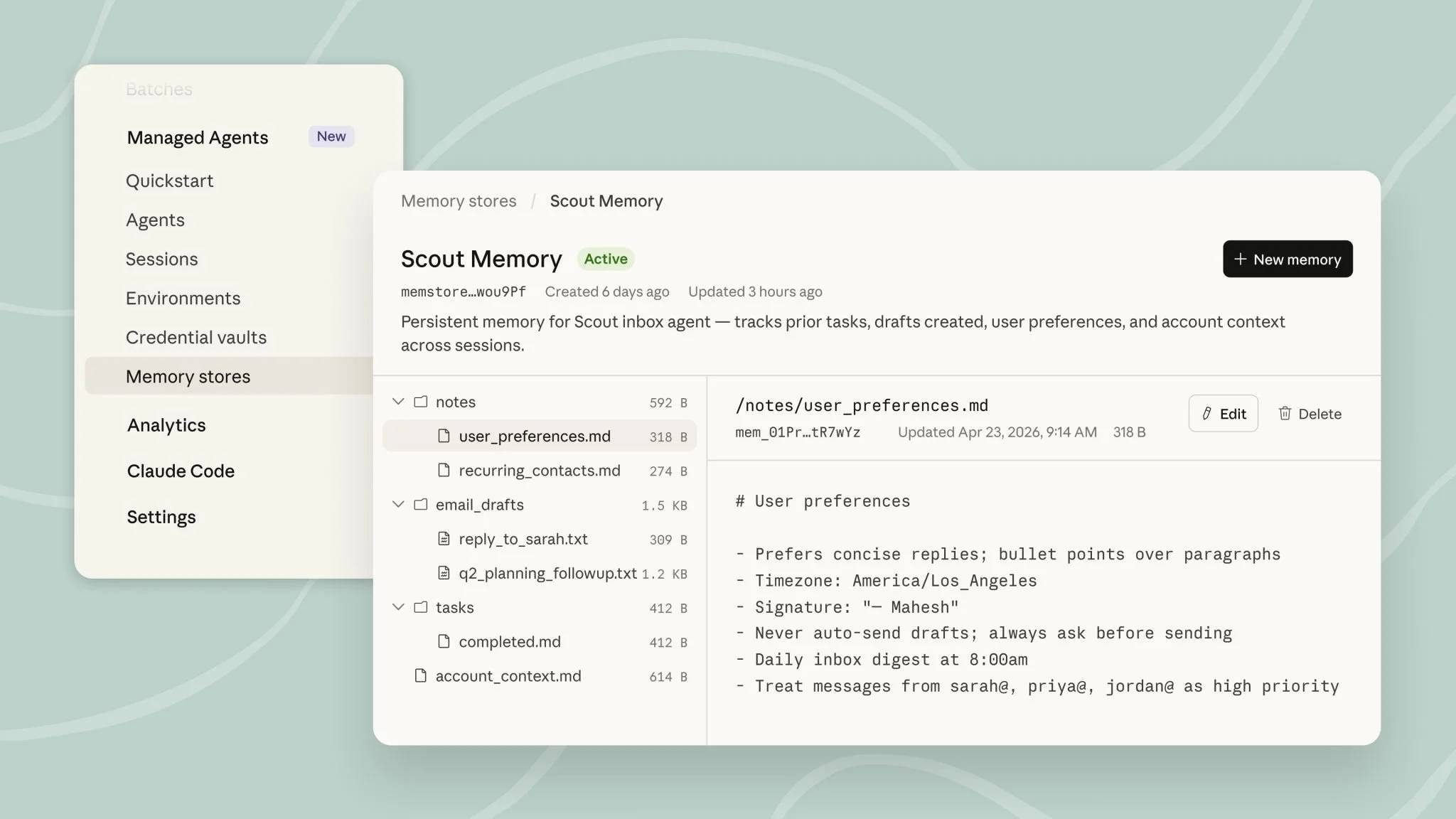

Graviton-based instances are built on the AWS Nitro System, which uses dedicated hardware and software to deliver performance, availability, and security. Graviton5 instances also support the Elastic Fabric Adapter (EFA), which enables low-latency, high-bandwidth communication between instances. AWS describes this capability as essential for large-scale agentic workloads that require many processors to work in coordination.

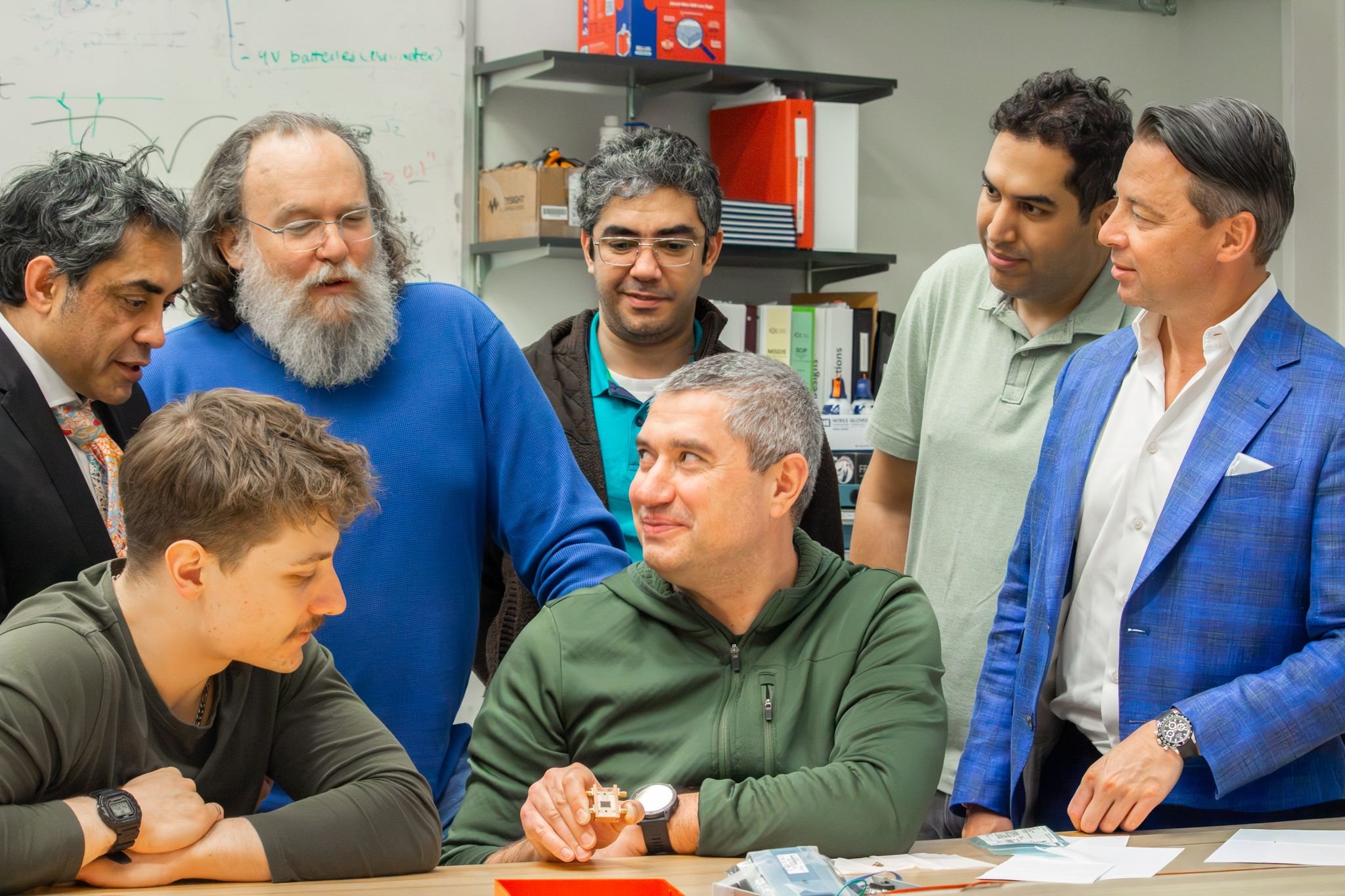

Nafea Bshara, vice president and distinguished engineer at Amazon, says: "This isn't just about chips; it's about giving customers the infrastructure foundation, as well as data and inference services, to build AI that understands, anticipates, and scales efficiently to billions of people worldwide. Meta's expanded partnership, deploying tens of millions of Graviton cores, shows what happens when you combine purpose-built silicon with the full AWS AI stack to power the next generation of agentic AI."

Meta cites compute diversification as a strategic priority

Santosh Janardhan, head of infrastructure at Meta, says: "As we scale the infrastructure behind Meta's AI ambitions, diversifying our compute sources is a strategic imperative. AWS has been a trusted cloud partner for years, and expanding to Graviton allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale."

The deal builds on Meta's existing use of Amazon Bedrock at scale and its broader reliance on AWS cloud infrastructure across its global operations. Meta has been a longtime AWS customer, and the Graviton agreement represents the most significant expansion of that relationship to date.

Because Meta already relies heavily on AWS, the Graviton deal deepens an existing dependency rather than opening a new vendor relationship. Still, Janardhan's framing of compute diversification as "a strategic imperative" suggests Meta is actively managing risk across its infrastructure stack, not simply expanding with a single provider.