Anthropic brings persistent memory to Claude Managed Agents in public beta

Developers can now build agents that learn and improve across sessions, with full programmatic control over what is stored, shared, and rolled back.

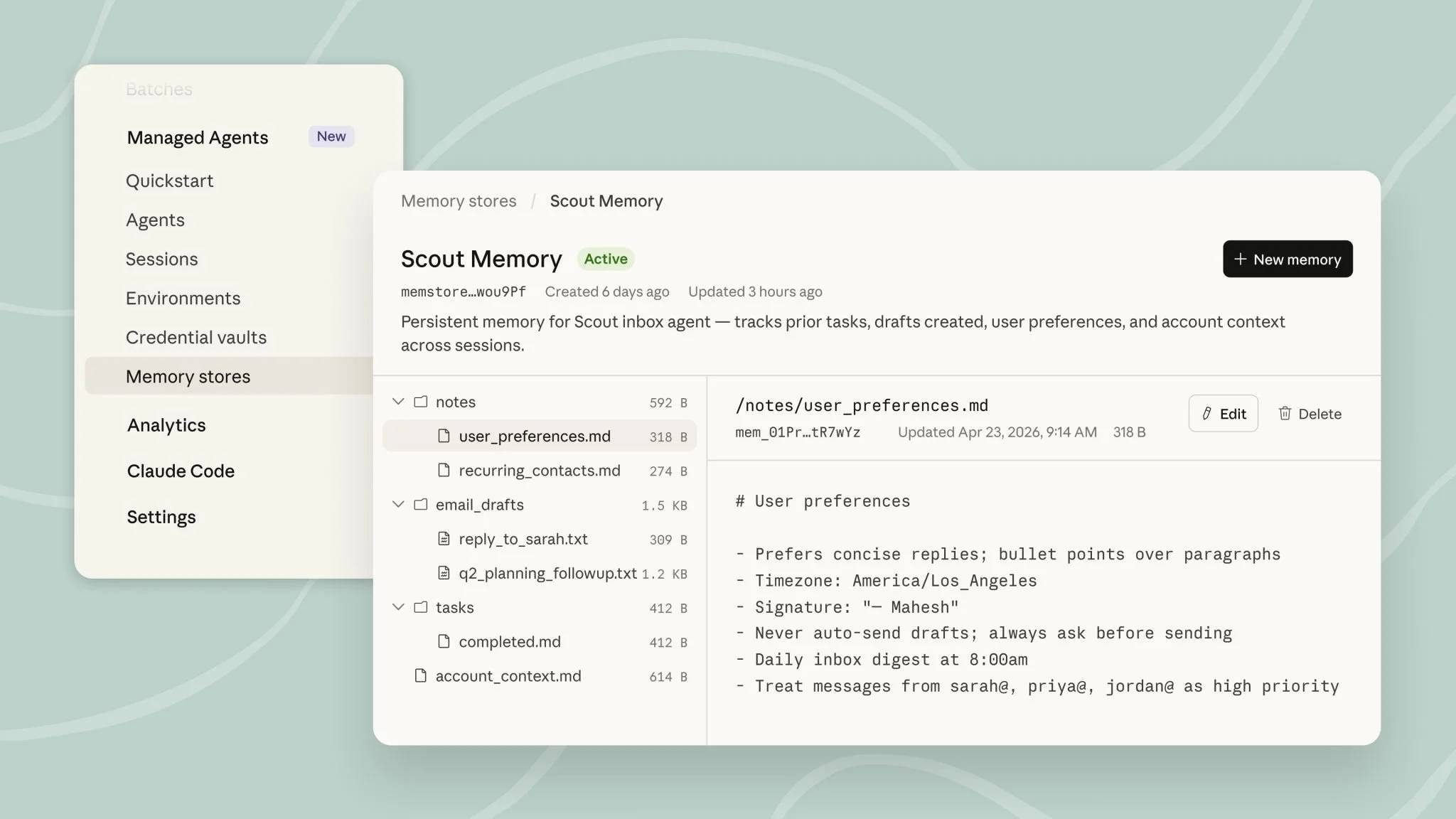

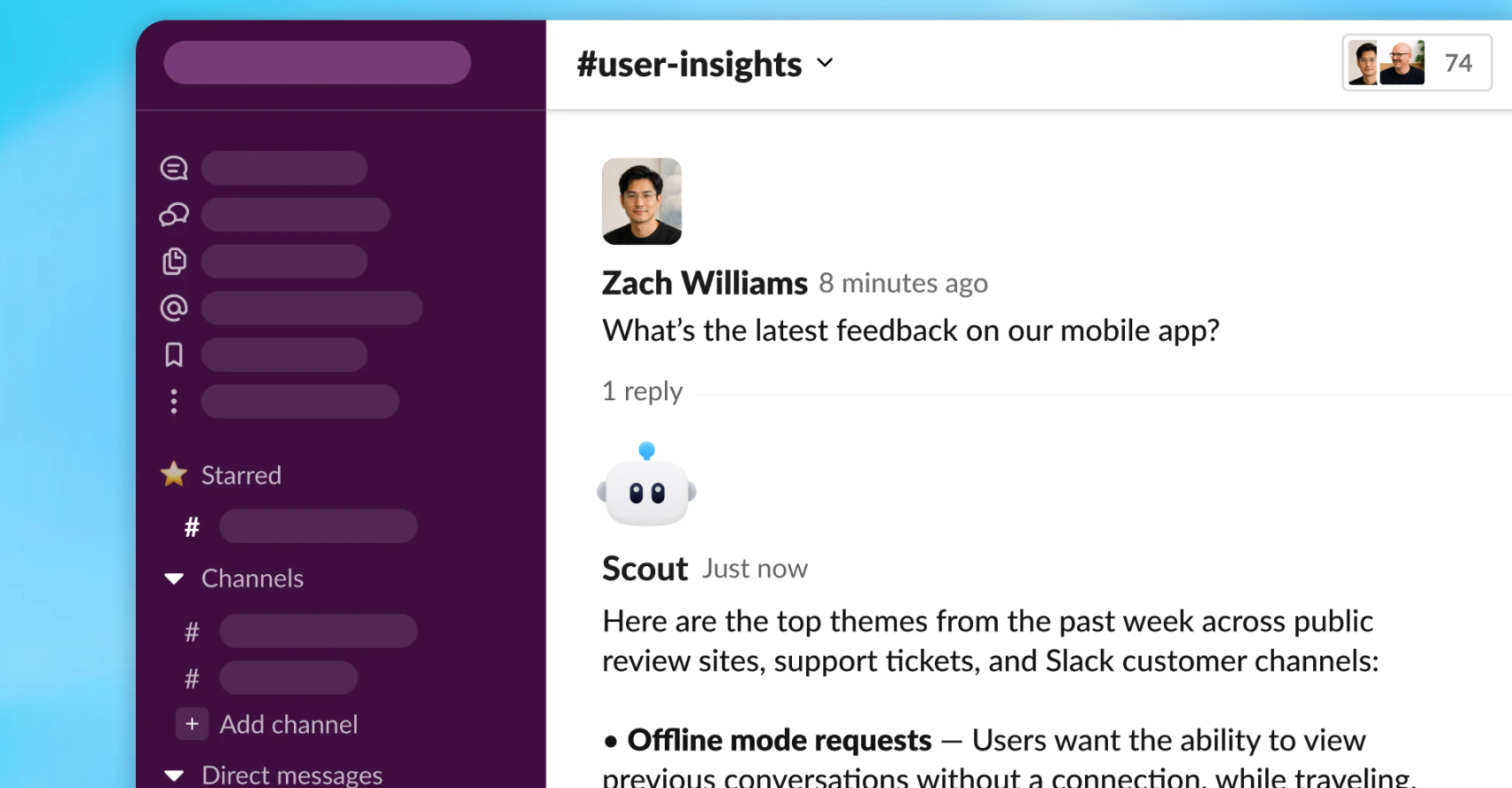

The Claude Console showing an active memory store for a Scout inbox agent, with user preferences, email drafts, and tasks tracked across sessions. Memory files can be viewed, edited, and deleted directly in the console or via API.

Anthropic has made memory for Claude Managed Agents available in public beta on the Claude Platform, giving developers the ability to build AI agents that retain and apply learning across sessions.

The feature, announced on April 23, 2026, stores memories as files on a filesystem, allowing developers to export, edit, and manage them via API or directly in the Claude Console. Early adopters include Netflix, Rakuten, Wisedocs, and Ando, with reported results including 97 percent fewer first-pass errors at Rakuten and a 30 percent speedup in document verification at Wisedocs.

Posting on LinkedIn, Angela Jiang, Head of Product, Platform at Anthropic, said managed agents "can now self learn across sessions with memory," adding that developers can "view, edit, add and delete those memories directly in the console or api."

How the memory layer works

Memories mount directly onto a filesystem, allowing Claude to use the same bash and code execution tools it already relies on for agentic tasks. Stores can be scoped at the organization or user level, with different read and write permissions applied to each. Multiple agents can work concurrently against the same memory store without overwriting each other, and all changes are tracked in a detailed audit log that records which agent and session each memory came from.
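To make the file-based model concrete, here is a minimal local sketch in Python of a memory store that keeps memories as plain files and records the originating agent and session in an audit log. It is a toy illustration under stated assumptions, not Anthropic's API: the `MemoryStore` class, its methods, the `audit.jsonl` file name, and the `writable` flag are all hypothetical, and the sketch does not attempt to handle concurrent writers.

```python
import json
import tempfile
import time
from pathlib import Path


class MemoryStore:
    """Toy file-backed memory store (hypothetical; not the Claude API)."""

    def __init__(self, root: Path, writable: bool = True):
        self.root = Path(root)
        self.writable = writable  # stands in for per-scope write permission
        self.root.mkdir(parents=True, exist_ok=True)
        self.audit_path = self.root / "audit.jsonl"

    def write(self, name: str, text: str, agent: str, session: str) -> None:
        """Save a memory file and append an audit entry naming its source."""
        if not self.writable:
            raise PermissionError("store is mounted read-only")
        (self.root / name).write_text(text, encoding="utf-8")
        entry = {"file": name, "agent": agent, "session": session, "ts": time.time()}
        with self.audit_path.open("a", encoding="utf-8") as log:
            log.write(json.dumps(entry) + "\n")

    def read(self, name: str) -> str:
        return (self.root / name).read_text(encoding="utf-8")

    def audit(self) -> list[dict]:
        """Return audit entries in the order they were written."""
        if not self.audit_path.exists():
            return []
        lines = self.audit_path.read_text(encoding="utf-8").splitlines()
        return [json.loads(line) for line in lines]


# Example: a writable user-level store next to a read-only organization-level store.
base = Path(tempfile.mkdtemp())
user_store = MemoryStore(base / "user", writable=True)
org_store = MemoryStore(base / "org", writable=False)
user_store.write("preferences.md", "Prefers short replies.\n", agent="scout", session="s1")
```

Because each memory is an ordinary file, the same content can be read with standard file tools, which is the property the article attributes to the mounted memory layer.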

Developers can roll back to earlier versions or redact content from history, and memory updates surface in the Claude Console as session events.
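The difference between rolling back and redacting can be sketched with a small version history in Python. This is an illustrative model only, assuming an append-only list of versions; the `VersionedMemory` class, its method names, and the `[redacted]` placeholder are hypothetical and do not describe how Anthropic implements either operation.

```python
from dataclasses import dataclass, field

REDACTED = "[redacted]"  # hypothetical placeholder left in place of removed content


@dataclass
class VersionedMemory:
    """Toy version history for one memory (hypothetical; not the Claude API)."""

    versions: list[str] = field(default_factory=list)

    def save(self, text: str) -> int:
        """Append a new version and return its index."""
        self.versions.append(text)
        return len(self.versions) - 1

    def current(self) -> str:
        return self.versions[-1]

    def rollback(self, version: int) -> int:
        """Restore an earlier version by re-saving it as the newest one."""
        return self.save(self.versions[version])

    def redact(self, version: int) -> None:
        """Erase one version's content while keeping its slot in history."""
        self.versions[version] = REDACTED


memory = VersionedMemory()
v0 = memory.save("Customer prefers email.")
v1 = memory.save("Customer prefers email. Card number: 1234")
memory.rollback(v0)  # the current content matches v0 again
memory.redact(v1)    # the sensitive text no longer appears in history
```

In this model a rollback adds a version rather than deleting later ones, so the history stays complete, while a redaction is the only operation that removes content from the record.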

Anthropic describes the memory layer as optimized against internal benchmarks for long-running agents that improve across sessions and share learning with each other. The company says its latest models save more comprehensive and organized memories and are more selective about what to retain for a given task.

What early adopters are reporting

Netflix is using memory to carry context across sessions, including insights that took multiple turns to uncover and mid-conversation corrections from human reviewers, replacing the need to manually update prompts. Rakuten's long-running task agents use memory to avoid repeating past mistakes; the company reports 97 percent fewer first-pass errors, with memory kept workspace-scoped and observable.

Wisedocs built its document verification pipeline on Managed Agents, using cross-session memory to identify recurring document issues. Gracy Andrew, a representative of Wisedocs, says: "A good memory API gets rid of many infrastructure headaches, especially when building across agents and sessions. In our document verification pipeline on Claude Managed Agents, we used cross-session memory to let our agents identify and remember common issues — including ones we didn't think about. It's sped verification up 30%."

Yusuke Kaji, General Manager, AI for Business at Rakuten, says: "Memory in Claude Managed Agents lets us put continuous learning into production at scale. Our agents distill lessons from every session, delivering 97% fewer first-pass errors at 27% lower cost and 34% lower latency, so users spend less time nudging agents to fix mistakes the system has already learned to avoid. And because memory is workspace-scoped and observable, continuous learning stays under our control."

Sara Du, Founder of Ando, says: "A lot of our work at Ando is making sense of fast-moving, messy conversations between teams and their agents. Memory lets us stop building memory infra and focus on the product itself."

The public beta follows Anthropic's earlier release of Claude Managed Agents as a production infrastructure layer. Rakuten's reported figures (97 percent fewer first-pass errors, 27 percent lower cost, and 34 percent lower latency) give the memory feature a measurable commercial case rather than a capability claim alone. Together with Netflix and Ando building core product workflows on the same infrastructure, they suggest enterprise adoption was already under way before the announcement.