OpenAI's Sam Altman publishes five principles framing how the company plans to deploy AI

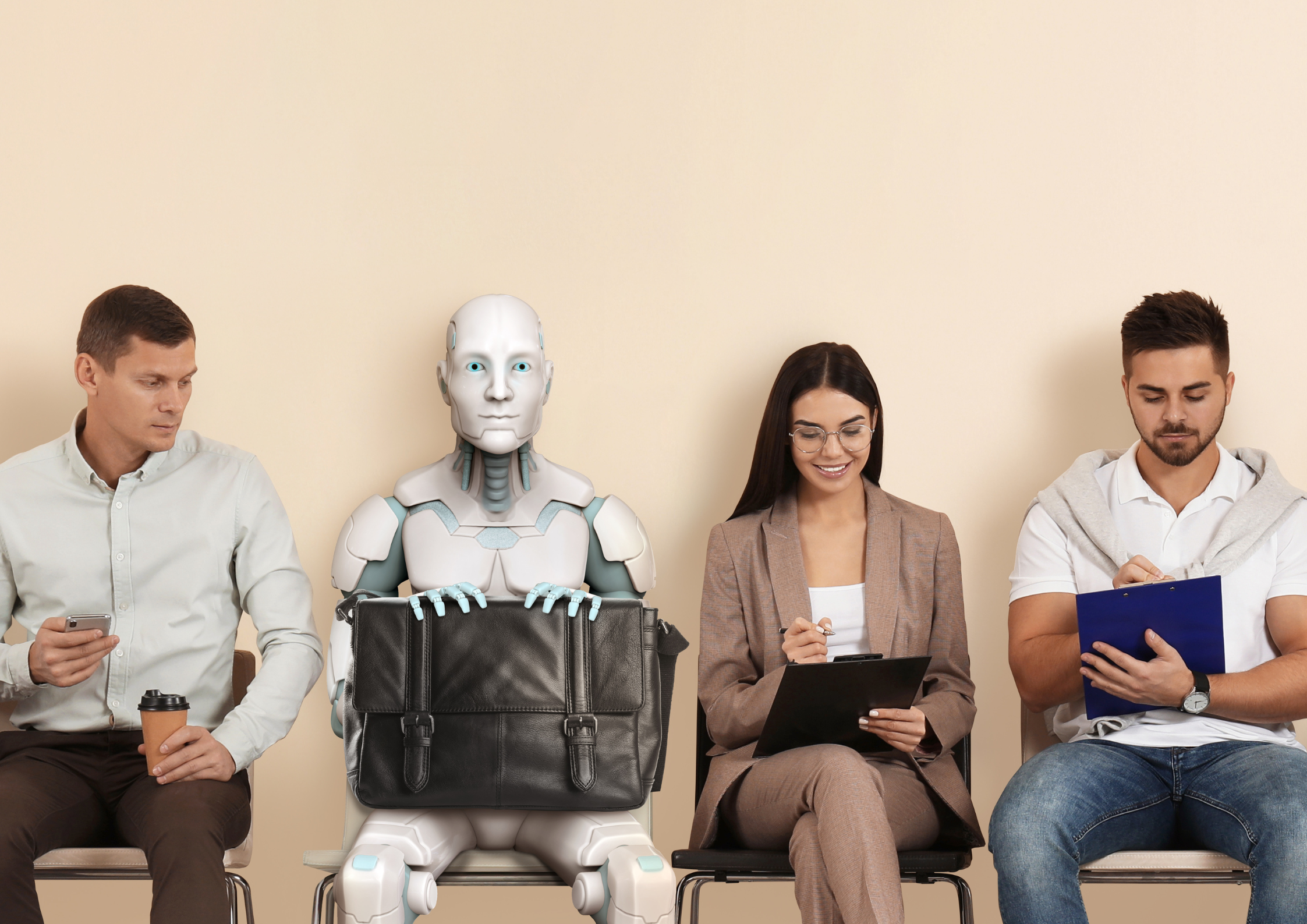

The framework, covering democratization, empowerment, universal prosperity, resilience, and adaptability, lands as OpenAI faces growing questions over access, governance, and the societal impact of its products, including those used in education.


OpenAI CEO and co-founder Sam Altman has published a five-point framework setting out the principles guiding how the company builds and deploys AI, in a piece released on the OpenAI website and amplified on LinkedIn by the company's Global Affairs team.

The document lands at a moment when OpenAI is under sustained scrutiny over the societal effects of its products, including those increasingly embedded in classrooms, university curricula, and workforce training programs.

The five principles are democratization, empowerment, universal prosperity, resilience, and adaptability. Together, Altman frames them as the company's commitments on access to AI, preventing the concentration of power, distributing AI's economic gains, managing emerging risks, and being willing to change course as the technology evolves.

OpenAI Global Affairs took to LinkedIn to share the principles with the company's network. The post described the framework as one that reflects "not just what we believe, but what we're building towards," and signaled that the principles will guide OpenAI's positioning on access, governance, safety, and infrastructure decisions.

Democratization and the resistance to concentrated power

The first principle, democratization, is framed as a direct response to concerns that AI could entrench power in a small number of companies. Altman writes: "Power in the future can either be held by a small handful of companies using and controlling superintelligence, or it can be held in a decentralized way by people. We believe the latter is much better, and our goal is to put truly general AI in the hands of as many people as possible."

He adds: "This means that in addition to giving everyone access to AI, we need to ensure that key decisions about AI are made via democratic processes and with egalitarian principles, and not just made by AI labs."

The second principle, empowerment, sets out OpenAI's commitment to user autonomy, with Altman acknowledging that the company will need to balance broad latitude in how people use its products against responsibilities to minimize harm. He writes that this will require "erring on the side of caution in the face of uncertainty, and relaxing constraints with more evidence."

Universal prosperity and the economics of AI infrastructure

The third principle, universal prosperity, links OpenAI's compute strategy directly to its economic philosophy. Altman writes: "A lot of the things that we do that look weird, buying huge amounts of compute while our revenue is relatively small, vertically integrating to lower costs and make our technology easier to use, pushing to build datacenters all around the world, and much more, are driven by our fundamental belief in a future of universal prosperity."

He flags that achieving this prosperity may require new economic models: "Our governments may need to consider new economic models to ensure that everyone can participate in the value creation in front of us."

The fourth principle, resilience, addresses the risks introduced by increasingly capable models, including the possibility that they could make it easier to create new pathogens or accelerate cybersecurity threats. Altman frames this as a society-wide problem rather than one OpenAI can solve in isolation, writing: "No AI lab can ensure a good future alone."

Adaptability and a willingness to trade priorities

The fifth principle, adaptability, may be the most significant of the five. Altman acknowledges that OpenAI's positions will change as the technology evolves and is explicit about the trade-offs that may follow: "While we are quite confident that universal prosperity will remain really important, we can imagine periods in the future where we have to trade off some empowerment for more resilience."

He also acknowledges the company's growing influence, writing: "We acknowledge that OpenAI is a much larger force in the world than it was a few years ago, and we will be transparent about when, how, and why our operating principles change."

The document closes with an explicit invitation to scrutiny: "It's very fair to critique us on every decision; we deserve an enormous amount of scrutiny given the weight of what we are doing. We will not get everything right, but we will learn quickly and course-correct."

The principles arrive as OpenAI products are increasingly embedded in education at every level, from universities to corporate workforce training programs covering millions of employees. The question the framework now puts to the sector is whether a values document of this kind will shift how regulators, educators, and end users assess the company's day-to-day decisions, particularly on access, data use, and the downstream impact on students and teachers.