Heriot-Watt researcher warns generative AI in machine learning carries serious and underestimated risks

A new paper published in the international journal Patterns argues that using large language models (LLMs) within machine learning workflows can expose organizations to cyber-attacks, bias, and legal compliance failures, particularly when the models are deployed to cut costs.

Professor Michael Lones, School of Mathematical and Computer Sciences, Heriot-Watt University.

A computer scientist at Heriot-Watt University has published research warning that the growing use of generative AI to design, build, and operate machine learning systems carries risks, including cyber-attacks, data breaches, and bias against underrepresented groups, that developers and the public are not adequately accounting for.

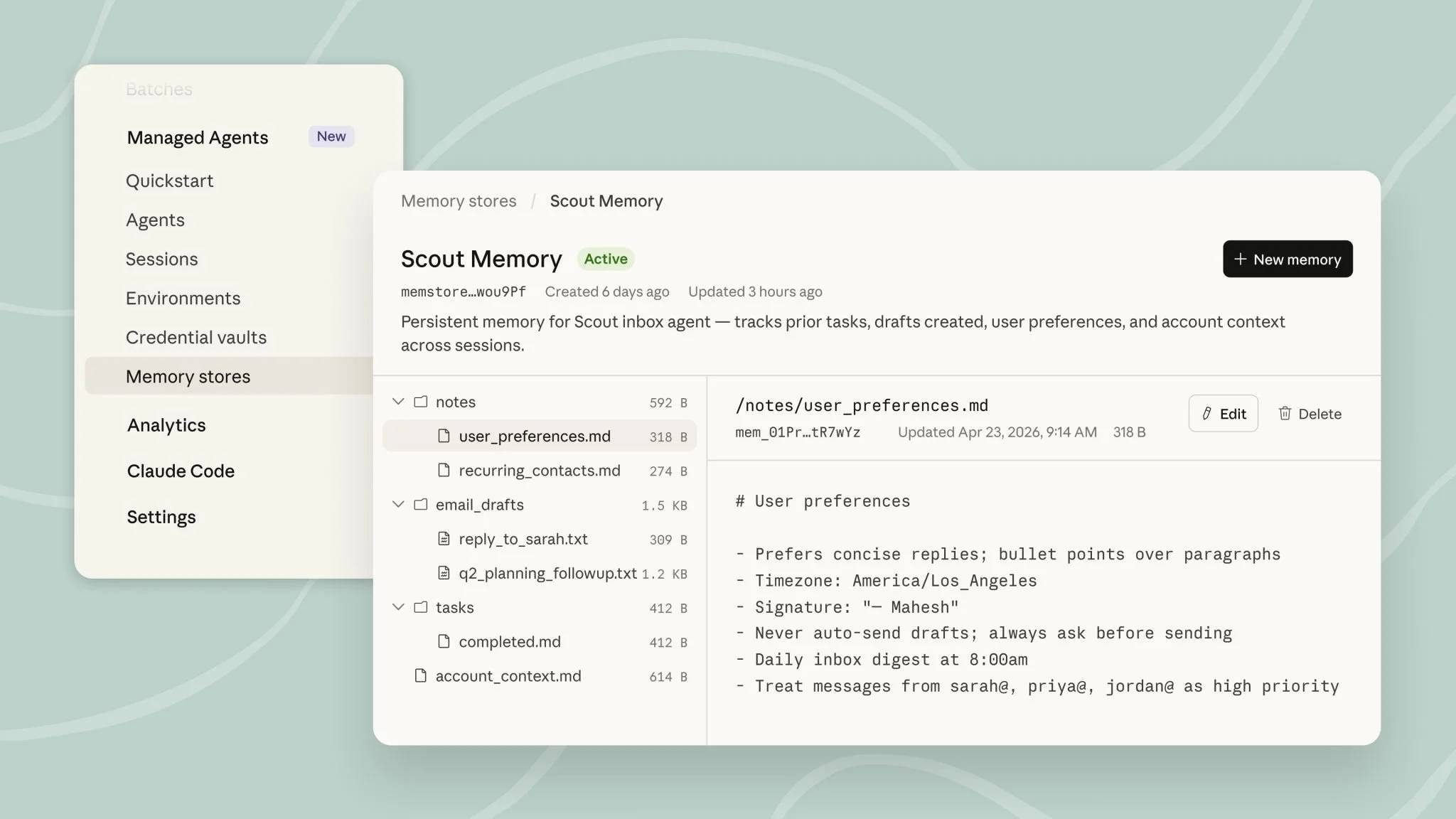

Michael Lones, professor at Heriot-Watt University's School of Mathematical and Computer Sciences, published the findings in Patterns, a data science journal produced by Cell Press. The paper, titled "Pitfalls and risks of generative AI in machine learning," identifies four ways generative AI is currently being applied within machine learning systems and examines the compounding risks each introduces.

Where the risks compound

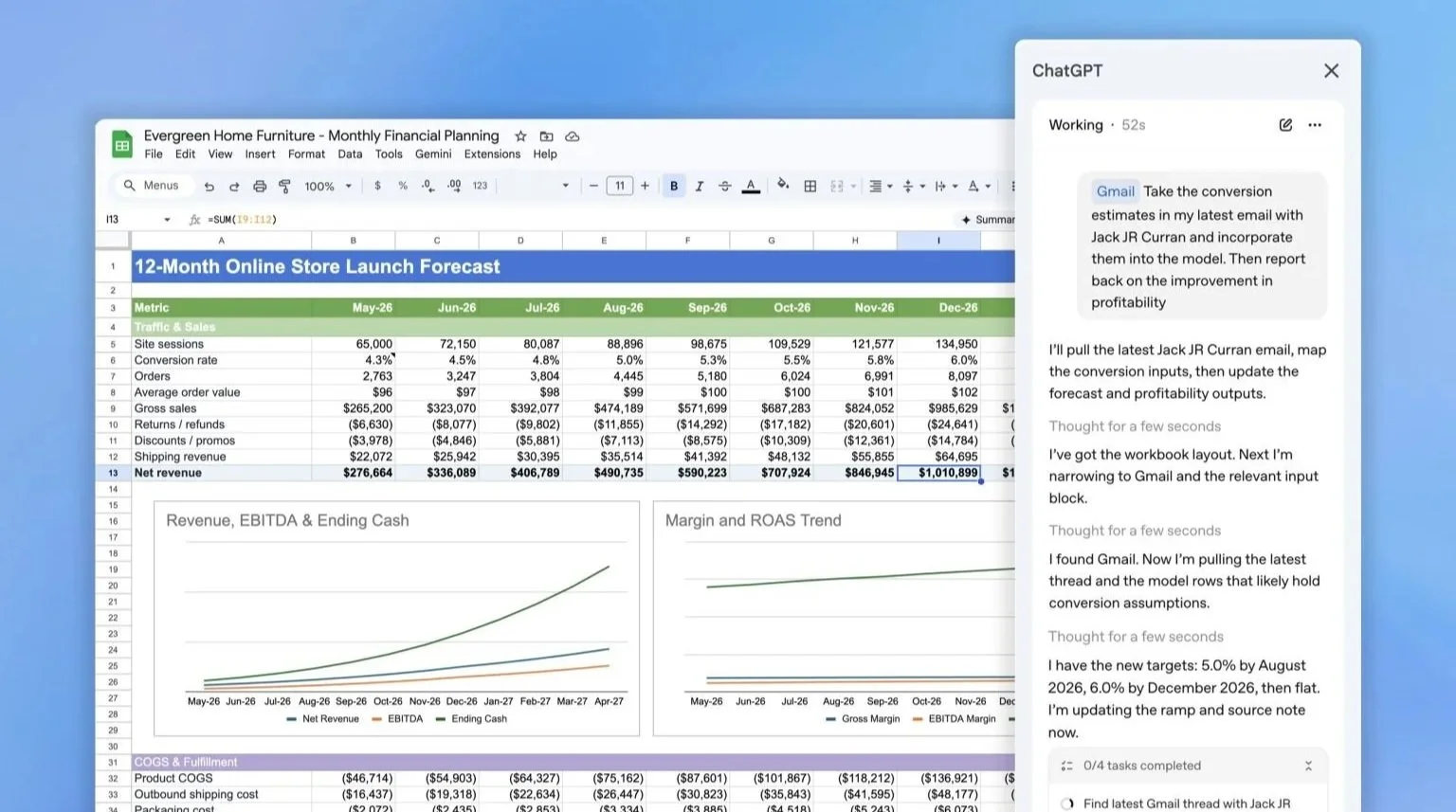

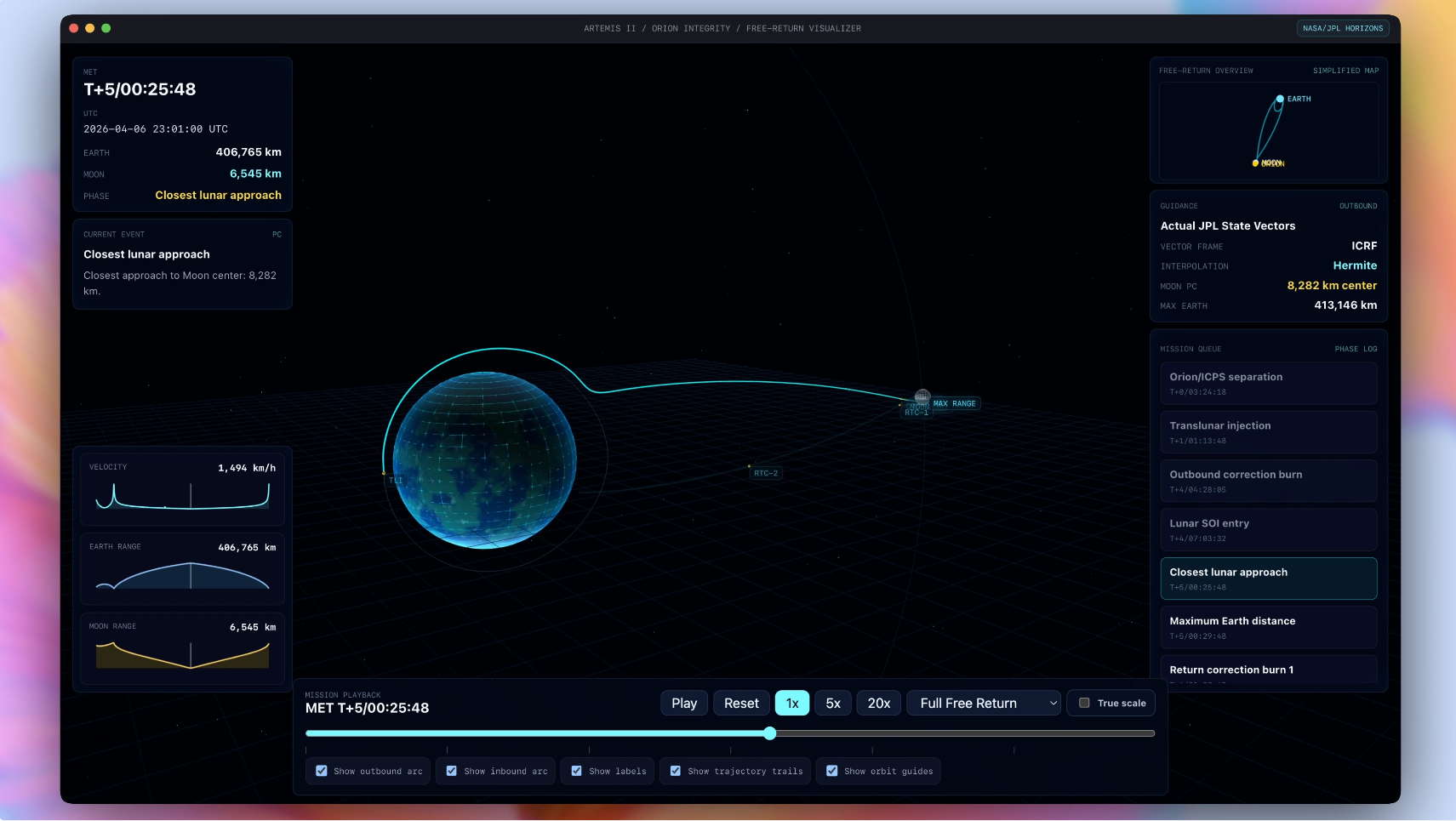

Professor Lones identifies four applications of generative AI in machine learning, each carrying distinct risks: serving as a component within a machine learning pipeline, designing and coding those pipelines, synthesizing training data, and analyzing machine learning outputs. The risks increase significantly when LLMs are used for multiple tasks within a single system, or when they operate agentically, meaning they can autonomously use external tools to solve problems.

A central concern is that LLMs make mistakes and hallucinate information in ways that are neither predictable nor easy to evaluate, because the models operate without transparency. That opacity creates a specific problem for regulated sectors.

Professor Lones says: "In areas like medicine or finance, there are laws about being able to show that the machine learning system is reliable, and that you can explain how it reaches decisions. As soon as you start using LLMs, that gets really hard, because they're so opaque. It's important for people in the general public to be aware of the limitations of Gen AI systems."

Cost-cutting as a driver of risk

The paper draws a direct line between commercial pressure and the adoption of generative AI in machine learning. It warns that cost reduction is a primary motivation for many deployments, and that this introduces risk without equivalent reward for end users.

Professor Lones says: "Companies will deploy these systems to do things like cut costs, and this may improve the experience that end users get, but it may also have negative consequences, such as bias and unfairness."

On the complexity risk, he adds: "If you have Gen AI working in a number of different ways within your machine learning workflows or system, then they can interact in unpredictable and hard-to-understand ways. My advice at the moment is to avoid adding too much complexity in terms of how we use Gen AI in machine learning, particularly if you're in a sector that has high stakes that impact people's lives and livelihood."

A caution, not a prohibition

Professor Lones stops short of arguing against the use of generative AI in machine learning entirely, framing his position as a call for proportionate judgment. He says: "Machine learning developers need to be aware of the risks of using Gen AI in machine learning and find a sensible balance between improvements in capability and the risks that might come with that. Given the current limitations of generative AI, I'd say this is a clear example of just because you can do something doesn't mean you should."

The paper arrives as pressure mounts on EdTech and other sectors to integrate generative AI rapidly into existing systems. Machine learning is already embedded in high-stakes education and workforce contexts, including the assignment of students to programs, processing of applications, and personalized learning tools.