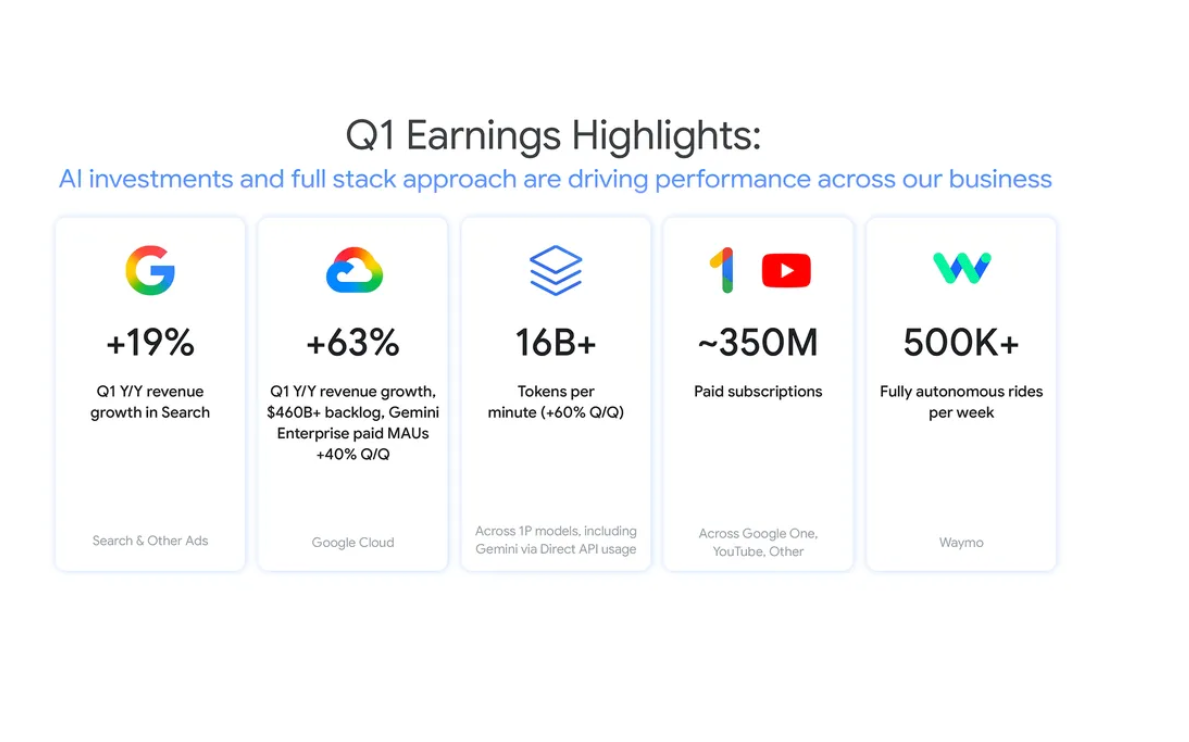

Tech giants commit $12.5M to open source security as AI pressure grows

Anthropic, AWS, GitHub, Google, Google DeepMind, Microsoft, and OpenAI have committed $12.5 million to strengthen open source software security, with funding directed through the Linux Foundation’s Alpha-Omega and Open Source Security Foundation (OpenSSF) initiatives.

The investment targets the growing strain on open source maintainers as AI accelerates vulnerability discovery and increases the volume of security issues requiring review. The update was shared on LinkedIn by Rahul Patil, CTO at Anthropic, who pointed to the fragility of the systems underpinning modern software and AI.

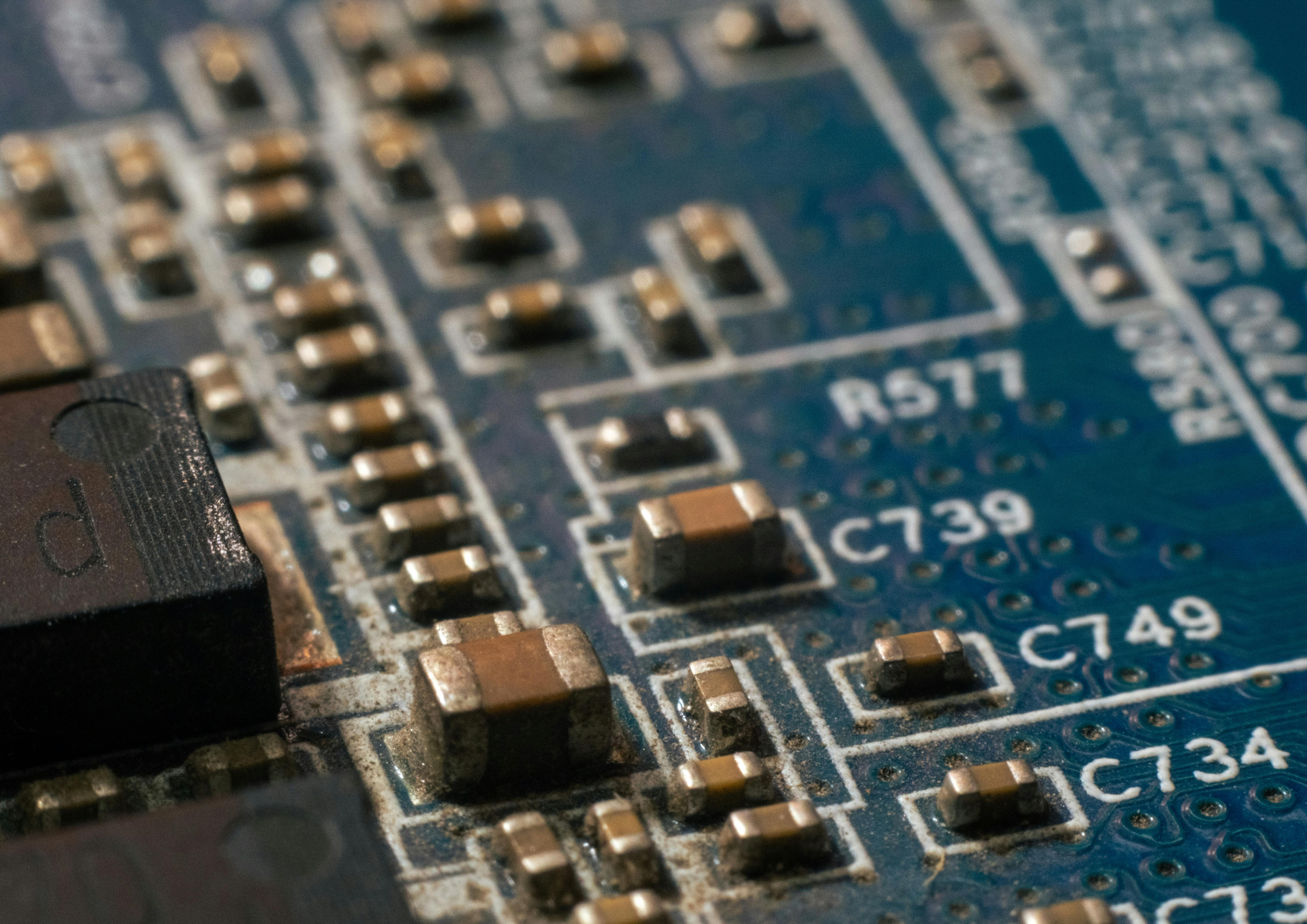

Patil said in the post: “Open source software runs the world's most critical systems. Banks, hospitals, financial markets. Most of it is maintained by small teams, often without dedicated security support.”

He continued by highlighting the downstream risk to AI systems, stating: “AI is only as trustworthy as the ecosystem it runs on. If the open source software underpinning AI development is vulnerable, nothing built on top of it is safe either. Security can't be an afterthought.”

Funding targets maintainers and tooling gaps

The funding will be used to expand security tooling, support, and processes for open source maintainers, who face a growing volume of AI-generated vulnerability reports without a matching increase in resources to handle them.

The Linux Foundation says the initiative will focus on embedding security expertise directly into projects and improving workflows so maintainers can triage and resolve issues more effectively.

Michael Winser, Co-Founder of Alpha-Omega, positioned the initiative as a continuation of earlier work embedding security into open source projects, stating: “Alpha-Omega was built on the idea that open source security should be both normal and achievable. By funding audits and embedding security experts directly into the ecosystem, we’ve proven that targeted investment works.”

He framed the next phase as scaling that model across more projects, adding: “Now, we’re scaling that expertise. We are excited to bring maintainer-centric AI security assistance to the hundreds of thousands of projects that power our world.”

Greg Kroah-Hartman of the Linux kernel project pointed to the operational pressure maintainers are now facing, particularly from AI-generated reports, noting: “Grant funding alone is not going to help solve the problem that AI tools are causing today on open source security teams.”

He emphasized the role of OpenSSF’s existing infrastructure in addressing this, stating: “OpenSSF has the active resources needed to support numerous projects that will help these overworked maintainers with the triage and processing of the increased AI-generated security reports they are currently receiving.”

AI shifts the scale of security risk

The rise of AI tools is increasing both the speed and scale of vulnerability detection, creating new operational challenges for teams maintaining widely used software components.

Steve Fernandez, General Manager of OpenSSF, positioned the initiative as part of a broader lifecycle approach to software security, stating: “Our commitment remains focused: to sustainably secure the entire lifecycle of open source software.”

He linked this directly to frontline maintainers, adding: “By directly empowering the maintainers, we have an extraordinary opportunity to ensure that those at the front lines of software security have the tools and standards to take preventative measures to stay ahead of issues and build a more resilient ecosystem for everyone.”

Industry contributors also pointed to the need for coordinated action as AI reshapes software development and risk exposure.

Vitaly Gudanets, CISO at Anthropic, framed the investment as foundational to AI safety, stating: “The open source ecosystem underpins nearly every software system in the world, and its security can’t be taken for granted.”

He connected this to the broader AI transition, adding: “Ensuring the world safely navigates the transition to transformative AI means investing in the foundations it runs on.”

Mark Russinovich, CTO, Deputy CISO and Technical Fellow at Microsoft Azure, positioned open source as central to modern infrastructure, stating: “Open source software is a critical part of the modern technology landscape.”

He highlighted the compounding effect of AI on risk, adding: “As AI accelerates both software development and the discovery of vulnerabilities, the industry must step up to protect this shared infrastructure.”

Dane Stuckey, CISO at OpenAI, framed the moment as requiring coordinated industry action, stating: “This is a critical moment for global cybersecurity that requires unprecedented levels of collaboration across the industry, and sustained commitment.”

He reinforced the role of maintainers within that system, adding: “Maintainers make an extraordinary contribution, and this program is an important step in providing them the support they need.”
