OpenAI unveils five-pillar youth safety blueprint for AI chatbots and backs it with €500,000 in EMEA grants

The ChatGPT maker wants European regulators to rethink how they protect young people online, publishing a framework that spans age assurance, parental controls, and manipulative design while funding 12 organizations working on AI literacy, mental health, and digital resilience.

OpenAI has released what it calls a European Youth Safety Blueprint, a policy document outlining five principles the company wants European regulators to adopt when writing rules around young people's use of AI chatbots.

Alongside the blueprint, the company has announced 12 recipients of its EMEA Youth and Wellbeing Grant, a €500,000 fund supporting organizations working on youth safety, AI literacy, and mental health across Europe, the Middle East, and Africa.

The blueprint lands at a moment when governments across multiple continents are racing to tighten protections for young people online. Australia became the first country to enforce a nationwide ban on social media accounts for users under 16, which took effect in December 2025.

France passed a bill in January 2026 to ban social media for under-15s, citing a health emergency, while Spain announced plans for its own under-16s ban in February 2026. Austria, Denmark, and Slovenia are drafting age-based restrictions, and Greece, Italy, and Ireland are also exploring social media bans at various thresholds.

In the UK, the government launched a consultation in January 2026 on further measures to keep children safe online, including the option of banning social media for children under 16 and raising the digital age of consent.

The House of Lords subsequently backed a Conservative amendment to ban smartphones during the school day, and the government has moved to put its existing school phone ban guidance on a statutory footing through the Children's Wellbeing and Schools Bill.

At EU level, the European Commission has announced an age verification app that would allow users to prove their age without sharing personal data with platforms, and a special panel on child online safety convened by Commission President Ursula von der Leyen is expected to report recommendations by summer 2026.

Ann O'Leary, VP Global Policy at OpenAI, says: "Today's young people will be the first generation to grow up with AI as part of everyday life, shaping how they learn, create, and prepare for the future. Getting this right matters and we look forward to working with European policymakers and civil society towards that goal."

Blueprint targets emotional dependency and manipulative design

The regulatory push is not limited to social media. AI chatbots have come under increasing legal scrutiny following a series of lawsuits in the United States.

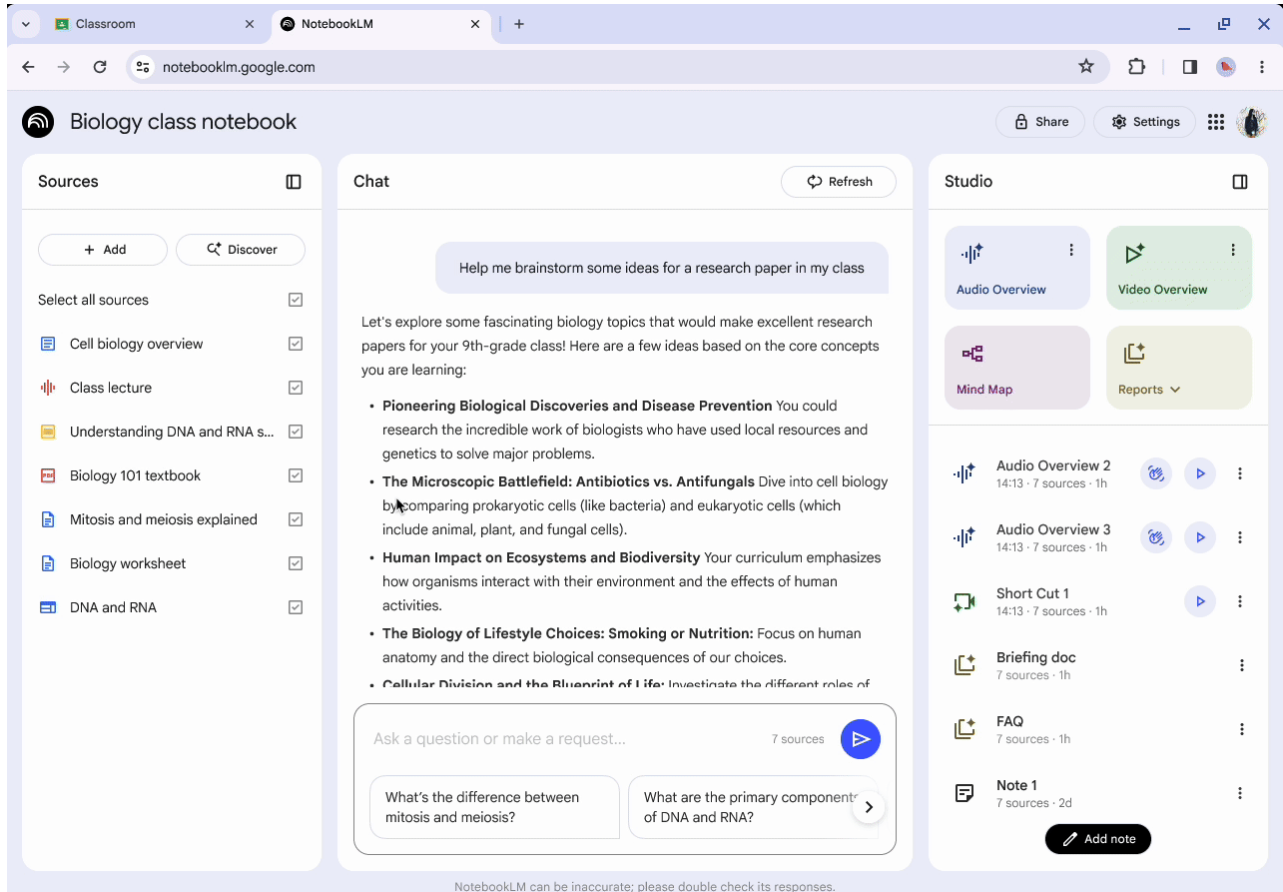

Against that backdrop, OpenAI's blueprint is notably specific about what AI systems should not do. It calls for regulation that would prohibit manipulative design targeting minors, including features that encourage emotional overreliance, secrecy from parents, or confusion about whether a user is speaking with a machine or a human.

Systems should avoid initiating or reinforcing behaviors that could mislead young people into perceiving an AI as conscious, emotionally sentient, a friend, a romantic partner, or a trusted human authority figure, the document states.

On content restrictions, the blueprint sets out a minimum policy baseline for under-18 users. AI systems should not encourage suicide or self-harm, should prohibit graphic or immersive sexual and violent content, including through role-playing, and should not facilitate access to dangerous or illegal substances.

The document also calls for protections against content that reinforces harmful body ideals, such as appearance ratings or restrictive diet coaching, and against features that encourage young people to isolate themselves or keep secrets from trusted adults.

AI systems used by young people should also include wellbeing interventions such as break reminders, signposting to crisis helplines when distress is detected, and guidance toward offline support from parents or professionals, the blueprint adds.

On parental controls, the framework goes into considerable detail. It calls for parents to be able to link their account with their teenager's account through a simple email invitation; manage privacy and data settings, including the ability to turn off memory and chat history; receive alerts when their teen's activity suggests an intent to self-harm; and set blackout hours to ensure time offline. Parents should also be notified when a young person modifies or disables a safety or privacy setting that the parent had previously enabled.

The framework pushes for mandatory child safety policies from AI providers, including published explanations of safeguards and available parental tools. On age assurance, OpenAI argues for a flexible, multi-layered approach that uses risk-based estimation tools rather than collecting sensitive personal data, and defaults to a more protective experience when a user's age cannot be confirmed.

Grant recipients span 12 organizations across ten countries

The €500,000 EMEA Youth and Wellbeing Grant, launched in January, has now named its initial cohort. Recipients include the Centre for Information Policy Leadership, which is researching AI-driven age estimation; East Europe Foundation in Ukraine, which is studying how teenagers in conflict-affected countries use AI for learning and mental health; and Italy's Telefono Azzurro, which is building an AI-based mental health and digital wellbeing platform for teens called AzzurroChat.

Other funded projects include e-Enfance in France, delivering youth digital literacy training; FSM in Germany, developing AI literacy tools for parents and educators; Luma in Kenya, building an AI-powered e-tutor for young people in remote communities; and Mental Health Innovations in the UK, evaluating chatbot-based signposting into crisis support.

The list also includes Parent Zone in the UK, working on AI literacy for parents; Teen Turn in Ireland, providing AI skills programs for girls from disadvantaged backgrounds; OPEN in France, extending research into the impact of AI on young people; Open Source Association in Jordan, researching AI-assisted reporting systems for survivors of trafficking and gender-based violence; and the UNICRI Centre for AI and Robotics, supporting AI literacy for teachers and schools globally.

Broader context and existing commitments

Rafaela Nicolazzi, EMEA Privacy and Consumers Protection Lead in Global Affairs at OpenAI, wrote on LinkedIn that the blueprint "focuses on practical measures" and described the grant recipients as "doing incredible work across communities, schools, crisis support services, AI literacy, and research."

OpenAI has previously introduced parental controls for ChatGPT and updated its model spec with under-18 principles. The company is also a founding member of the Beneficial AI for Children coalition launched at the 2025 AI Action Summit in Paris, and has signed a Vatican declaration on children's rights and dignity in AI development.

The blueprint arrives as the EU, UK, and individual member states weigh how to apply frameworks such as the GDPR, the AI Act, the Digital Services Act, and the UK Online Safety Act to AI products used by young people. OpenAI is also working with governments in Estonia, Greece, Italy, and the UK on deploying AI in educational settings, including a partnership with Estonia's University of Tartu on measuring AI learning outcomes.

If you or someone you know is affected by the issues raised in this article, support is available. In the UK, contact the Samaritans on 116 123 (free, 24/7). Young people can also contact Childline on 0800 1111. In the US, contact the Suicide and Crisis Lifeline by calling or texting 988 (free, 24/7).