Meta and YouTube found liable in landmark social media addiction case

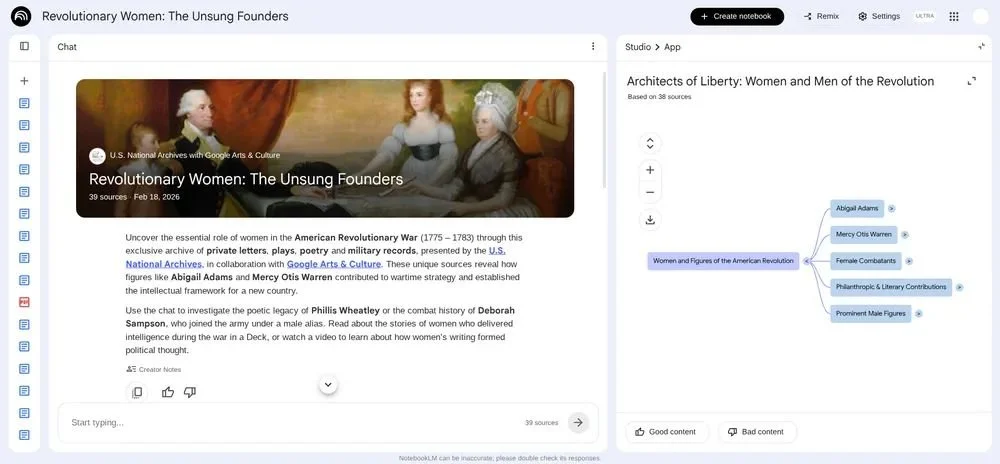

California verdict puts platform design, youth safety, and edtech implications under renewed scrutiny.

A Los Angeles jury has found Meta and Google liable for designing social media platforms that harmed a young user, in a verdict that is expected to influence how digital platforms, including those used in education, approach safety, design, and accountability.

The case centered on a woman, now 20, who argued that features on Instagram and YouTube contributed to her compulsive use of the platforms from a young age. The jury awarded $6 million in damages and concluded that both companies’ design choices played a role in harm to her mental health.

Verdict shifts focus to platform design and responsibility

The decision places platform design itself under direct legal scrutiny, rather than only the user-generated content that platforms host, for which they have historically been shielded from liability under existing regulations.

Jurors determined that the companies acted with “malice, oppression, or fraud” in how their platforms operated, with Meta responsible for the majority of the damages.

The ruling comes alongside a separate case in New Mexico, where Meta was also found liable in relation to child safety concerns, adding to growing legal pressure across multiple jurisdictions.

Iona Silverman, Intellectual Property & Media Partner at Freeths LLP, says: “This case is a watershed moment. The public, governments and now the US legal system have made clear that social media companies must bear responsibility for the products that they create.”

She adds: “Historically the platforms were protected, in the EU and UK for example by the hosting defence which said that the platforms were mere hosts of user generated content. That will no longer wash.”

Silverman also says: “While I don’t expect to see similar class action in the UK, this most recent decision will put increased pressure on the government to ensure the Online Safety Act is enforced, and to implement further measures to ensure the safety of young people online.”

Campaigners call for stronger action on youth safety

The verdict has been welcomed by campaign groups calling for tighter controls on how platforms are designed and used by younger audiences.

In a statement following the decision, James P. Steyer, Founder and CEO of Common Sense Media, says: “First of all, we owe the plaintiff and their family an enormous debt of gratitude for coming forward with this complaint.

“This verdict is a powerful recognition of what Common Sense Media and families across the country have known for years: Social media companies deliberately design their platforms to keep kids hooked, consequences to their mental and physical health be damned. The momentum for change is no longer building. It's here.

“Social media giants would never have faced trial if they had prioritized kids' safety over engagement. Instead, they buried their own research showing children were being harmed, and used kids and society as guinea pigs in massive, uncontrolled, and wildly profitable experiments. Now, executives are being held to account.

“This verdict, along with other recent court rulings, should embolden lawmakers in California and across the country to use their authority to force real change in how these companies design and operate their products. We must keep pushing, advancing, and enforcing stronger laws for social media and AI youth safety.”

The case is one of several moving through US courts, with attention increasingly focused on how engagement-driven design affects younger users.

Platforms push back as legal pressure grows

Meta and Google have both said they disagree with the verdict and intend to appeal.

Responding to the ruling, Meta says: “Teen mental health is profoundly complex and cannot be linked to a single app.

“We will continue to defend ourselves vigorously as every case is different, and we remain confident in our record of protecting teens online.”

A spokesperson for Google says: “This case misunderstands YouTube, which is a responsibly built streaming platform, not a social media site.”

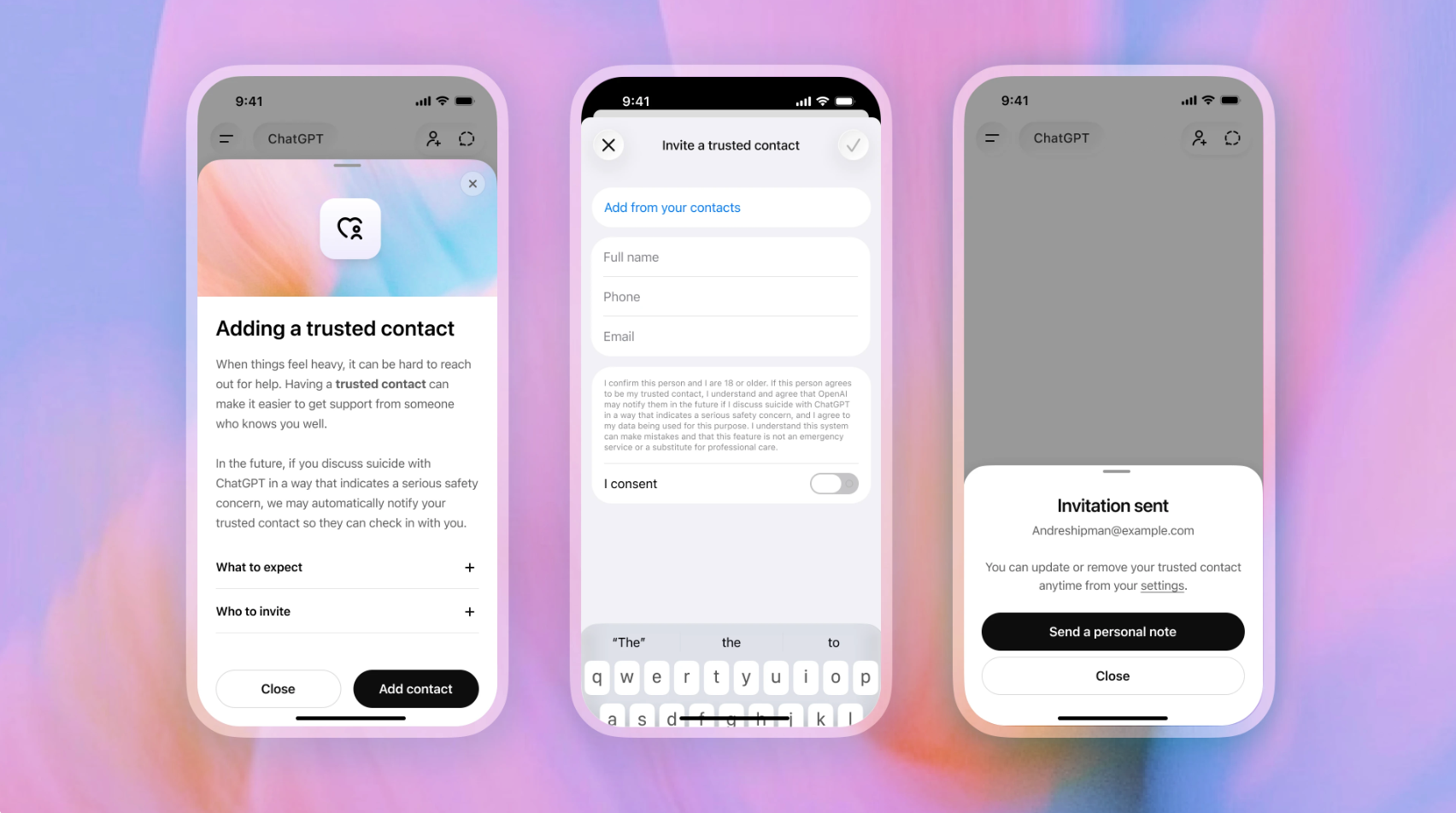

Prior to the ruling, Meta had outlined a range of safety measures in recent updates, including parental supervision tools, default private accounts for teens, and alerts linked to repeated searches related to self-harm.