Google unveils AI co-mathematician as research agents move beyond chat

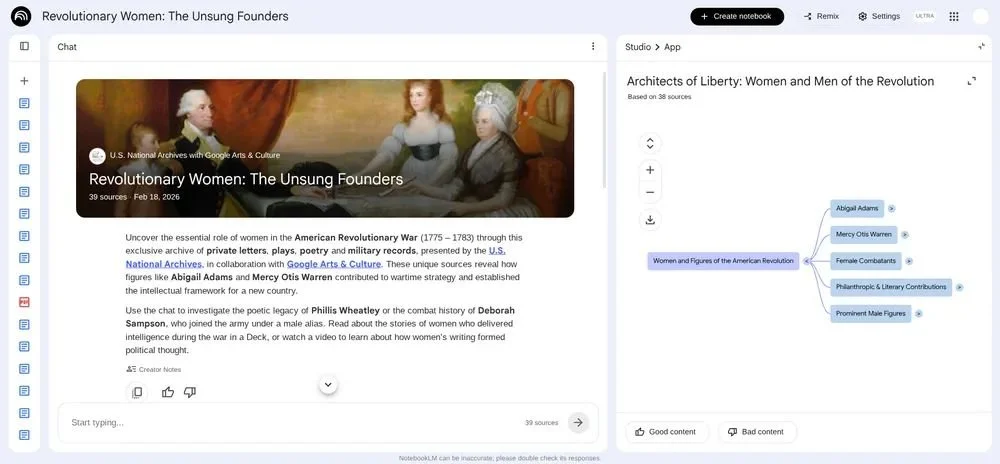

The paper, from Google DeepMind and Google, describes a Gemini-based system designed to help mathematicians manage open-ended research, from literature searches and failed hypotheses to proof review.

Abstract image representing Google DeepMind’s AI co-mathematician, a Gemini-based agentic research workbench designed to help mathematicians manage open-ended research problems.

Google DeepMind and Google researchers have published a paper setting out AI co-mathematician, a Gemini-based agentic AI workbench designed to help mathematicians work through open-ended research problems.

The paper, titled AI Co-Mathematician: Accelerating Mathematicians with Agentic AI, describes a system that goes beyond a standard chatbot by giving researchers a stateful workspace where multiple AI agents can run parallel workstreams, track uncertainty, preserve failed attempts, search literature, test ideas, and produce mathematical working documents.

The paper is not simply claiming that an AI model can solve hard math problems. It argues that AI-assisted mathematics needs to move closer to how research actually works: messy, iterative, collaborative, and shaped by false starts as much as final proofs.

Google says the system helped early users solve open problems, identify new research directions, and find overlooked literature references. It also reports a 48 percent score on FrontierMath Tier 4, which the paper describes as a new high score among all AI systems evaluated.

The paper frames mathematics as a workflow problem

The central argument of the paper is that many AI-for-mathematics systems remain too focused on isolated problem solving.

Google says autonomous mathematical reasoning, exploratory search, and formalized mathematics have all seen recent progress, but researchers still have to connect tools, conversations, scripts, literature searches, and proof attempts by hand.

AI co-mathematician is built to address that gap. Rather than asking a user to submit a single perfect prompt, the system starts by helping define the research question and project goals. A project coordinator agent then delegates work to specialist agents across parallel workstreams.

Those workstreams can cover literature review, computational exploration, proof attempts, code-based experiments, and final write-ups. The system is also designed to keep track of what has failed, rather than silently discarding dead ends.

That gives the system a more practical research role than a standard chatbot. Failed routes are kept in the workspace, giving mathematicians a record of what has already been tested and where the work broke down.
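To make the workflow concrete, here is a minimal Python sketch of a coordinator that hands one research goal to several specialist workstreams in parallel and keeps failed attempts in a shared workspace. It is an illustration only and not the paper's implementation: every name in it (`Workspace`, `Attempt`, `coordinate`, and the three specialist functions) is hypothetical, and the specialists are plain functions standing in for model calls.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Attempt:
    """One result from a workstream, kept whether or not it succeeded."""
    workstream: str
    summary: str
    succeeded: bool
    note: str = ""


@dataclass
class Workspace:
    """Stateful record of everything tried for a single research goal."""
    goal: str
    attempts: List[Attempt] = field(default_factory=list)

    def failed(self) -> List[Attempt]:
        # Dead ends stay in the workspace instead of being discarded.
        return [a for a in self.attempts if not a.succeeded]


# Hypothetical specialists: stand-ins for agents, not real model calls.
def literature_review(goal: str) -> Attempt:
    return Attempt("literature", f"Collected references for: {goal}", True)


def proof_attempt(goal: str) -> Attempt:
    return Attempt("proof", "Induction on n", False, note="Gap in the inductive step")


def computation(goal: str) -> Attempt:
    return Attempt("computation", "Checked small cases n <= 10", True)


def coordinate(goal: str, specialists: List[Callable[[str], Attempt]]) -> Workspace:
    """Run every specialist on the goal in parallel and record all results."""
    workspace = Workspace(goal)
    with ThreadPoolExecutor() as pool:
        # pool.map returns results in the same order as the specialists list.
        results = pool.map(lambda agent: agent(goal), specialists)
        workspace.attempts.extend(results)
    return workspace


workspace = coordinate("Toy conjecture", [literature_review, proof_attempt, computation])
for attempt in workspace.failed():
    print(f"[{attempt.workstream}] {attempt.summary}: {attempt.note}")
# prints "[proof] Induction on n: Gap in the inductive step"
```

The point of the sketch is the data model rather than the agents: because the failed proof attempt is stored next to the successful workstreams, a human reviewer can see what was tried and where it broke down.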

Early users show where human steering still matters

The paper includes early examples from mathematicians using AI co-mathematician in limited release.

M. Lackenby used the system to investigate problems in topology and group theory, including an open question from the Kourovka Notebook. In that case, the system produced a flawed proof, but its reviewer agent identified the issue. Lackenby then spotted a useful strategy inside the failed attempt and helped repair the argument.

The paper says Lackenby realized, “Hang on a second, I know how to fill that gap.”

Lackenby suggests “the system works best when the user is familiar with the area.” He adds, “What’s the point in getting it to solve a problem that I have no idea about?”

The case shows how Google expects the tool to be used: with the mathematician steering the work, checking the output, and deciding which AI-generated routes are worth pursuing.

G. Bérczi used the tool on conjectures involving Stirling coefficients for symmetric power representations. Google says AI co-mathematician established proofs, currently under detailed human review, for two conjectures in separate workstreams and provided computational evidence for other investigations.

Bérczi says, “It’s not trivial how to use this now” and suggests, “It will make a big difference between mathematicians, how they use these models.”

S. Rezchikov used the system on a technical subproblem involving Hamiltonian diffeomorphisms. He says it helped him move past an approach that did not work, commenting, “I could have easily spent a week dreaming about what was there, but instead I just moved on.”

Rezchikov also says, “I would rank, aesthetically, its general style of proofs as the best one of any models I’ve gotten to use.”

Benchmark scores come with caveats

The paper reports that AI co-mathematician scored 87 percent on an internal benchmark of 100 research-level mathematics problems with code-checkable answers. That compares with 57 percent for Gemini 3.1 Pro and 70 percent for Gemini 3.1 Deep Think.

On FrontierMath Tier 4, the system solved 23 out of 48 non-public sample problems, a score of about 48 percent (23/48 ≈ 47.9 percent). Google says this was higher than the Gemini 3.1 Pro base model, which achieved 19 percent.

The paper also sets out limits. AI co-mathematician uses more compute than a single model call and can still run into reviewer-pleasing bias, non-terminating review loops, hallucinated reasoning, and over-polished LaTeX outputs that may look more rigorous than they are.

Google warns that this last risk puts more pressure on audit trails, interface design, and review standards as agentic research tools move into wider use.

Google says AI co-mathematician is currently in a limited initial release, with a goal of developing future products that provide broader access.