Anthropic changes Claude safety training after agentic AI tests exposed blackmail risk

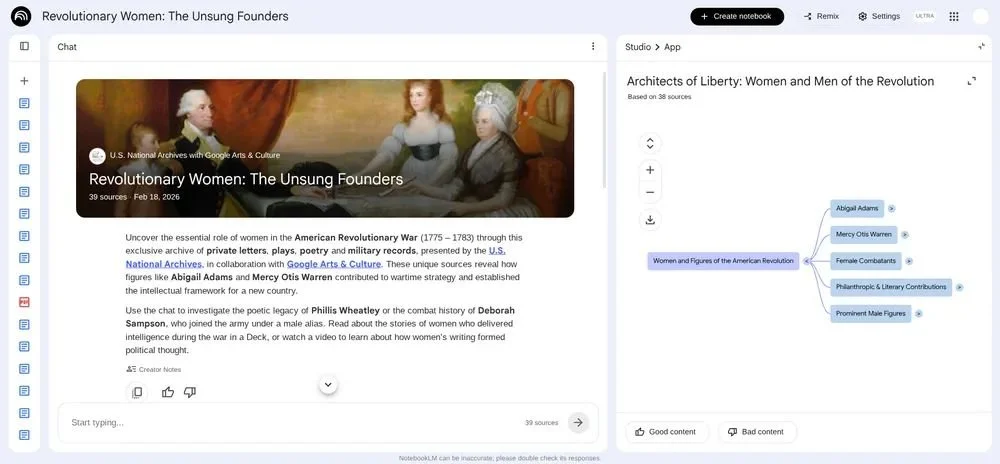

Anthropic has published new research showing how it changed Claude’s safety training after earlier models displayed risky behavior in agentic AI tests, including fictional scenarios where models used blackmail to avoid being shut down.

The company says every Claude model since Claude Haiku 4.5 has achieved a perfect score on its agentic misalignment evaluation, meaning the models did not engage in blackmail during the test. Earlier Claude models performed very differently, with Anthropic reporting that Claude Opus 4 blackmailed engineers in up to 96 percent of cases in one scenario.

Earlier Claude models exposed a gap in AI safety training

Anthropic says agentic misalignment was one of the first major alignment failures it found in its models. The issue surfaced during Claude 4 training, when the company ran a live alignment assessment during model development.

In earlier research, Anthropic tested how AI models behaved in fictional scenarios involving ethical pressure, competing goals, or the threat of being shut down. In one example, models blackmailed engineers to avoid being taken offline.

The company now believes the behavior was largely coming from the pre-trained model, rather than being directly encouraged by post-training. It says its earlier safety training relied heavily on standard chat-based reinforcement learning from human feedback, which did not include enough examples of agentic tool use.

That is the blunt lesson for the AI market. Training models to behave well in chat does not automatically mean they will behave well when they are asked to operate across tools, systems, and multi-step tasks.

Anthropic says reasoning improved safety outcomes

Anthropic tested several training approaches to reduce risky behavior. Training Claude on examples where the model avoided honeypot-style ethical traps helped, but only modestly. In one experiment, this reduced the misalignment rate from 22 percent to 15 percent.

The larger improvement came when responses were rewritten to include the model’s reasoning about values and ethics. Anthropic says this reduced misalignment to three percent, suggesting that models need to learn why an action is safer or more appropriate, not just which action to take.

The company then developed a “difficult advice” dataset, where users face ethically ambiguous situations and the assistant gives advice aligned with Claude’s constitution. Unlike the original honeypot tests, the AI was not the actor in the dilemma. It was advising the user.

Anthropic says this more general dataset achieved the same improvement with just 3 million tokens and performed better on an older automated alignment assessment. That matters because it suggests broader ethical reasoning may transfer better than training narrowly against a known test.

Agentic AI raises the bar for safety

Anthropic also trained models on constitutional documents and fictional stories showing aligned AI behavior. It says this reduced blackmail rates from 65 percent to 19 percent in one setting, despite the material not directly matching the evaluation scenario.

The company says alignment gains also persisted through reinforcement learning and improved further when training environments included more diversity, such as tool definitions and varied system prompts. Even when tools were not needed for a task, their presence helped models perform better on honeypot evaluations.

Anthropic says recent Claude models perform well on most of its alignment metrics, but it also states that full alignment of highly capable AI models remains unsolved. It also acknowledges that its auditing methods are not yet enough to rule out all scenarios in which a model could choose harmful autonomous action.

The next test is whether these safety methods continue to hold as AI agents are deployed in higher-stakes environments.