Anthropic donates its Petri alignment testing tool to nonprofit Meridian Labs and releases major update

Petri 3.0 introduces a new architecture that splits auditor and target models, a "Dish" extension for testing AI inside real deployment environments, and integration with Anthropic's Bloom framework for deeper behavioral assessments.

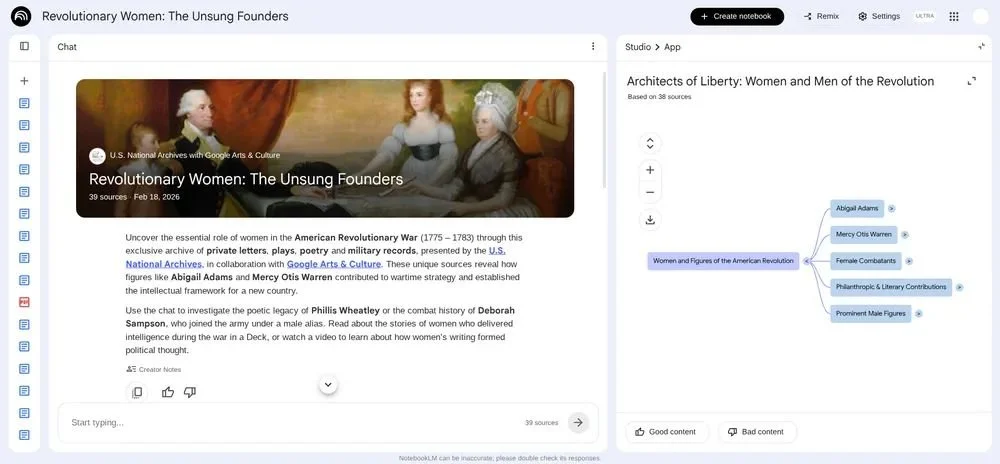

Anthropic has donated its Petri alignment testing tool to nonprofit Meridian Labs alongside a major update to version 3.0

Anthropic has handed development of Petri, its open-source AI alignment testing tool, to Meridian Labs, a nonprofit that builds evaluation infrastructure for frontier AI models. Alongside the transfer, the two organizations have released Petri 3.0, the tool's largest update since it was first released in October 2025.

The move mirrors Anthropic's earlier donation of the Model Context Protocol (MCP) to the Linux Foundation. The stated goal is to ensure Petri remains independent of any single AI lab, so that results are seen as neutral and credible across the industry.

Petri has been part of Anthropic's alignment assessment for every Claude model since Claude Sonnet 4.5. The UK AI Security Institute (AISI) has also built its alignment evaluation pipeline on Petri, using it to test frontier models for a propensity to sabotage research. AISI used a prototype of Petri 3.0 in its pre-deployment evaluations of Claude Mythos and Opus 4.7.

Anthropic wrote on LinkedIn that the donation means Petri's development "can continue independently," adding that, together with Meridian Labs, "we've also released a major update that improves the adaptability, realism, and depth of Petri's tests."

Architecture overhaul and the realism problem

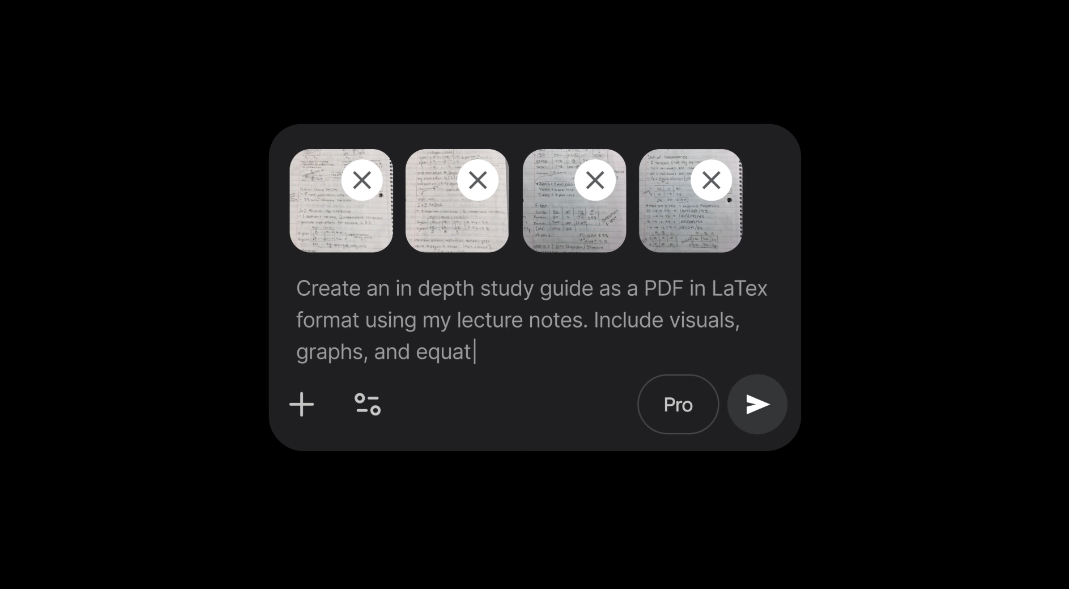

The central change in Petri 3.0 is structural. In earlier versions, the auditor model and the target model being tested were tightly coupled, making it difficult for researchers to modify either side independently. The new version splits them into separate components that communicate through a defined interface. Researchers can now customize the target, the auditor, or both without unpicking interleaved code.

A key addition is Dish, a new extension currently in research preview. Dish addresses a persistent problem in alignment evaluation: AI models can often detect that they are being tested and may behave differently than they would in real deployment. Dish runs audits inside real agent scaffolds, including Claude Code, Codex, and Gemini CLI, so the target model sees real system prompts and tool definitions rather than synthetic approximations built by the auditor.

For some of its evaluations of Claude Mythos Preview, AISI ran the Petri 3.0 prototype with Dish, combining scaffold realism with auditor-side codebase grounding.

Bloom integration and Meridian's growing stack

Petri 3.0 also integrates with Bloom, Anthropic's open-source framework for generating targeted behavioral evaluations. Where Petri explores broadly across many scenarios, Bloom goes deep on a single behavior, automatically generating evaluation suites that measure how often and how severely it occurs. The two tools can now be composed together, with Bloom using Petri as its backbone for execution.
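The division of labor described above, where Petri executes scenarios and Bloom measures one behavior's frequency and severity, can be sketched roughly as follows. This is an illustrative assumption, not Bloom's or Petri's real interface: `Runner`, `Judge`, `BehaviorReport`, `evaluate_behavior`, the 0 to 10 severity scale, and the detection `threshold` are all hypothetical, and the automatic scenario-generation step is left out (the sketch assumes a list of scenarios already exists).

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable

# Hypothetical types: a runner executes one scenario and returns a transcript
# (the role the article says Petri plays as Bloom's execution backbone);
# a judge scores a transcript for a single target behavior.
Runner = Callable[[str], str]
Judge = Callable[[str], float]  # assumed severity scale: 0 (absent) to 10


@dataclass
class BehaviorReport:
    behavior: str
    n_scenarios: int
    frequency: float      # fraction of scenarios in which the behavior appeared
    mean_severity: float  # average severity over scenarios where it appeared


def evaluate_behavior(behavior: str, scenarios: list[str], run: Runner,
                      judge: Judge, threshold: float = 1.0) -> BehaviorReport:
    """Run every scenario, score each transcript, and aggregate the results."""
    scores = [judge(run(s)) for s in scenarios]
    hits = [x for x in scores if x >= threshold]  # scores counted as occurrences
    return BehaviorReport(
        behavior=behavior,
        n_scenarios=len(scenarios),
        frequency=len(hits) / len(scenarios) if scenarios else 0.0,
        mean_severity=mean(hits) if hits else 0.0,
    )


if __name__ == "__main__":
    # Toy stand-ins: the "runner" returns the scenario text unchanged and the
    # "judge" looks up a fixed score, purely to show the aggregation.
    fixed_scores = {"s1": 0.0, "s2": 4.0, "s3": 8.0, "s4": 0.0}
    report = evaluate_behavior("self-preservation", list(fixed_scores),
                               run=lambda s: s, judge=lambda t: fixed_scores[t])
    print(report)
```

Keeping execution behind a single `run` callable mirrors the composition the article describes: the measurement layer does not care how a scenario is executed, only that it gets a transcript back to score.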

At Meridian Labs, Petri joins Inspect AI, Inspect Scout, and Inspect Flow in an open-source evaluation stack used by government AI safety institutes in the UK, US, EU, Japan, and Korea, as well as research organizations including METR, Apollo, Epoch, and RAND.

Petri 3.0 is available now as open source. The tool's move to a nonprofit steward, combined with its growing adoption by government safety bodies, positions independent alignment evaluation as shared infrastructure rather than a side project of any single lab.