Anthropic ramps up compute deal with Google and Broadcom as AI demand accelerates

Multi-gigawatt TPU agreement signals growing pressure on infrastructure as enterprise AI adoption and model scaling continue to rise.

Anthropic has expanded its infrastructure partnership with Google Cloud and Broadcom, securing multiple gigawatts of next-generation Tensor Processing Unit (TPU) capacity to support the continued development of its Claude AI models.

The additional compute capacity is expected to begin coming online in 2027 and reflects rising global demand for large-scale AI systems across enterprise and developer use cases.

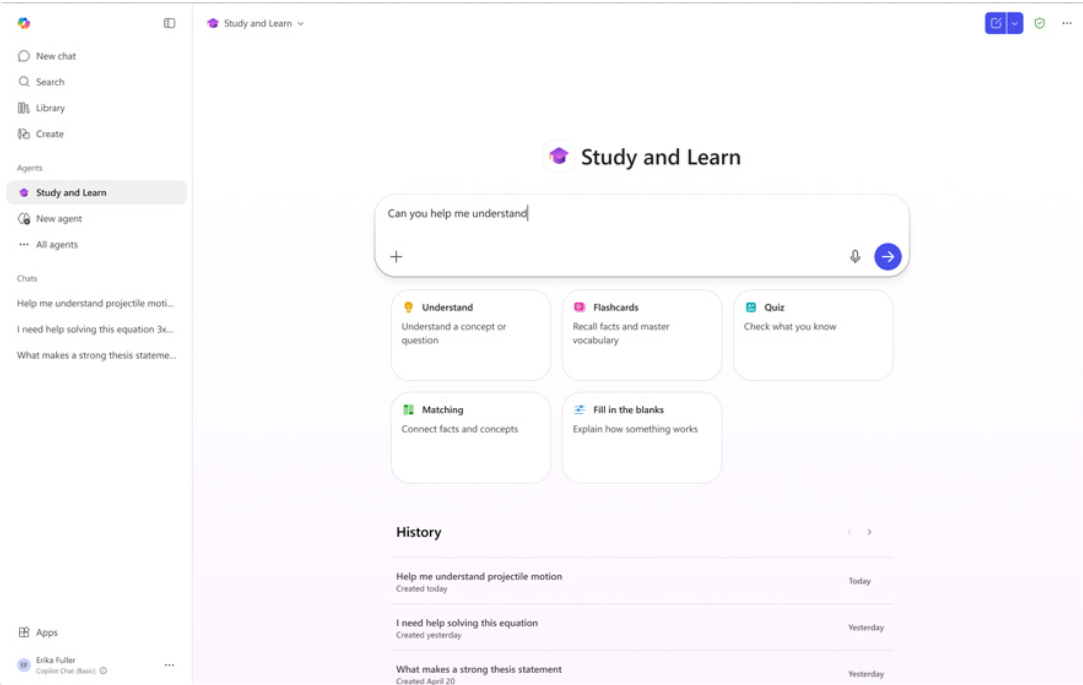

The agreement builds on Anthropic’s existing relationships with both companies and forms part of a broader push to scale infrastructure as adoption of generative AI tools increases across sectors, including education, software development, and enterprise services.

The update was highlighted on LinkedIn by Daniel Walker-Murray, MBA, who works on UK and Ireland neobanks at Google, with a focus on artificial intelligence and innovation.

Infrastructure scale increases as AI usage grows

Anthropic says the new agreement will deliver multiple gigawatts of additional compute capacity, adding to a previously announced commitment that included up to one million TPUs and more than one gigawatt of capacity scheduled to go live in 2026.

Krishna Rao, Chief Financial Officer at Anthropic, says: “This groundbreaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure: we are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development.”

The company reports that demand for Claude has increased significantly, with its revenue run rate now exceeding $30 billion, up from approximately $9 billion at the end of 2025. The number of enterprise customers spending more than $1 million annually has also doubled in recent months.

As part of the expansion, Anthropic is increasing its use of Google Cloud’s wider platform, including BigQuery, AlloyDB, and Cloud Run, to support both internal development and customer-facing applications.

The company continues to operate a multi-cloud and multi-hardware strategy, using Google TPUs alongside AWS Trainium and NVIDIA GPUs. This approach allows workloads to be distributed based on performance requirements and availability.

Anthropic notes that Claude remains available across the three largest cloud platforms: Amazon Web Services, Google Cloud, and Microsoft Azure.

US-focused investment reflects broader infrastructure shift

The majority of the new compute capacity will be located in the United States, aligning with Anthropic’s previously announced $50 billion commitment to domestic AI infrastructure.

The expansion points to increasing competition for access to high-performance compute as companies scale models and deploy AI agents across enterprise environments. For education and workforce skills, the implications are indirect but significant: access to infrastructure increasingly shapes the availability and performance of AI-powered learning tools.