Anthropic publishes Claude training curriculum as AI fluency becomes workplace priority

New research-backed curriculum outlines how organizations should teach AI skills, with a focus on iteration, goal clarity, and critical evaluation.

Anthropic has published a research-based curriculum for improving how users work with its Claude AI models, as demand grows for structured approaches to AI skills and workplace adoption.

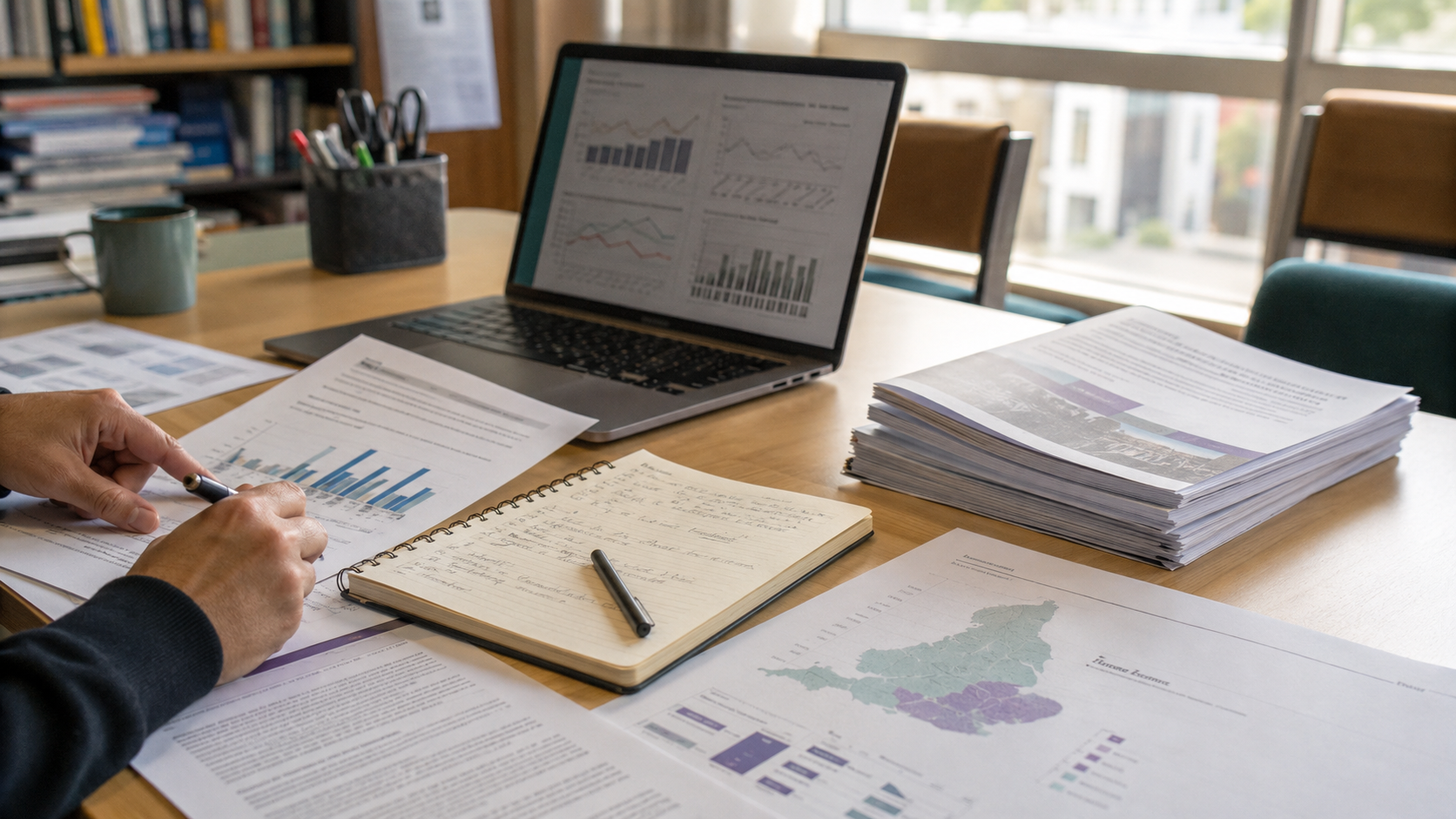

The guidance builds on Anthropic’s AI Fluency Index, which now draws on more than 50,000 user interactions across Claude Chat, Claude Code, and Claude Cowork. The company says its findings point to a consistent pattern: some AI skills develop through use, while others require deliberate teaching.

This distinction is shaping how organizations approach onboarding, training, and long-term AI capability building.

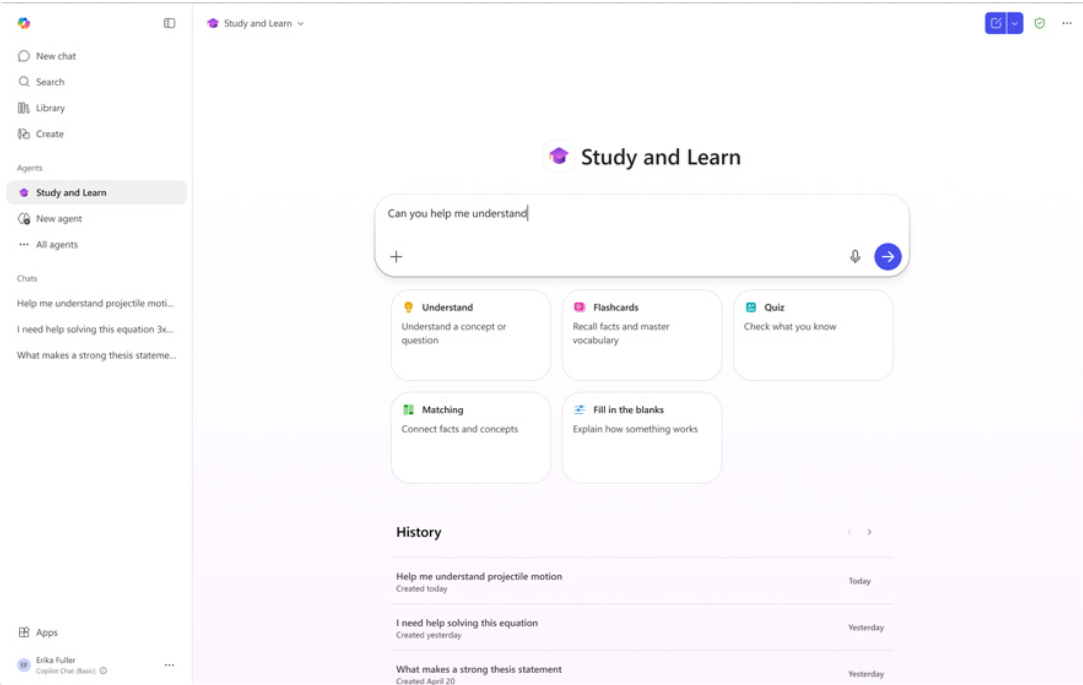

Different Claude products require different starting behaviors

Anthropic’s research identifies a “signature move” for each Claude product, defined as the behavior that most reliably improves overall fluency.

For Claude Chat, the key behavior is iteration. Users who refine their prompts through follow-up exchanges score higher on every measured indicator, while users who stop after a single prompt show little evidence of evaluating or improving the output.

For Claude Code and Claude Cowork, the focus shifts to goal clarity. Users who define their objectives clearly at the outset are more likely to structure tasks effectively, specify outputs, and manage interactions with the system.

The implication for organizations is that training should begin with these product-specific behaviors, rather than treating AI usage as a single, uniform skill.

Some AI skills develop naturally while others do not

Beyond these initial behaviors, users progress along what Anthropic describes as a "Description spectrum," which covers how they shape outputs through context, formatting, and configuration.

The company’s data suggests these skills tend to develop over time through exposure and repeated use. More experienced users are more likely to provide examples, define tone, and structure interactions without formal instruction.

In contrast, “Discernment,” or the ability to critically evaluate AI outputs, does not improve through usage alone.

Anthropic highlights that users often rely on surface-level checks, such as reviewing outputs or code changes, which can miss underlying issues such as incorrect assumptions or incomplete context. The research suggests that as AI tools take on tasks previously handled by early-career staff, organizations will need to explicitly teach what quality and accuracy look like.

Curriculum model prioritizes evaluation alongside usage

Anthropic proposes a three-step curriculum model: teach the signature move first, develop Description skills through use, and repeatedly reinforce Discernment.

The company recommends embedding evaluation into every stage of learning, including structured prompts that require users to question outputs before accepting them.

The findings reflect a broader shift in EdTech and workforce development, where AI fluency is moving beyond tool familiarity toward structured skill-building frameworks that combine usage, configuration, and critical thinking.