AI safety for young people moves up the agenda at Growing Up in the Digital Age Summit

Discussions in Dublin point to rising AI use among teens and growing focus on safety, literacy, and age-appropriate design.

Photo credit: Allison Fine Mishkin

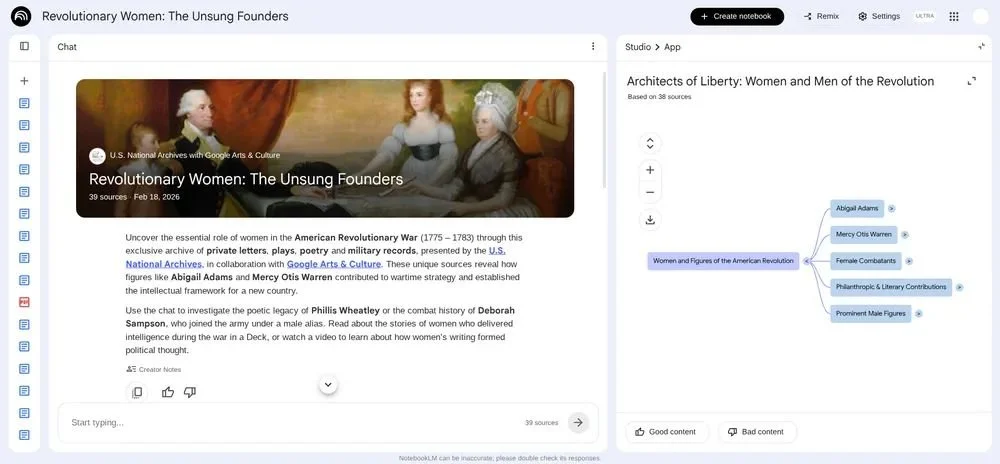

Google’s Growing Up in the Digital Age summit in Dublin brought together policymakers, researchers, and product leaders to examine how AI systems are being designed for younger users, as adoption among teens accelerates and expectations around safety and learning evolve.

The event, hosted at Google’s Safety Engineering Center, focused on how AI tools are already embedded in young people’s lives and what that means for education, digital literacy, and product design across the EdTech ecosystem.

High adoption meets uneven literacy

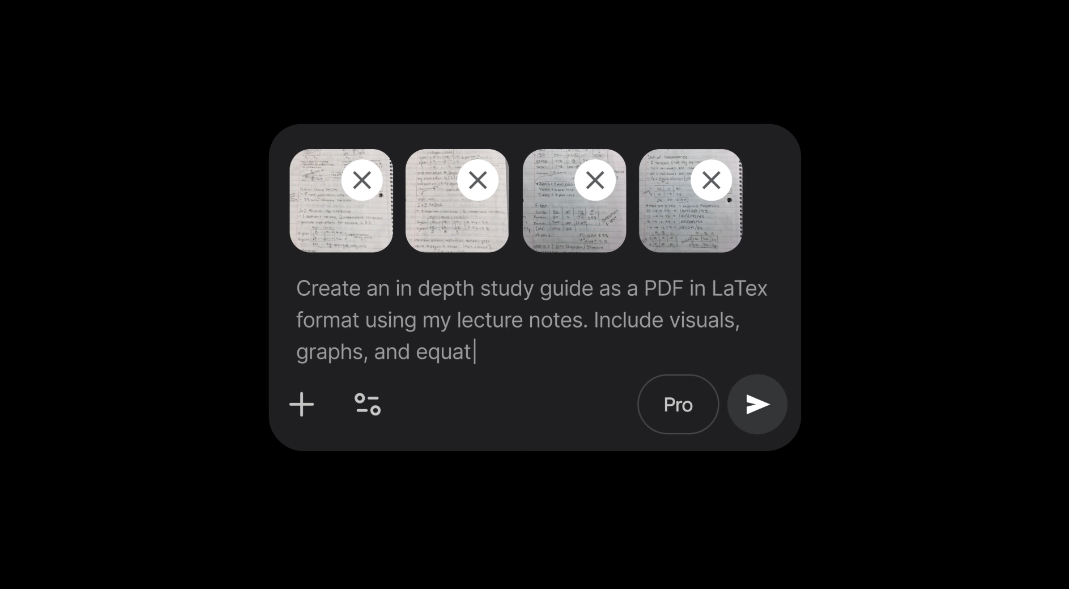

Across discussions, one theme stood out. Young people are not waiting for guidance on AI. They are already using it, often as part of everyday learning and creativity.

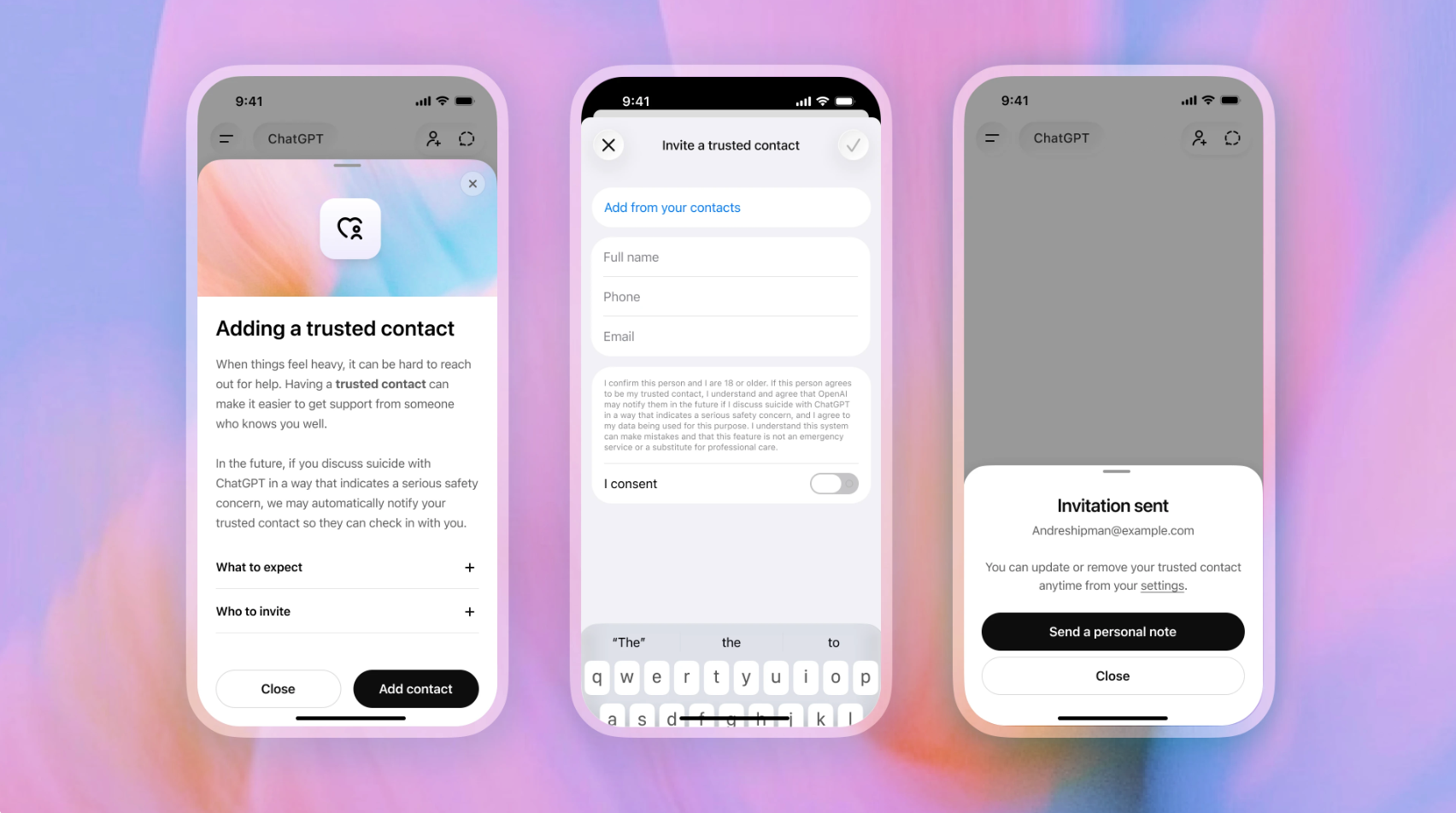

Allison Fine Mishkin, Child Safety and Wellbeing Product Policy at OpenAI, said in a LinkedIn post that “teens aren’t debating whether AI is ‘a thing.’ They’re already using it. Adoption is extremely high and teens are critical users of the tool.”

She noted that while many young users see AI as a tool for creativity, concerns remain around over-reliance. One participant highlighted the risk directly, saying: “relying on AI can short-circuit the learning process of figuring things out independently.”

Mishkin pointed to a gap between trust and critical thinking, adding that “nearly 8 in 10 teens believe online information is trustworthy, but only ~55% say they actively reflect on whether it’s true.”

She emphasized the need for broader approaches to literacy, stating that “we need to invest in community approaches to AI literacy to ensure AI literacy gets embedded in broader digital literacy curricula.”

Safety, autonomy, and design trade-offs

The summit also surfaced a more nuanced view of online safety. Rather than calling for blanket restrictions, young people and experts pointed toward more targeted interventions.

Mishkin reflected that the sentiment was not “anything goes,” but instead: “ban the bad apps, not all of them.”

This aligns with wider discussion at the event around balancing protection with autonomy. Young people described a model where supervision evolves over time, with stronger guardrails in early years and increasing independence as they grow.

Didem Özkul, Senior Researcher and Policy Adviser and Honorary Associate Professor at UCL, framed this as a design challenge, asking: “How do we proactively shape GenAI behaviour to empower—not just protect—young users?”

She argued that young people need to be treated as active participants in technology design, not passive users, and that systems should account for differences in age, context, and development.

Özkul also highlighted the role of critical thinking, noting that AI should support users in forming their own understanding rather than providing fixed answers.

Industry shifts toward embedded safeguards

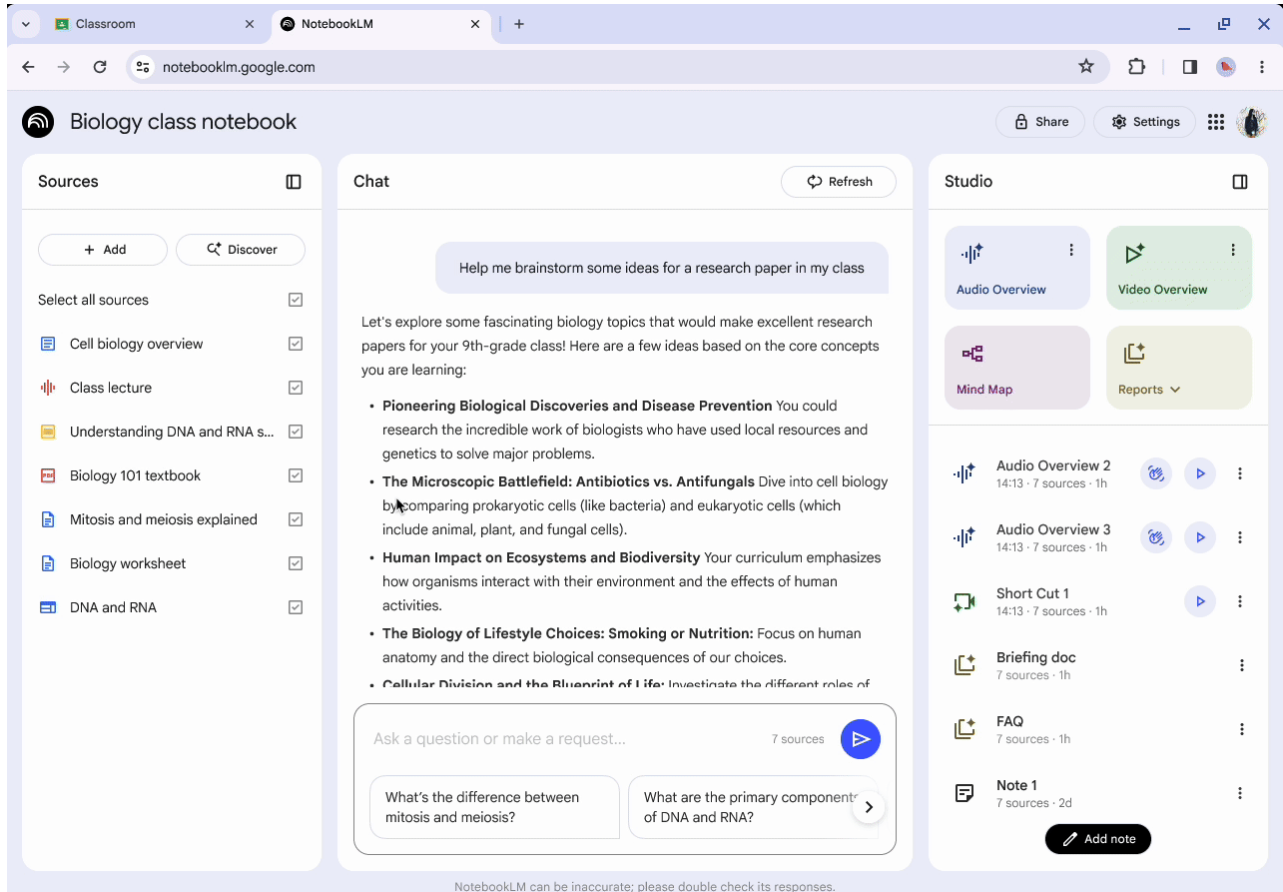

Google used the summit to outline how it is approaching product design for younger users, including built-in safeguards across Search, YouTube, and Gemini.

Mindy Brooks, Vice President, Product Management at Google, described the focus on practical tools for families, saying: “How do we take this medium and use it in powerful, helpful ways for kids to help them learn, help them grow, help them explore online – and to do so in a safe way?”

She pointed to features such as default safety settings, parental controls through Family Link, and wellbeing tools including screen time limits and “take a break” reminders.

At the same time, Google confirmed a $20 million global initiative to support teen digital wellbeing, including the development of a multilingual resource center and curriculum focused on online behavior, AI use, and digital resilience.

From protection to participation

A consistent thread across sessions was the shift from protecting young users to actively involving them in how technology is shaped.

Özkul highlighted the need for structured approaches such as Child Rights Impact Assessments, which evaluate how AI systems affect different aspects of young people’s lives, from privacy to education.

Mishkin captured the longer-term implication of these decisions, stating: “Every line of code is planting a seed. What you plant will grow in the hearts and minds of people. Safety can’t be a checkbox.”

For the EdTech sector, the direction is clear. AI is no longer a future consideration in classrooms or youth environments. It is already embedded. The challenge now is how systems are designed, how skills are developed, and how young people are supported to use these tools critically rather than passively.
