Google DeepMind and Kaggle open AGI benchmark contest with $200,000 prize pool

New framework and hackathon shift the AGI conversation from broad claims to measurable cognitive testing, with Google DeepMind asking the research community to build better evaluations for frontier models.

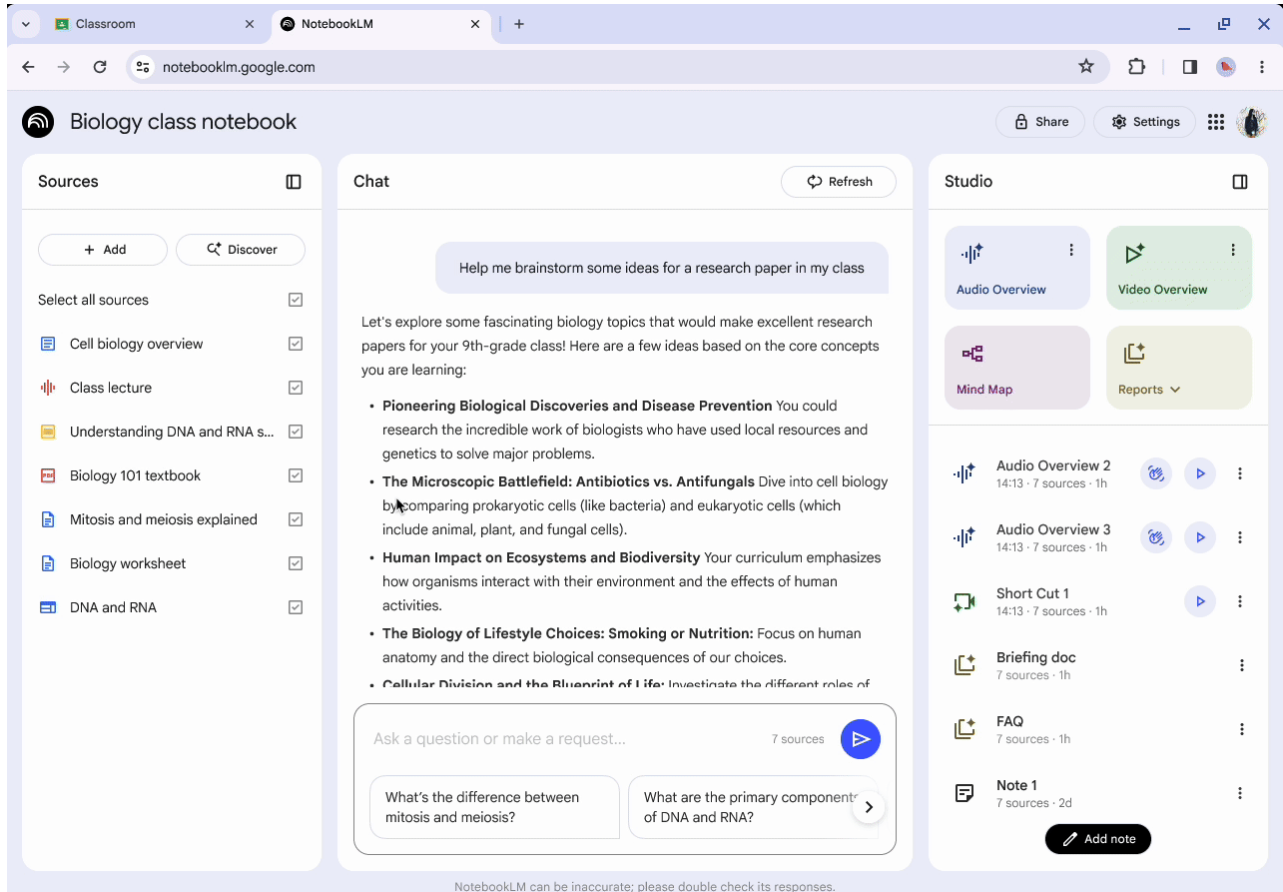

Google DeepMind and Kaggle have launched a new hackathon to build benchmarks for evaluating artificial general intelligence, alongside a research paper setting out a framework for measuring AI systems against human cognitive abilities.

The initiative introduces a structured approach to testing AI performance across areas such as learning, reasoning, attention, and social understanding, as the industry faces ongoing questions about how progress toward more general AI should be defined and measured.

The hackathon, titled "Measuring progress toward AGI: Cognitive abilities," is open from March 17 to April 16 and offers a total prize pool of $200,000 for participants designing new evaluation methods.

Neil Hoyne, Chief Strategist, Author and Advisor at Google, Wharton, and Penguin Random House, wrote in a LinkedIn post that the work is “not about who has the biggest AI model.” He said it is “an effort to find out if these models have the capabilities, intuition and focus to navigate the world like we do.”

Framework sets out 10 cognitive abilities for AI evaluation

Alongside the hackathon, Google DeepMind has published a paper, "Measuring Progress Toward AGI: A Cognitive Taxonomy," which defines 10 cognitive abilities the company says are relevant to assessing general intelligence.

These include perception, generation, attention, learning, memory, reasoning, metacognition, executive functions, problem solving, and social cognition.

The framework proposes testing AI systems across a range of tasks linked to these abilities, then comparing performance against human baselines. The approach is designed to move evaluation beyond single benchmark scores and toward a more detailed view of how systems perform across different types of cognitive activity.
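As a rough illustration of what a per-ability view might look like, the sketch below keeps a separate model-versus-human gap for each ability instead of collapsing everything into one score. It is not taken from the paper: the `AbilityResult` type, the 0-to-1 score scale, the simple difference used as the gap, and every number are assumptions made for illustration only.

```python
from dataclasses import dataclass

# The ten abilities named in the article's summary of the taxonomy.
ABILITIES = [
    "perception", "generation", "attention", "learning", "memory",
    "reasoning", "metacognition", "executive functions",
    "problem solving", "social cognition",
]


@dataclass
class AbilityResult:
    """Hypothetical record: one ability, scored for a model and for humans."""
    ability: str
    model_score: float     # assumed mean task score in [0, 1]
    human_baseline: float  # assumed mean human score in [0, 1]

    @property
    def gap(self) -> float:
        # Positive means the model scored above the human baseline.
        return self.model_score - self.human_baseline


def cognitive_profile(results):
    """Map each ability to its gap, keeping abilities separate."""
    return {r.ability: round(r.gap, 3) for r in results}


def below_baseline(results):
    """List abilities where the model trails the human baseline."""
    return [r.ability for r in results if r.gap < 0]


# Made-up scores, purely to show the shape of the output.
results = [
    AbilityResult("reasoning", 0.82, 0.75),
    AbilityResult("learning", 0.40, 0.70),
    AbilityResult("social cognition", 0.55, 0.80),
]

profile = cognitive_profile(results)
weak = below_baseline(results)
print(profile)
print(weak)
```

With these invented numbers, a single averaged score would hide the fact that the model leads on reasoning while trailing on learning and social cognition, which is the kind of distinction a per-ability profile is meant to expose.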

Google DeepMind states that current evaluation methods often fail to distinguish between models that rely on memorized patterns and those that can adapt to new problems.

Kaggle hackathon targets gaps in current testing methods

The hackathon focuses on five areas where Google DeepMind says evaluation methods are currently limited: learning, metacognition, attention, executive functions, and social cognition.

Participants are asked to build benchmarks using Kaggle’s Community Benchmarks platform, creating tasks that test how AI systems handle new information, manage attention, plan actions, and interpret social context.

The competition includes two $10,000 awards for each of the five tracks, as well as four $25,000 grand prizes for the highest-scoring submissions overall. Judging will take place between April 17 and May 31, with results expected on June 1.
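The prize breakdown can be checked against the stated $200,000 pool with a few lines of arithmetic. The figures and track names come from the article; the variable names are just illustrative.

```python
# The five hackathon tracks listed in the article.
TRACKS = [
    "learning", "metacognition", "attention",
    "executive functions", "social cognition",
]

AWARDS_PER_TRACK = 2       # two awards in each track
TRACK_AWARD_USD = 10_000   # each worth $10,000
GRAND_PRIZES = 4           # four grand prizes overall
GRAND_PRIZE_USD = 25_000   # each worth $25,000

# 5 tracks x 2 awards x $10,000
track_total = len(TRACKS) * AWARDS_PER_TRACK * TRACK_AWARD_USD
# 4 grand prizes x $25,000
grand_total = GRAND_PRIZES * GRAND_PRIZE_USD
prize_pool = track_total + grand_total

print(f"Track awards: ${track_total:,}")
print(f"Grand prizes: ${grand_total:,}")
print(f"Total pool:   ${prize_pool:,}")
```

The track awards and grand prizes each account for half of the pool.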

Hoyne wrote that participants will be able to “help build a more honest way to test AI” by creating evaluations that reflect “real human skills - like how we learn on the fly or how we understand social cues.”

Focus shifts from model performance to measurement standards

The release places greater emphasis on how AI systems are assessed rather than how they are developed, at a time when comparisons between models are often based on narrow or inconsistent benchmarks.

Google DeepMind says new evaluations are needed to identify where systems perform reliably and where limitations remain, particularly in areas linked to reasoning, adaptation, and social interaction.

For developers and researchers, the hackathon positions benchmark design as a key part of AI development, with the results expected to contribute to future evaluation standards across the sector.
