University of Pennsylvania researchers detail how AI is reshaping math research workflows

Professors outline how AI-generated proofs, faster iteration cycles, and new risks are changing how research is conducted, reviewed, and taught.

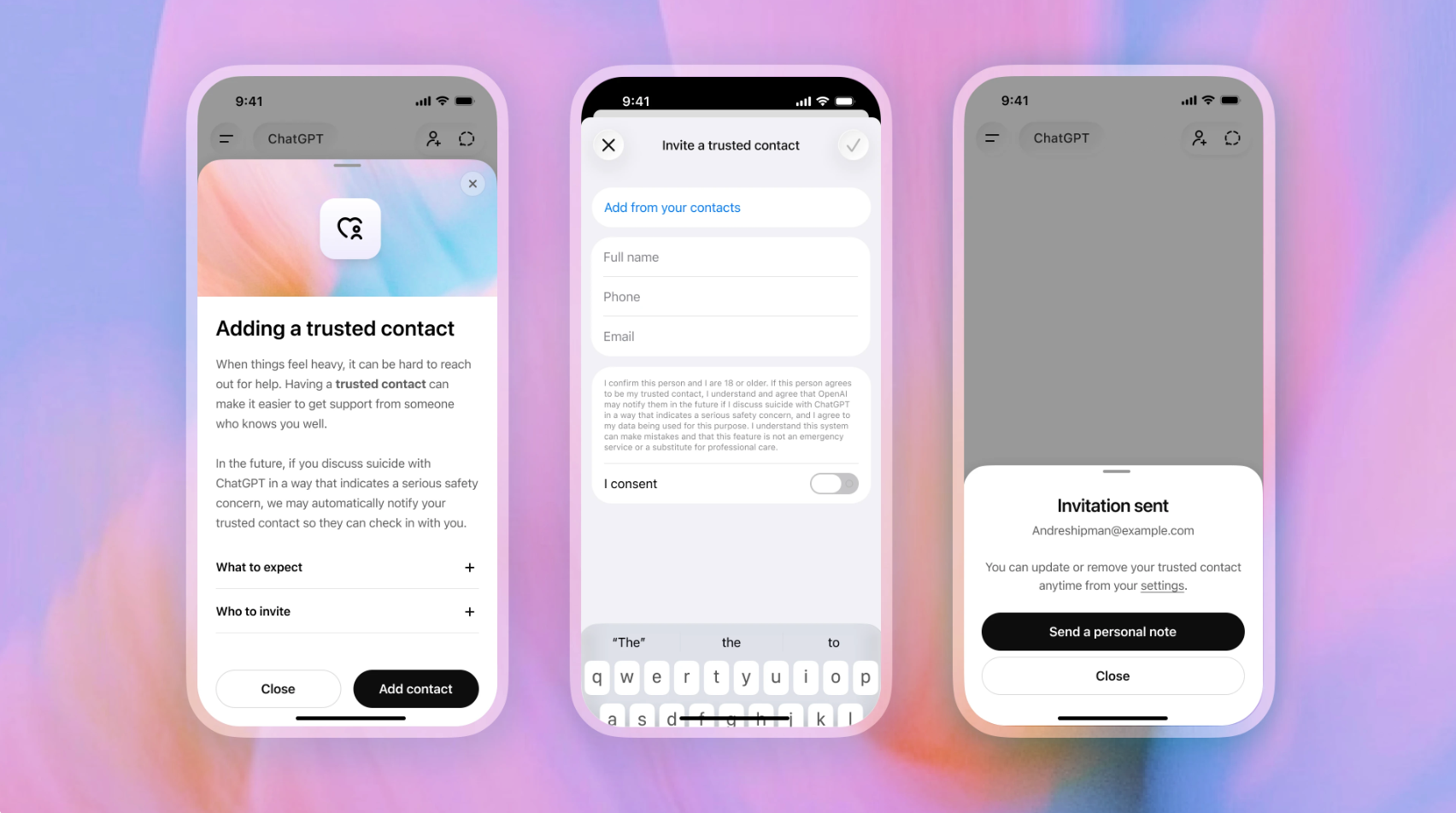

Michael Kearns (left) and Aaron Roth (right)

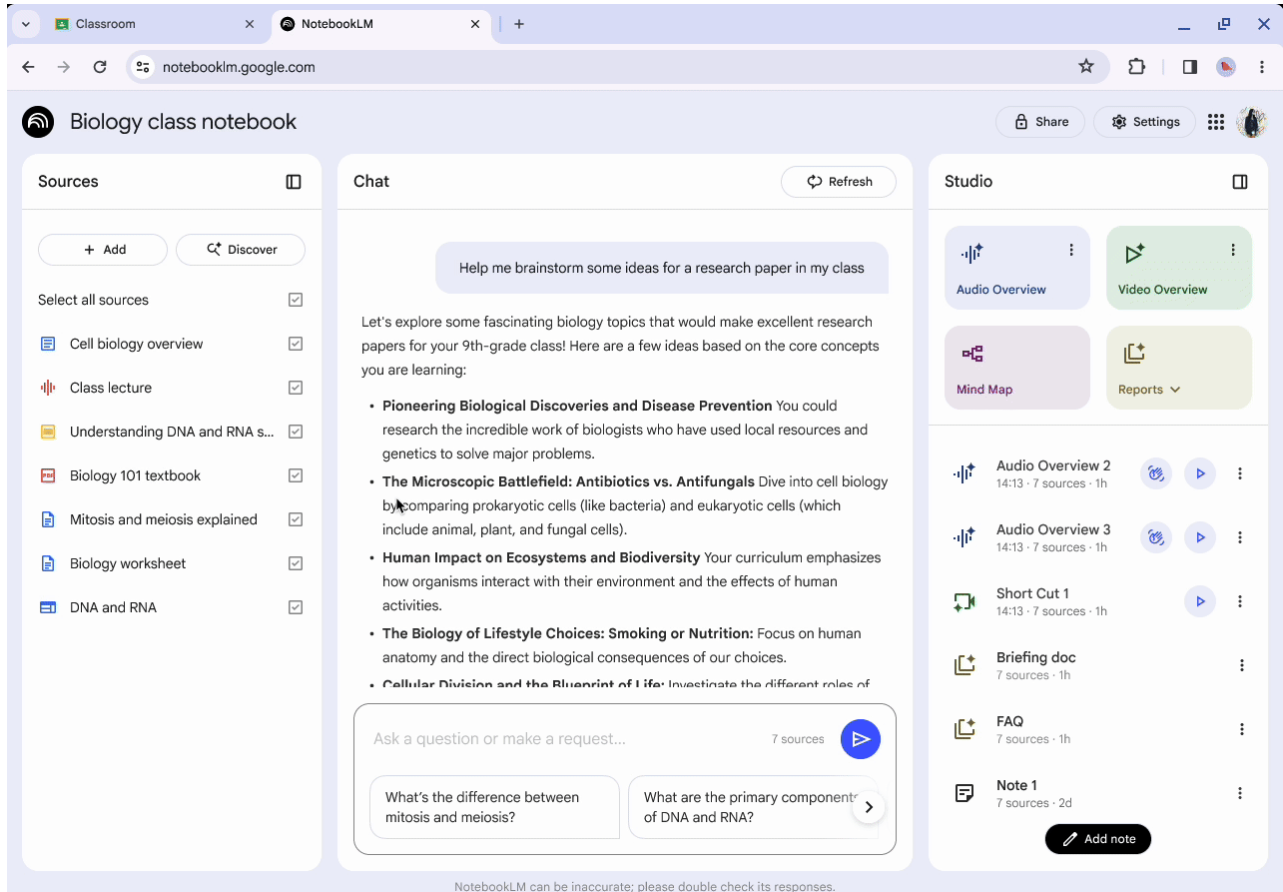

Researchers at the University of Pennsylvania, writing via Amazon Science, set out how AI tools are beginning to change the way mathematical research is conducted, from generating formal proofs to altering how early-career scientists develop expertise.

Michael Kearns and Aaron Roth, both professors at the University of Pennsylvania and Amazon Scholars at Amazon Web Services, describe a shift in which AI systems can now translate high-level ideas into fully structured mathematical arguments. The development points to a broader change across EdTech and research, where AI is moving from support tool to active collaborator.

They write: “AI tools are now able to develop and write rigorous mathematical proofs only from prompts providing high-level proof sketches.”

AI moves from assistant to research collaborator

Kearns and Roth describe how their own work changed during a recent project in which they used agentic AI tools to produce a 50-page research paper in a matter of weeks rather than months.

The process involved prompting AI systems with high-level concepts and allowing them to generate formal proofs in established mathematical languages. They write: “Working with proof-based AI tools is akin to collaborating with a smart, broadly educated but occasionally error-prone colleague.”

In one example, the researchers provided a conceptual outline of a proof, and the AI translated it into formal definitions and arguments. While the outputs required correction, they note that the system produced “a great first draft that could then be corrected and smoothed.”

The researchers also highlight that AI is beginning to contribute beyond execution. They write that in some cases, the systems introduced “key ideas that were crucial to the final results,” suggesting a shift toward partial co-creation rather than simple automation.

At the same time, reliability remains a constraint. They note that generated proofs are “correct perhaps only three-quarters of the time,” requiring expert oversight to identify and resolve errors before continuing.

They warn that without that oversight, outputs can become “AI research slop — polished-looking but ultimately flawed or uninteresting work.”
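To see why errors need to be caught before continuing, consider a rough back-of-the-envelope sketch. It takes the researchers' "three-quarters" figure as a per-step success rate and assumes, purely for illustration, that each generated step is correct independently of the others and that nothing is reviewed along the way. Neither assumption comes from Kearns and Roth, and the function name is hypothetical.

```python
def chain_success_probability(per_step: float, steps: int) -> float:
    """Probability that every step in an unreviewed chain is correct.

    Assumes (for illustration only) that each step is correct
    independently with probability `per_step`.
    """
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps


if __name__ == "__main__":
    # 0.75 is the "three-quarters" figure quoted in the article.
    for steps in (1, 2, 5, 10):
        p = chain_success_probability(0.75, steps)
        print(f"{steps:2d} unreviewed steps: {p:.1%} chance all are correct")
```

Under these simplified assumptions, a chain of five unreviewed steps has roughly a one-in-four chance of being correct throughout, which is one way to read the researchers' point that expert checking has to happen at each stage rather than only at the end.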

Faster research cycles raise training concerns

The acceleration of research workflows is creating tension around how future scientists are trained. Kearns and Roth point out that traditional expertise in mathematical sciences is built through sustained effort and problem-solving over time. They write: “Historically, people earn expertise in the mathematical sciences through struggle as junior researchers.”

They argue that AI tools are now removing many of those formative steps: “If doctoral students can simply ask AI for proofs — which is extremely tempting — how do they develop the experience and skill that, for now at least, are required to use AI tools productively in the first place?”

The researchers suggest that institutions may need to split training into phases, requiring early-career researchers to work without AI before they are introduced to advanced tools. They compare this to earlier shifts in computing, where developers moved away from low-level programming but still needed to understand the underlying systems.

They say that future research culture may place more emphasis on “taste, problem selection, and modeling skills” rather than technical execution alone.

Peer review systems face growing pressure

Beyond individual workflows, the researchers highlight pressure on the broader research ecosystem, particularly peer review.

They argue that the core function of peer review is to direct attention within the scientific community, not just verify correctness: “Rather, its purpose is to focus a scarce resource — the attention of the research community — in the right places.”

AI-generated papers are complicating that process. The researchers note that tools now make it easier to produce work that appears credible, increasing submission volumes and placing additional strain on reviewers.

They write: “Reducing the time and effort needed to produce ‘a paper’ — not necessarily a good paper — is beginning to destabilize our existing institutions for peer review.”

They also point to the emergence of parallel systems, including AI tools designed to detect AI-generated research, creating what they describe as a cycle similar to “technological arms races.”

As a potential response, they highlight automated verification tools, such as formal proof checking, as a way to filter out incorrect work before human review.
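For readers unfamiliar with formal proof checking, the toy example below gives a sense of what it involves. It is written in Lean 4, which is an assumption made here for illustration; the article does not say which proof language the researchers used, and the theorem name is hypothetical. The checker accepts a statement only if the supplied proof actually establishes it, so an incorrect argument fails to compile instead of reaching a human reviewer.

```lean
-- A toy statement: addition of natural numbers is commutative.
-- The checker accepts this because the library lemma `Nat.add_comm`
-- proves exactly the stated claim.
theorem add_comm_example (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- A concrete arithmetic fact, verified by direct computation.
example : 2 + 2 = 4 := rfl
```

A check like this confirms only that a proof is valid, not that the result is interesting, which is consistent with the researchers presenting verification as a filter ahead of human review and not a replacement for it.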

They comment that without broader changes, “AI threatens to arrest scientific progress at the community level even as it accelerates it at the level of individual researchers.”

Research systems enter a period of rapid change

Kearns and Roth conclude that AI is driving a structural shift across research methodology, training, and publishing: “We think AI is bringing a sea change in scientific research methodology, training, and peer review; there is no hiding from what is coming.”

They also point to the pace of that shift: “We’ve seen more change in the past year than in the previous decade.”

The researchers frame the challenge as institutional rather than purely technical. Systems such as peer review, publishing, and graduate training were built around human time, effort, and cognitive limits. Those constraints are now changing, but the structures built on top of them have not yet caught up.

They argue that the next phase will depend on how those systems respond. The opportunity is to use AI to expand access to research, accelerate discovery, and enable more people to participate. The risk is that speed and scale begin to outweigh judgment, quality, and relevance.

For now, the direction is clear. AI is already embedded in how research is produced. The question is how quickly the surrounding systems adapt.
