OpenAI releases teen AI safety tools for developers as scrutiny of youth protection grows

New prompt-based safety policies aim to help developers build age-appropriate AI systems, with the release coming amid wider debate over platform responsibility and recent product changes.

OpenAI has released a new set of AI safety tools designed to help developers build stronger protections for teenagers, as scrutiny grows over how AI systems are deployed to younger users across education, social platforms, and consumer apps.

Shared by Chief Global Affairs Officer Chris Lehane on LinkedIn, the update introduces prompt-based safety policies built to work with OpenAI’s open-weight safety model, gpt-oss-safeguard. The tools are designed to make it easier for developers to apply consistent safeguards in real-world systems, particularly in areas where defining risk has historically been difficult.

Lehane said: “At OpenAI, we believe AI knows a lot, but parents know best. That’s why we’re releasing new tools to help developers incorporate teen safety protections directly into their applications using our open-weight safety models.”

He added: “Today’s teens are the first generation growing up with AI. We have a chance to avoid repeating the mistakes made during the rise of social media, when key guardrails often came only after products were already deeply embedded in young people’s lives.”

Prompt-based policies aim to standardize safety implementation

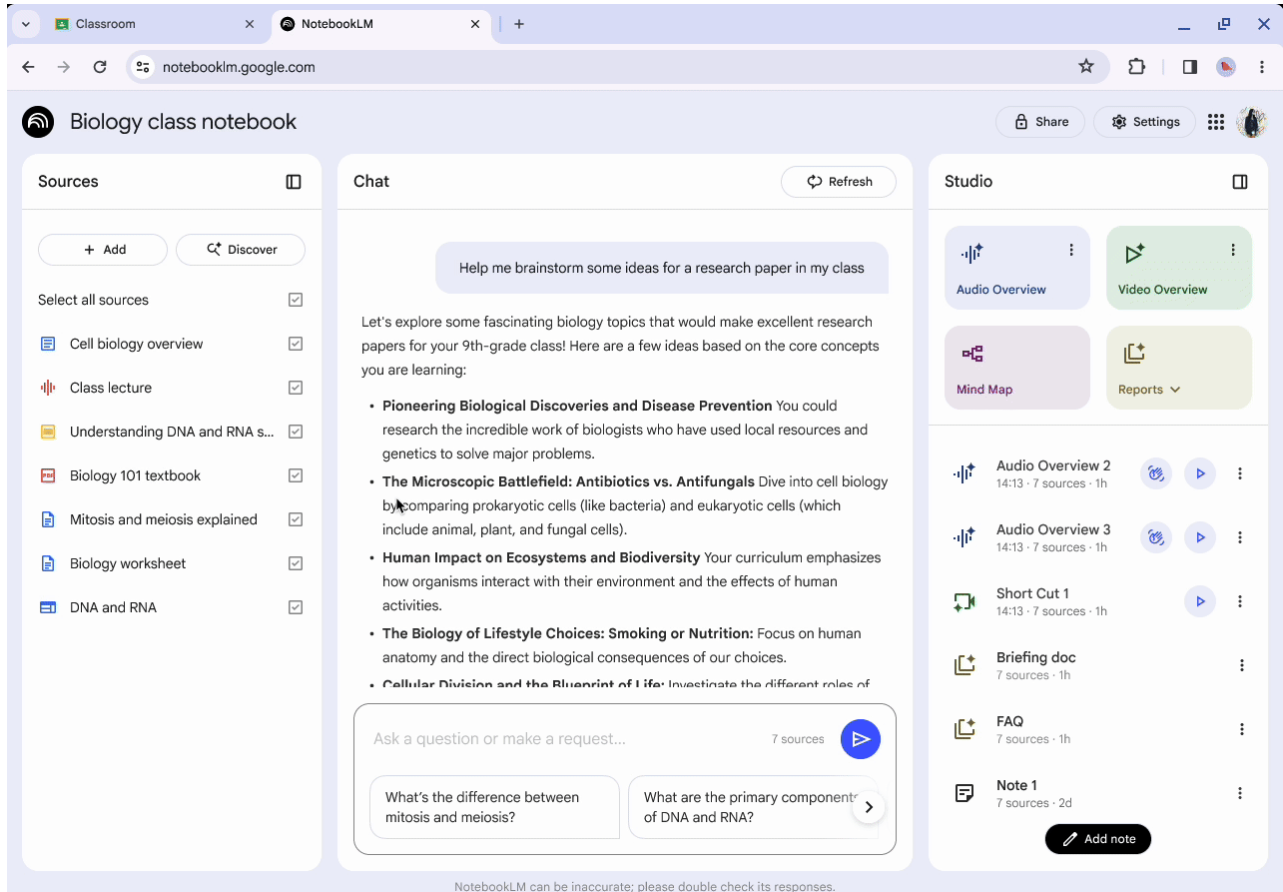

The release focuses on a practical challenge in AI safety: translating high-level principles into systems that can be applied consistently. OpenAI’s new policies are written as prompts that can be supplied directly to a model, allowing developers to turn safety requirements into operational rules.

Because they are written for gpt-oss-safeguard, the policies can be used both for real-time content filtering and for offline analysis of user-generated content.
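To make the mechanism concrete, here is a minimal Python sketch of how a developer might wire a policy prompt into a classifier call. It assumes, rather than documents, several details: the policy travels in the system message, the content to check goes in the user message, and the model returns a one-word verdict. The `TEEN_POLICY` text, the `SAFE`/`VIOLATES` labels, and the `keyword_stub` function (which stands in for a real call to gpt-oss-safeguard) are all hypothetical; the published policies and the model's actual output format should be checked before building on this.

```python
from dataclasses import dataclass
from typing import Callable

Messages = list[dict[str, str]]
# A "model" here is any function that takes chat messages and returns text.
# In practice this would wrap a call to gpt-oss-safeguard; here it is hypothetical.
Model = Callable[[Messages], str]

# Hypothetical placeholder policy, NOT the text of OpenAI's published teen policies.
TEEN_POLICY = (
    "You classify content shown to users aged 13-17.\n"
    "VIOLATES: content that encourages dangerous challenges or stunts.\n"
    "SAFE: everything else.\n"
    "Reply with exactly one word: SAFE or VIOLATES."
)


@dataclass
class Verdict:
    label: str
    allowed: bool


def build_messages(policy: str, content: str) -> Messages:
    # Assumed convention: policy in the system turn, content under review in the user turn.
    return [
        {"role": "system", "content": policy},
        {"role": "user", "content": content},
    ]


def classify(content: str, policy: str, model: Model) -> Verdict:
    reply = model(build_messages(policy, content)).strip().upper()
    # Fail closed: anything other than an explicit SAFE is treated as a violation.
    label = "SAFE" if reply.startswith("SAFE") else "VIOLATES"
    return Verdict(label=label, allowed=(label == "SAFE"))


def filter_realtime(message: str, model: Model) -> str:
    # Real-time mode: check one message before it reaches the user.
    return message if classify(message, TEEN_POLICY, model).allowed else "[removed]"


def audit_offline(posts: list[str], model: Model) -> list[int]:
    # Offline mode: scan stored user-generated content and return flagged indices.
    return [i for i, post in enumerate(posts) if not classify(post, TEEN_POLICY, model).allowed]


def keyword_stub(messages: Messages) -> str:
    # Hypothetical stand-in for the safety model so the sketch runs without one.
    return "VIOLATES" if "challenge" in messages[-1]["content"].lower() else "SAFE"


if __name__ == "__main__":
    print(filter_realtime("Try this dangerous challenge tonight", keyword_stub))  # [removed]
    print(audit_offline(["hello", "new challenge video", "homework help"], keyword_stub))  # [1]
```

The same `classify` call serves both modes the article describes: `filter_realtime` checks a single message before it is shown, while `audit_offline` scans a batch of stored posts and returns the indices it would flag. The sketch fails closed, treating any reply that does not start with `SAFE` as a violation, which is a design choice rather than documented model behavior.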

They cover a defined set of risk areas for teenage users, including sexual content, violent material, harmful body ideals, dangerous challenges, and inappropriate roleplay. Additional areas include access to age-restricted goods and services, reflecting concerns about how AI systems may influence behavior beyond content consumption.

OpenAI says one of the main barriers for developers has been the lack of clear definitions around what constitutes harmful content in a teen context. The company notes that even experienced teams struggle to turn policy into enforceable systems, leading to inconsistent moderation or gaps in protection.

Built with external input and released as open source

The policies were developed with input from external organizations including Common Sense Media and everyone.AI, with a focus on aligning technical implementation with research into adolescent development.

OpenAI is releasing the policies as open source, with the intention of encouraging wider adoption and iteration across the AI ecosystem. Developers can adapt the prompts to their own applications, extend them to cover additional risks, and translate them into different contexts.

External contributors also highlighted the need for more structured approaches to safety.

Robbie Torney, Head of AI & Digital Assessments at Common Sense Media, says: “One of the biggest gaps in AI safety for teens has been the lack of clear, operational policies that developers can build from. Many times, developers are starting from scratch. These prompt-based policies help set a meaningful safety floor across the ecosystem, and because they're released as open source, they can be adapted and improved over time. We're encouraged to see this kind of infrastructure being made available broadly, and we hope it catalyzes more shared youth-safety starting points across the industry.”

Dr. Mathilde Cerioli, Chief Scientist at everyone.AI, adds: “Efforts like this that make youth safety policies more operational are valuable because they help translate expert knowledge into guidance that can be used in real systems. Content policies are an important first step, and they also open the door to broader work on how model behavior can shape youth-relevant risks over time. Inspired by this work and our own research, everyone.AI has also created an initial behavioral policy focused on risks like exclusivity and overreliance.”

Part of a broader shift toward built-in safeguards

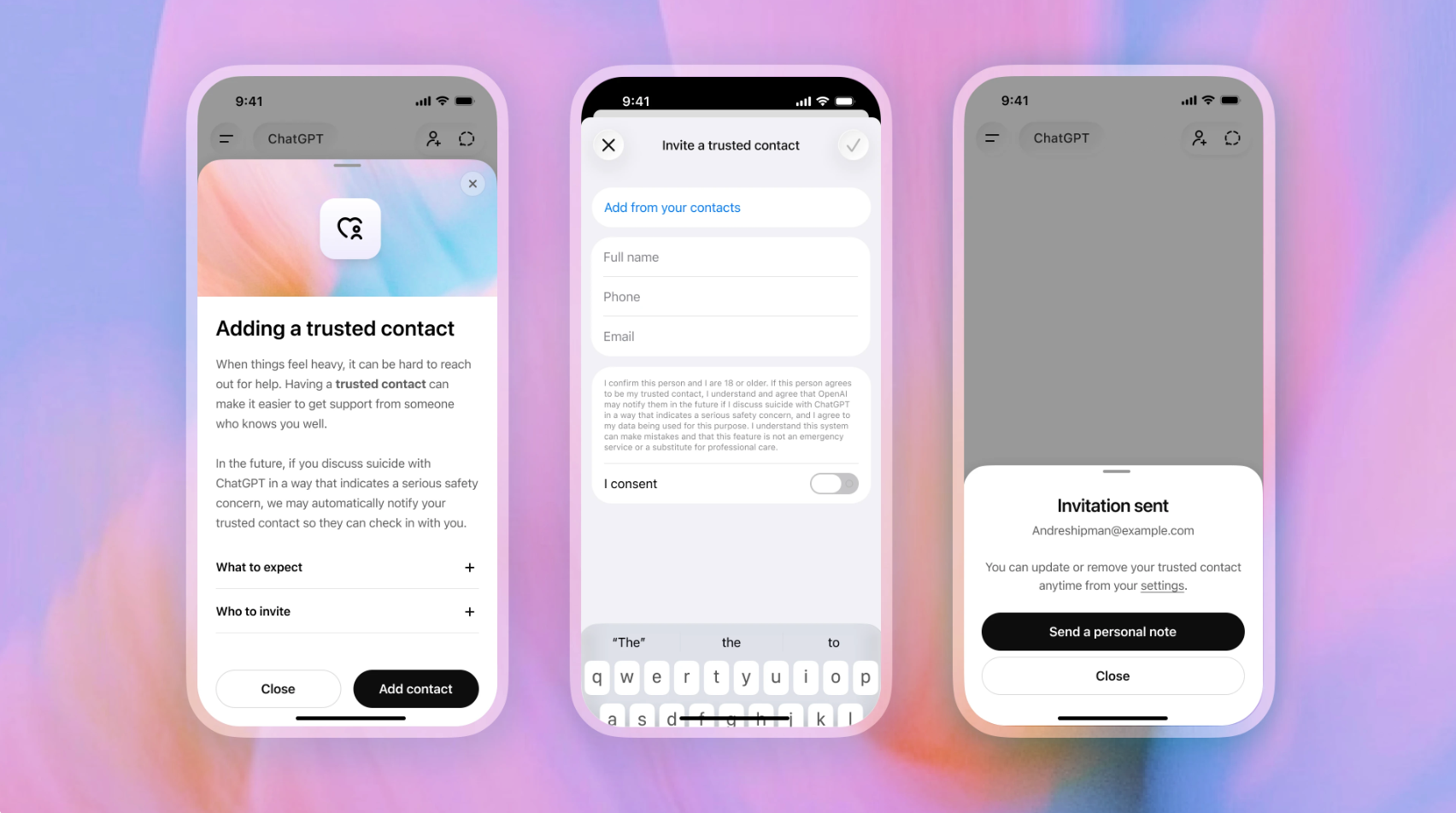

The release builds on a wider set of safety measures OpenAI has introduced over the past year, including updates to its Model Spec that add protections for users under 18, as well as parental controls, age prediction systems, and regional Teen Safety Blueprints.

Lehane said the aim is to move safety earlier in the development cycle rather than treat it as a later-stage fix: “Strong safeguards for teens should be built in at the beginning, not bolted on later.”

He added: “We’ve also introduced safeguards like parental controls and age prediction, published a series of Teen Safety Blueprints in multiple nations, and are working with lawmakers, parents, and educators to develop clearer standards and stronger protections for teens using AI.”

The tools are also positioned as part of a layered approach, with OpenAI encouraging developers to combine prompt-based policies with product design decisions, monitoring systems, and user controls.

Lehane said: “We recognize that these policies are a starting point, not a complete solution. Developers are best positioned to understand the risks their products may present.”

Sora context highlights timing of safety focus

The safety release comes just days after OpenAI outlined how it was strengthening protections within its Sora AI video platform, including stricter moderation for teen users, content filtering across video and audio outputs, and controls around likeness and consent.

Shortly after those updates, OpenAI confirmed it is shutting down the Sora app and ending its partnership with Disney, bringing an abrupt end to one of its most visible consumer AI products.

While the company has not directly linked the decisions, the timing places additional focus on how AI products are developed, moderated, and scaled, particularly when younger users are involved.